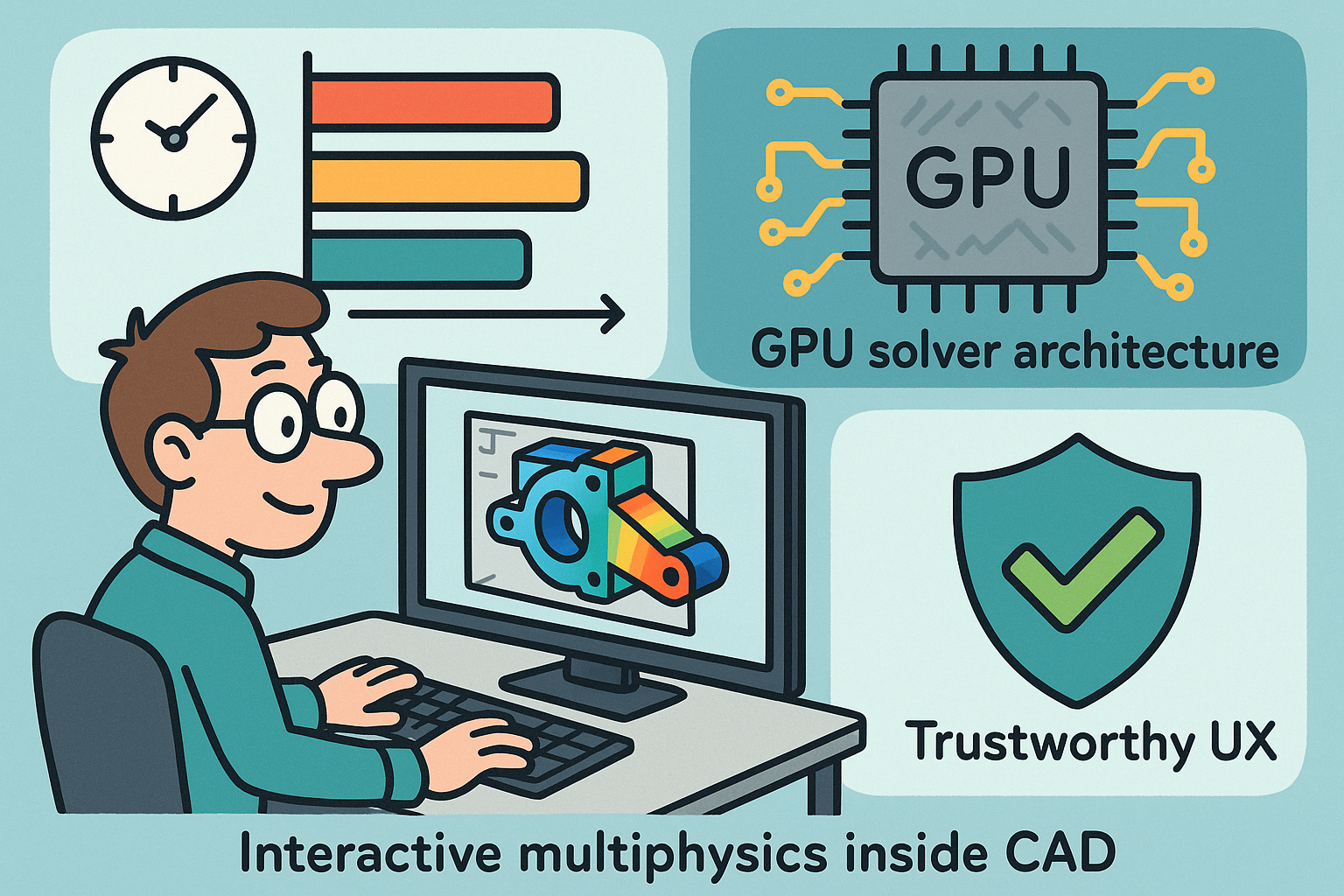

Interactive Multiphysics Inside CAD: Latency Tiers, GPU Solver Architecture, and Trustworthy UX

March 10, 2026 13 min read

Why “real-time” multiphysics inside CAD, and what it really means

Target latencies and UX tiers

“Real-time” is not a single number; it is a set of perceptual and cognitive thresholds that align solver throughput with the designer’s attention span. In a CAD viewport, three latency bands define whether the system feels like a design instrument or a batch tool with colorful distractions. Motion-driven cues such as streamlines that follow a cursor drag or stress streaks that bend under a grip-edit must refresh within the human motion-prediction window—roughly one to two refresh intervals—so sustaining 16–33 ms per frame keeps interaction continuous and intent-preserving. When the user commits a small parametric nudge—moving a fillet radius, tweaking an inlet plenum taper—sub-0.5 s updates sustain a cognitive “thread of thought,” enabling immediate cause–effect mapping. For more substantial edits—changing a topology branch, flipping a boundary condition, swapping a material—the standard for trust shifts to localized consistency rather than global completion; the system should deliver a locally converged field within 1–5 s, then progressively refine. These thresholds are the contract between physics fidelity and flow-state. They also partition compute into tiers: ultra-fast preview kernels that never block input, mid-latency insight passes that honor key conservation laws, and background refinements that raise confidence without hijacking agency. Strategy matters because “real-time” without guarantees is just animation. The aim is to deliver **engineering insight at interaction speed**, not merely pleasing motion, and that demands disciplined latency budgets tied to visible and quantitative quality-of-interest (QoI) tolerances exposed to the user.

- Visual feedback: 16–33 ms/frame to maintain continuous motion cues and stable proprioception.

- Engineering insight: under 0.5 s for incremental, parametric edits to keep mental continuity.

- Local convergence: 1–5 s after topology or boundary-condition shifts to reestablish trust anchors.
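The three bands work as a small scheduling contract. The sketch below assumes each edit has already been sorted into one of three hypothetical categories (`EditKind`). It then plans the passes owed to that edit, cheapest first. The millisecond values are the upper ends of the bands above. Everything else is illustrative and does not describe any particular CAD product.

```python
from enum import Enum
from typing import List, Tuple


class EditKind(Enum):
    DRAG = "drag"            # continuous cursor drag or grip-edit
    PARAM_NUDGE = "param"    # small parametric change (fillet radius, taper)
    TOPOLOGY = "topology"    # topology branch, BC flip, or material swap


# Upper bound of each latency band from the text, in milliseconds.
TIER_BUDGET_MS = {"visual": 33.0, "insight": 500.0, "local_convergence": 5000.0}
TIER_ORDER = ["visual", "insight", "local_convergence"]


def required_tier(edit: EditKind) -> str:
    """The tier whose budget an update for this edit must meet."""
    return {
        EditKind.DRAG: "visual",
        EditKind.PARAM_NUDGE: "insight",
        EditKind.TOPOLOGY: "local_convergence",
    }[edit]


def plan_passes(edit: EditKind) -> List[Tuple[str, float]]:
    """Passes to schedule, cheapest first, each paired with its deadline in ms.

    Every edit gets an immediate visual preview. Heavier edits queue the
    slower tiers behind it, so input is never blocked waiting for them.
    """
    last = TIER_ORDER.index(required_tier(edit))
    return [(tier, TIER_BUDGET_MS[tier]) for tier in TIER_ORDER[: last + 1]]


def met_budget(edit: EditKind, elapsed_ms: float) -> bool:
    """True if an update arrived inside the budget of the edit's required tier."""
    return elapsed_ms <= TIER_BUDGET_MS[required_tier(edit)]
```

For a topology edit, `plan_passes` yields a 33 ms visual pass, then a 500 ms insight pass, then a 5 s local-convergence pass. Background refinement would be queued after these with no deadline. A missed budget is a natural trigger for falling back to a cheaper preview kernel instead of blocking input.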

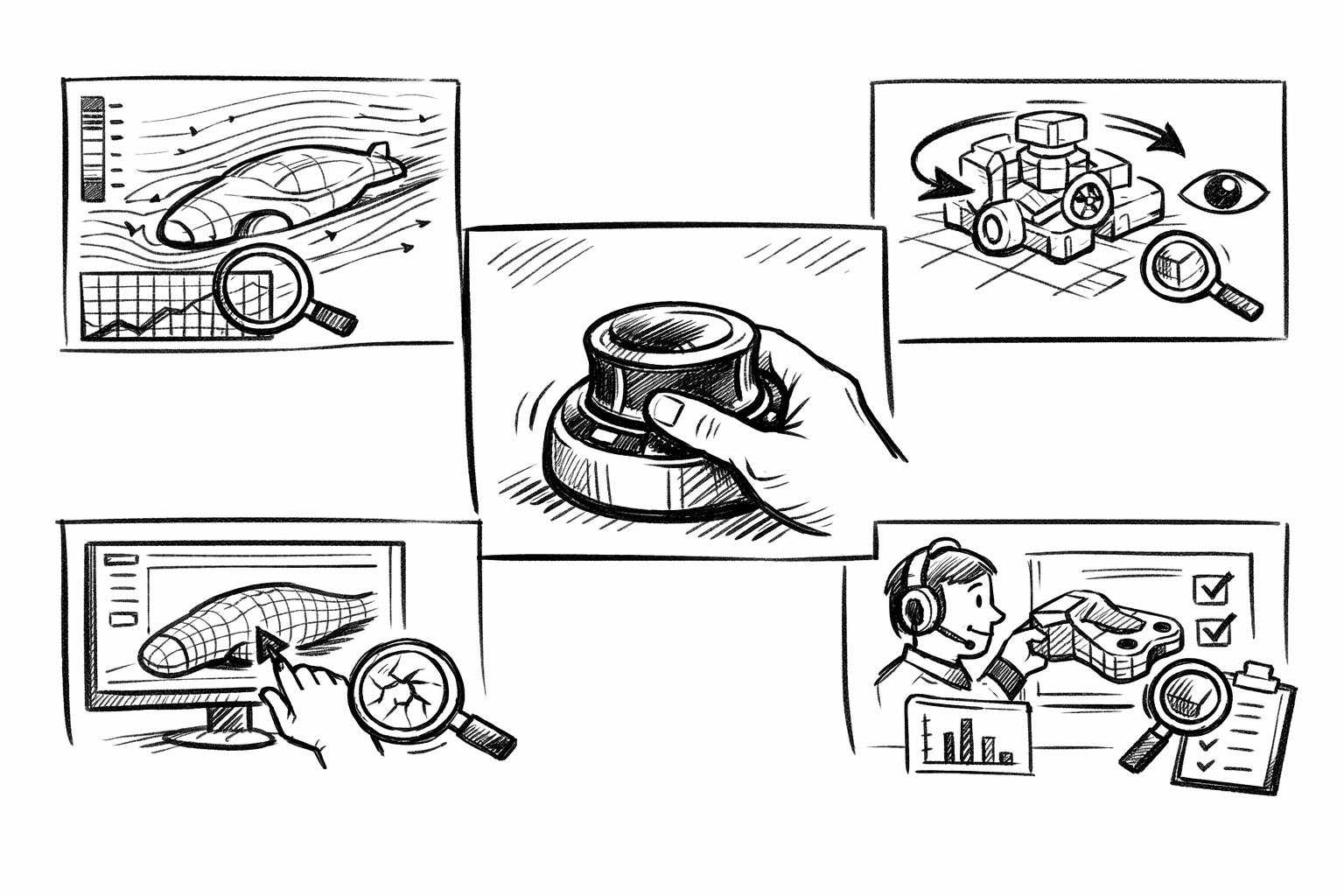

High-impact use cases

Not every physics problem demands frame-budget responsiveness, but several recurring design tasks benefit disproportionately when **multiphysics lives inside the modeling loop**. In electronics cooling, you can adjust baffles, sinks, and perforations while watching pressure drop and temperature plumes settle. That removes the old ping-pong between CAD and external solvers and steers the layout toward fewer hotspots and lower fan-power requirements. For vents, valves, and aero surfaces, the stiffness–shape interplay is decisive: sections thicken, cutouts migrate, and seals compress, changing both the flow path and the structure’s load path; instant **fluid–structure interaction (FSI)** feedback turns hesitant trial-and-error into confident shaping. Thermo-mechanical warpage during DFM iterations for injection-molded parts is another high-payoff category: small gate moves, rib tapering, or core/cavity steel swaps can be scored immediately against post-eject flatness and residual stress. In early architecture, façade massing responds to pedestrian wind comfort, urban canyons, and solar gains; seeing gust-driven recirculation and daily insolation overlays while pushing vertices changes how envelopes emerge. Finally, packaging and ergonomics hinge on dynamics with contact and compliance: hinge friction, latch snaps, and soft interfaces need quick reads on clearances and force peaks as assemblies articulate. These applications share one property: the design variables are in the modeler’s hands, and the multiphysics tradeoffs are local, numerous, and time-sensitive. Putting analysis in the same viewport lowers the marginal cost of exploration to nearly zero. That is where originality and safety margins grow together rather than compete.

- Fluid–thermal co-simulation: steer baffles, heat sinks, and perforations while tracking pressure drop and temperature hotspots as color fields and probe charts.

- FSI for vents/valves/aero: co-evolve geometry and stiffness; plot lift/drag and seal compression vs. edits with live residual bars.

- Thermo-mechanical warpage: gate/rib/mold strategy tweaks scored against predicted flatness deviation and stress anisotropy in seconds.

- Architectural wind and solar: early massing guided by comfort metrics, gust maps, and sun-hours overlays tied to façade sliders.

- Mechanism dynamics with contact: quick compliance-aware kinematics for packaging sweep, pinch forces, and wear hotspots.

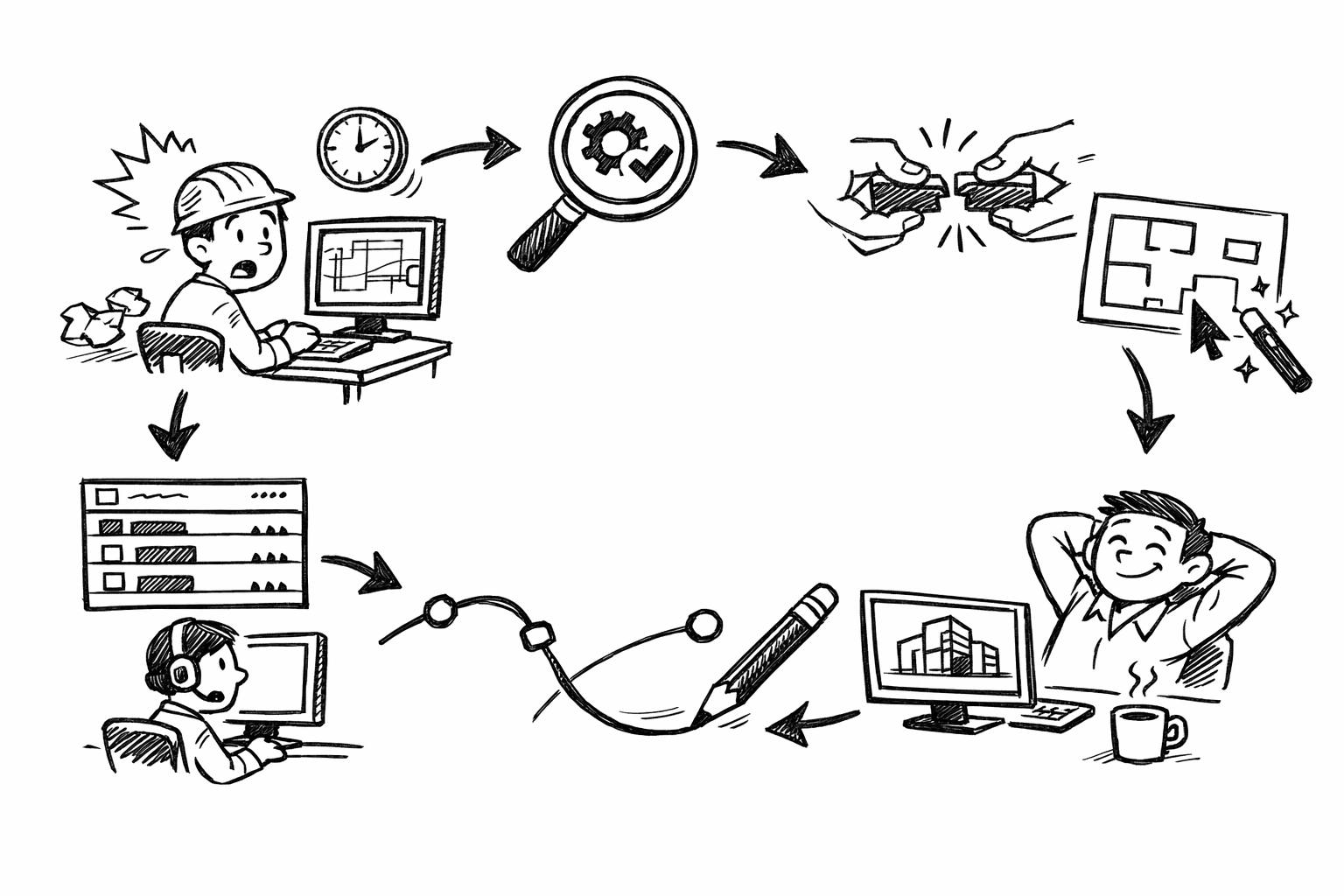

Why inside the CAD viewport

Placing multiphysics directly **inside the CAD viewport** turns analysis from a gatekeeper into a guide. Export–import rituals fracture associativity: parameters, feature histories, and semantic names become brittle payloads that often return with mismatches or are simply lost. Keeping the computation co-resident with the parametric model preserves feature-level intent. When you move a datum or retag a face, both the geometry and its boundary conditions update atomically. This alignment changes behavior: instead of designing, exporting, waiting, and retrospectively validating, designers steer within a feedback-rich neighborhood of feasible options. The economic effect is stark: the marginal cost of one more idea approaches zero when seeing it takes one drag and a half-second refresh. That dynamic encourages broader, earlier exploration, which is precisely when multiphysics tradeoffs are cheapest to reconcile. It also makes collaboration easier: annotations, tolerances, and PMI sit in the same session, so conversations share a single source of truth. Most importantly, co-locating geometry and fields simplifies governance and provenance. Who changed what, which QoIs improved, and whether the result is audit-ready are all tracked without brittle cross-tool stitching. The viewport becomes a multi-sensory instrument that plays fields, derivatives, and constraints together, elevating the dialog from “Is this okay?” to “How can we make this better right now?”

- Eliminates file friction and preserves **parameter/feature associativity** for stable iteration.

- Enables design-space steering instead of post hoc validation pipelines.

- Lowers exploration cost and surfaces multiphysics tradeoffs when they matter most.

Constraints and guardrails

Interactive multiphysics only earns a place on regulated or mission-critical programs if it delivers verification, validation, and auditability as first-class outputs, not afterthoughts. “Looks plausible” fails audits; evidence trails pass them. The system must support reference benchmarks, regression locks, and device-independent determinism where feasible, or at least clearly bounded nondeterminism with reproducibility tooling. It must scale to tens of millions of degrees of freedom without freezing the cursor, which means background work-stealing, priority queues, and graceful degradation under pressure. On the data side, **IP controls and data residency** are nonnegotiable: air-gapped operation, encrypted caches, and controllable retention policies keep export risks contained. The UX itself becomes a guardrail: explicit QoI tolerances, visible residuals, and uncertainty halos prevent overconfidence when the solver is in a reduced or preview mode. Lastly, cross-team collaboration should produce PLM-stamped artifacts—parameter sets, solver configs, and decision notes—automatically, so compliance isn’t a heroic act at the end. These constraints move the offering from demo-ware to dependable infrastructure while preserving the immediacy that makes it valuable.

- V&V hooks: on-demand benchmarks, regression snapshots, and provenance ledgers.

- Scalability: non-blocking scheduling, progressive refinement, and robust preconditioning to keep interaction fluid at large DOF counts.

- Security: on-prem/edge GPUs, strict **data residency**, encryption, and role-based visibility for sensitive programs.
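A hash chain is one way to make a provenance ledger tamper-evident: each entry's digest also covers the previous digest. This is a minimal sketch under that assumption. The class name and record fields are hypothetical; the fields are modeled on the geometry hash, solver version, and precision mode listed in the incremental-update discussion. A production ledger would also need signing, durable storage, and access control.

```python
import hashlib
import json
from typing import List, Tuple


def record_digest(record: dict, prev_digest: str) -> str:
    """SHA-256 over the previous digest plus a canonical JSON form of the record.

    Sorted keys and fixed separators make the digest independent of dict
    insertion order, so identical records always hash identically.
    """
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_digest + payload).encode("utf-8")).hexdigest()


class ProvenanceLedger:
    """Append-only ledger in which each entry commits to every entry before it."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: List[Tuple[dict, str]] = []

    def append(self, record: dict) -> str:
        prev = self.entries[-1][1] if self.entries else self.GENESIS
        digest = record_digest(record, prev)
        self.entries.append((record, digest))
        return digest

    def verify(self) -> bool:
        """Recompute the chain; editing any record breaks its digest and all later ones."""
        prev = self.GENESIS
        for record, digest in self.entries:
            if record_digest(record, prev) != digest:
                return False
            prev = digest
        return True
```

Canonical JSON lets two machines agree on the digest of the same record. Editing any stored record after the fact makes `verify()` fail, which is the property an audit trail needs.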

System architecture: from parametric geometry to GPU solvers

Geometry-to-analysis pipeline

The shortest path from a parametric feature tree to a stable field solution starts by honoring the semantics you already authored. Features, BREP faces, and PMI tags are not just geometry—they are pre-labeled analysis regions and boundary conditions waiting to be mapped. A robust pipeline reads face names like “Inlet_A,” material assignments per body, and feature-level manufacturing notes, then binds them deterministically to analysis regions and loads. Speed begins with tessellation: curvature-aware sizing creates fine elements where principal curvature demands it and coarser zones elsewhere; boundary-layer extrusion on the GPU produces prismatic stacks that respect y-plus targets without stalling the UI. Where meshing would cost too much iteration time, alternatives step in. **Isogeometric analysis (IGA)** reuses NURBS surfaces directly, eliminating geometry–mesh gaps and delivering higher continuity—an asset for thin shells and contact. For rapid iteration in complex topologies, cut-cell and immersed boundary approaches avoid global conforming meshes; you pay a local stabilization tax but you get edits-to-solution latencies that keep thought-flow intact. The pipeline must be reversible: parameter edits trigger selective retessellation and BC migration, not full rebuilds. It also must be inspectable: a per-face/edge report of effective sizes, growth rates, and BC tags builds trust and helps users tune hot spots. In sum, geometry-to-analysis is a semantic wiring task accelerated by GPU tessellation, where the goal is not just mesh creation but preservation of design intent and rapid, deterministic updates.

- Direct mapping from BREP/materials/PMI to regions and BCs via persistent feature IDs.

- Curvature-aware tessellation and GPU boundary-layer extrusion for fast, stable near-wall resolution.

- IGA for smooth shells; immersed/cut-cell methods to dodge costly remeshes during frequent edits.
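A chord-sag tolerance is one way to drive curvature-aware sizing. On a circular arc of radius R, a straight edge of length h deviates from the arc by s = R - sqrt(R² - (h/2)²). The sketch below inverts that relation at each curvature sample, then caps the size ratio between neighbors to honor a growth-rate limit. It is a 1D illustration with hypothetical function names, not a surface mesher. Real tessellators evaluate principal curvatures on BREP faces.

```python
import math
from typing import List


def chord_size(curvature: float, sag_tol: float, h_min: float, h_max: float) -> float:
    """Edge length whose chord deviates from the arc by at most sag_tol.

    For radius R = 1/|curvature|, a chord of length h has sag
    s = R - sqrt(R**2 - (h/2)**2); solving for h gives h = 2*sqrt(2*R*s - s**2).
    """
    k = abs(curvature)
    if k == 0.0:
        return h_max                      # flat region: only the global cap applies
    r = 1.0 / k
    s = min(sag_tol, r)                   # beyond s = R the relation stops growing
    h = 2.0 * math.sqrt(2.0 * r * s - s * s)
    return min(max(h, h_min), h_max)


def limit_growth(sizes: List[float], ratio: float) -> List[float]:
    """Cap the size ratio between neighbors so elements grade smoothly."""
    out = list(sizes)
    for i in range(1, len(out)):              # forward sweep
        out[i] = min(out[i], ratio * out[i - 1])
    for i in range(len(out) - 2, -1, -1):     # backward sweep
        out[i] = min(out[i], ratio * out[i + 1])
    return out
```

Tighter curvature yields shorter edges. The two sweeps then keep a fine patch from jumping abruptly to coarse elements, which is the "growth rate" a per-face sizing report would expose.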

Solver stack and scheduling

A responsive multiphysics engine stands on a **matrix-free GPU solver stack** that feeds on the geometry pipeline without translation overhead. For structures and CFD alike, matrix-free FEM/FVM kernels avoid assembling large global matrices, reducing memory traffic and enabling higher arithmetic intensity—exactly what modern GPUs crave. These are stabilized by geometric or algebraic multigrid (AMG) preconditioners, with smoothers tuned per-physics; on GPUs, hybrid smoothers (e.g., Chebyshev + Jacobi) strike a balance between robustness and SIMD efficiency. Coupling strategies follow the physics: partitioned solvers remain attractive for reusing domain-specific kernels and scheduling them independently, while monolithic blocks win in stiff regimes; practical interactivity leans on partitioned schemes augmented by fixed-point accelerations like Aitken or Anderson to keep outer iterations short. The runtime should maintain persistent CUDA/Vulkan/Metal compute pipelines with command-graph reuse so that kernel dispatch overheads stay negligible, and the viewport shares the device through zero-copy interop (CUDA–GL/VK) to stream fields without serialization. A task graph with priorities ensures user input preempts background refinements; field updates propagate opportunistically when the pointer rests. The aim is a choreography, not a queue: each frame decides whether to extend a multigrid V-cycle, advance a coupling step, or deliver a visually consistent intermediate. This is how you honor the latency tiers while converging toward decision-grade answers.

- Matrix-free FEM/FVM with GPU-friendly preconditioners (geometric/AMG) for high throughput.

- Partitioned vs. monolithic coupling chosen per regime; fixed-point acceleration to cap outer iterations.

- Persistent compute pipelines and **asynchronous in-situ** rendering via CUDA–GL/VK interop.
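Here is a small sketch of "matrix-free" in practice: conjugate gradients that only ever call an operator function, applied to a 1D variable-coefficient diffusion stencil. It is plain Python standing in for a GPU kernel. To stay short, it uses a Jacobi (diagonal) preconditioner in place of the geometric or algebraic multigrid the text describes. All function names are hypothetical.

```python
import math
from typing import Callable, List, Tuple

Vec = List[float]


def make_operator(k_faces: Vec, h: float) -> Callable[[Vec], Vec]:
    """Return x -> A x for -(k u')' on a uniform 1D grid with u = 0 at both ends.

    k_faces[i] is the coefficient on the face left of unknown i, so n unknowns
    need n + 1 faces. A is never assembled; only its action is computed.
    """
    inv_h2 = 1.0 / (h * h)

    def apply(x: Vec) -> Vec:
        n = len(x)
        y = [0.0] * n
        for i in range(n):
            left = x[i - 1] if i > 0 else 0.0
            right = x[i + 1] if i < n - 1 else 0.0
            kl, kr = k_faces[i], k_faces[i + 1]
            y[i] = ((kl + kr) * x[i] - kl * left - kr * right) * inv_h2
        return y

    return apply


def dot(a: Vec, b: Vec) -> float:
    return sum(p * q for p, q in zip(a, b))


def pcg(apply_a: Callable[[Vec], Vec], b: Vec, inv_diag: Vec,
        tol: float = 1e-10, max_iter: int = 500) -> Tuple[Vec, int]:
    """Jacobi-preconditioned conjugate gradients driven only by operator calls."""
    x = [0.0] * len(b)
    b_norm = math.sqrt(dot(b, b))
    if b_norm == 0.0:
        return x, 0
    r = list(b)
    z = [d * ri for d, ri in zip(inv_diag, r)]    # z = M^-1 r with M = diag(A)
    p = list(z)
    rz = dot(r, z)
    for it in range(1, max_iter + 1):
        ap = apply_a(p)
        alpha = rz / dot(p, ap)
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * api for ri, api in zip(r, ap)]
        if math.sqrt(dot(r, r)) <= tol * b_norm:
            return x, it
        z = [d * ri for d, ri in zip(inv_diag, r)]
        rz_new = dot(r, z)
        beta = rz_new / rz
        p = [zi + beta * pi for zi, pi in zip(z, p)]
        rz = rz_new
    return x, max_iter
```

Replacing the diagonal scaling with a multigrid V-cycle would change only the lines that compute `z`. The solver itself never needs the assembled matrix, which is what keeps memory traffic low on a GPU.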

Incremental updates and state reuse

Interactivity collapses if every tweak forces a cold start. The system must treat a new edit as a perturbation of a known state and exploit that structure ruthlessly. Mesh morphing keeps node adjacencies and sparsity intact under small shape edits, while selective local remeshing with topology-change detection limits churn to the affected neighborhood. Warm-starts project previous velocity, pressure, temperature, or displacement fields onto the updated discretization, landing the solver near the new attractor. Caching dynamic sparsity patterns and prolongation operators amortizes multigrid setup costs across edits. Mixed precision—FP16/TF32 for bulk smoothing with FP32/FP64 correction—drives frames faster under an iterative-refinement guarantee, defending accuracy while buying throughput. Determinism matters for trust and V&V: record the kernel graph, seed states, and reduction orders where feasible so that a run can be replayed across devices; when non-determinism is inevitable, track bounds and certify QoIs. Provenance metadata—geometry hash, BC map, solver version, precision modes—rides alongside the result so that a decision taken today can be audited later without forensics. In practical terms, **incremental state reuse** is the difference between waiting and thinking; it turns the solver into a conversational partner that remembers what you were doing a moment ago and helps you continue the sentence rather than starting a new one each time.

- Mesh morphing and local remesh to preserve sparsity and locality under small edits.

- Field projection for warm-starts; cached multigrid hierarchies and dynamic sparsity reuse.

- Mixed-precision solves with iterative refinement; device-spanning determinism/provenance tracking.
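Mixed-precision iterative refinement can be demonstrated without a GPU by emulating binary32 rounding. In this sketch, `f32` rounds values through Python's `struct` module. The LU factorization and triangular solves run in emulated fp32, without pivoting, so the sketch assumes a diagonally dominant matrix. Residuals and updates are carried in binary64. This is the textbook refinement recipe, not the article's specific solver, and the function names are hypothetical.

```python
import struct
from typing import List

Mat = List[List[float]]
Vec = List[float]


def f32(x: float) -> float:
    """Round a binary64 Python float to the nearest binary32 value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def lu_low(a: Mat) -> Mat:
    """Doolittle LU without pivoting, every operation rounded to fp32.

    Multipliers are stored below the diagonal and U on and above it.
    """
    n = len(a)
    m = [[f32(v) for v in row] for row in a]
    for k in range(n):
        for i in range(k + 1, n):
            m[i][k] = f32(m[i][k] / m[k][k])
            for j in range(k + 1, n):
                m[i][j] = f32(m[i][j] - f32(m[i][k] * m[k][j]))
    return m


def lu_solve_low(m: Mat, b: Vec) -> Vec:
    """Forward and back substitution with the fp32 factors, also in fp32."""
    n = len(b)
    y = [f32(v) for v in b]
    for i in range(n):                     # unit lower-triangular solve
        for j in range(i):
            y[i] = f32(y[i] - f32(m[i][j] * y[j]))
    x = [0.0] * n
    for i in reversed(range(n)):           # upper-triangular solve
        s = y[i]
        for j in range(i + 1, n):
            s = f32(s - f32(m[i][j] * x[j]))
        x[i] = f32(s / m[i][i])
    return x


def residual64(a: Mat, x: Vec, b: Vec) -> Vec:
    """r = b - A x evaluated in binary64."""
    return [bi - sum(aij * xj for aij, xj in zip(row, x)) for row, bi in zip(a, b)]


def refine(a: Mat, b: Vec, iters: int = 5):
    """Cheap fp32 solves corrected by fp64 residuals; returns (x, residual history)."""
    m = lu_low(a)                          # factor once in low precision, reuse below
    x = lu_solve_low(m, b)
    history = []
    for _ in range(iters):
        r = residual64(a, x, b)
        history.append(max(abs(v) for v in r))
        d = lu_solve_low(m, r)             # correction from the low-precision factors
        x = [xi + di for xi, di in zip(x, d)]  # accumulate the update in fp64
    return x, history
```

On a small, well-conditioned system, the initial fp32 solution leaves a residual at fp32 round-off level. A couple of correction sweeps then bring it down to binary64 round-off, which is the "accuracy defended while buying throughput" trade described above.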

Scaling and deployment

Design sessions eventually touch models that dwarf a single GPU’s comfortable memory and bandwidth envelope. Multi-GPU domain decomposition with NVLink or RDMA allows strong scaling, but interactivity demands overlap: communication halos compress, streams hide latency, and coarse-grid corrections avoid becoming synchronization cliffs. Out-of-core strategies extend reach further by tiling meshes into bricks with prioritized streaming; tiles near the camera or active edit region get higher fidelity and residency, while distant tiles degrade gracefully. For massive assemblies, field-of-view-aware LODs and tiled brick storage keep navigation smooth even as background solves proceed. Deployment must adapt to reality: regulated sites need air-gapped, on-prem edge GPUs with hardened caches and predictable performance, while burst capacity to the cloud can accelerate “heavy variants” when policy allows; a latency-aware UX signals when updates will exceed interactivity budgets and invites the user to park edits or run a batch refinement. The orchestration layer understands both compute topology and user intent, assigning tasks to local or remote resources without breaking provenance chains. In this way, the same **architecture scales from laptops to clusters**, preserving the feel of immediacy while meeting enterprise security and throughput requirements.

- Multi-GPU with NVLink/RDMA, halo compression, and compute/comm overlap for smooth scaling.

- Out-of-core tiled bricks and LOD for massive assemblies without stutter.

- Edge/on-prem for air-gapped sites; cloud burst with explicit, **latency-aware UX** for heavy runs.
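The residency policy for out-of-core bricks can be sketched as a greedy plan with two passes. First, every tile gets its coarse LOD in priority order. Then the highest-priority tiles are upgraded to full fidelity until the memory budget runs out. The distance-based priority, the `edit_weight` boost for the active edit region, and the `Tile` fields are all assumptions for illustration. A real system would likely use screen-space error and bandwidth estimates instead.

```python
import math
from dataclasses import dataclass
from typing import Dict, Iterable, Tuple

Point = Tuple[float, float, float]


@dataclass(frozen=True)
class Tile:
    tile_id: str
    center: Point
    fine_bytes: int     # full-fidelity field data
    coarse_bytes: int   # degraded LOD kept for distant tiles


def priority(tile: Tile, camera: Point, edit_point: Point, edit_weight: float = 2.0) -> float:
    """Higher near the camera, with an extra boost near the active edit region."""
    near_camera = 1.0 / (1.0 + math.dist(tile.center, camera))
    near_edit = edit_weight / (1.0 + math.dist(tile.center, edit_point))
    return max(near_camera, near_edit)


def plan_residency(tiles: Iterable[Tile], camera: Point, edit_point: Point,
                   budget_bytes: int) -> Dict[str, str]:
    """Return {tile_id: "fine" | "coarse" | "evicted"} within budget_bytes."""
    ranked = sorted(tiles, key=lambda t: priority(t, camera, edit_point), reverse=True)
    plan: Dict[str, str] = {}
    used = 0
    for t in ranked:                      # pass 1: coarse LOD, best tiles first
        if used + t.coarse_bytes <= budget_bytes:
            plan[t.tile_id] = "coarse"
            used += t.coarse_bytes
        else:
            plan[t.tile_id] = "evicted"
    for t in ranked:                      # pass 2: upgrade while budget remains
        extra = t.fine_bytes - t.coarse_bytes
        if plan[t.tile_id] == "coarse" and used + extra <= budget_bytes:
            plan[t.tile_id] = "fine"
            used += extra
    return plan
```

Reserving coarse data first means distant tiles degrade instead of disappearing. Re-running the plan when the camera or edit point moves gives the field-of-view-aware behavior described above.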

Numerics and UX patterns that make interactivity trustworthy

Discretizations matched to physics and “frame budget”

Interactivity is a budget; discretizations must be chosen to spend it wisely. For internal aerothermal flows where walls dominate and compressibility is modest, lattice Boltzmann methods (LBM) on GPUs deliver excellent memory locality and regularity, enabling frame-grade updates for qualitative steering while retaining enough quantitative bite for pressure drop and recirculation trends. When boundary complexity grows—porous interfaces, rotating frames, conjugate domains—a stabilized finite-volume method (FVM) with low-dissipation limiters provides robust handling of mixed BCs and non-orthogonal grids, albeit at a slightly higher per-iteration cost. Free-surface or particulate flows benefit from SPH or MPM for topological freedom, especially in early concepting. For structures, hex-dominant, matrix-free FEM with energy–momentum conserving time integrators preserves stability under large steps, a property that pays dividends when edits interrupt solves mid-step. Thermal and electro-thermal diffusion pair naturally with algebraic multigrid; Joule and convective coupling can be staged to deliver quick temperature equilibria that inform routing and layout before detailed EM fields are necessary. The key is composability: a “preview” mode may route flow via LBM and structure via a coarse hex FEM, while a “decision” mode switches to higher-order variants or tighter tolerances without altering the user’s workflow. These choices reflect a principle: discretize not only for asymptotic accuracy, but for the rhythms of human interaction and the GPU’s strengths.

- CFD: LBM for fast steering on internal flows; stabilized FVM for complex BCs; SPH/MPM for free surfaces.

- Structures: hex-dominant, matrix-free FEM with conservative integrators for stable, large steps.

- Thermal/electro-thermal: diffusion with AMG, staged Joule/convective coupling for quick layout insights.
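To illustrate the regular, local work that makes LBM GPU-friendly, here is the standard D2Q9 BGK equilibrium and collision at a single node. It is written in lattice units with c_s² = 1/3. Streaming, boundary conditions, and the grid loop are omitted. In a GPU code, this per-node arithmetic would typically run as one thread per cell.

```python
from typing import List, Tuple

# D2Q9 lattice: discrete velocities e_i and weights w_i (lattice units, c_s^2 = 1/3).
E: List[Tuple[int, int]] = [(0, 0), (1, 0), (0, 1), (-1, 0), (0, -1),
                            (1, 1), (-1, 1), (-1, -1), (1, -1)]
W: List[float] = [4.0 / 9.0] + [1.0 / 9.0] * 4 + [1.0 / 36.0] * 4


def equilibrium(rho: float, ux: float, uy: float) -> List[float]:
    """Second-order equilibrium f_i = w_i rho (1 + 3 e.u + 4.5 (e.u)^2 - 1.5 u.u)."""
    usq = ux * ux + uy * uy
    feq = []
    for (ex, ey), w in zip(E, W):
        eu = ex * ux + ey * uy
        feq.append(w * rho * (1.0 + 3.0 * eu + 4.5 * eu * eu - 1.5 * usq))
    return feq


def moments(f: List[float]) -> Tuple[float, float, float]:
    """Density and velocity recovered from the nine populations at one node."""
    rho = sum(f)
    ux = sum(fi * ex for fi, (ex, _) in zip(f, E)) / rho
    uy = sum(fi * ey for fi, (_, ey) in zip(f, E)) / rho
    return rho, ux, uy


def bgk_collide(f: List[float], tau: float) -> List[float]:
    """Relax populations toward equilibrium with relaxation time tau."""
    rho, ux, uy = moments(f)
    feq = equilibrium(rho, ux, uy)
    return [fi - (fi - fe) / tau for fi, fe in zip(f, feq)]
```

Collision conserves density and momentum up to round-off, because the equilibrium is built from the node's own moments. The only coupling between cells is the fixed-stencil streaming step, which is why the method maps well onto GPU memory.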

Stability, coupling, and adaptivity

Responsiveness without stability breeds false confidence. Time-stepping must be CFL-aware with adaptive control to prevent overshoot when geometry tightens or materials stiffen mid-edit. Monotone fluxes and low-dissipation limiters protect against nonphysical oscillations in under-resolved previews, while embedded error estimates decide when local subcycling can hold a hotspot steady without stalling the global step. Coupling between fields benefits from staggered Gauss–Seidel sweeps with relaxation; where stiffness bites, quasi-Newton updates with residual recycling tame the outer loop without forming full Jacobians. Adaptivity is the secret engine of perceived intelligence: local h-/p-refinement follows feature edits and emerging gradients, and retracts where fields relax, keeping work proportional to need. Most importantly, the UX broadcasts solver confidence: uncertainty halos around streamlines, confidence bands on stress probes, and live residual bars communicate how close the current picture is to a stable answer. These signals are not garnish—they are operational safeguards that let users act within safe bounds during exploration while encouraging a one-click promotion to a higher-fidelity pass when needed. In short, **stability and adaptivity** convert raw performance into trustworthy interactivity.

- CFL-aware adaptive time steps and monotone fluxes to avoid nonphysical artifacts.

- Interface coupling via staggered Gauss–Seidel with relaxation; quasi-Newton residual recycling in stiff regimes.

- Automatic local h-/p-adaptivity and subcycling driven by error indicators tied to on-screen confidence cues.
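Aitken dynamic relaxation is one common way to set the relaxation factor in staggered Gauss–Seidel coupling. The sketch treats one coupled pass as a black-box map G(d) on interface displacements and updates the factor from successive residuals. The scalar test problem, G(d) = (p0 - c·d)/k, is a hypothetical stand-in for strong fluid–structure coupling. With c/k = 1.5 the unrelaxed iteration diverges, while the relaxed one converges.

```python
import math
from typing import Callable, List, Tuple

Vec = List[float]


def aitken_coupling(solve_step: Callable[[Vec], Vec], d0: Vec, omega0: float = 0.5,
                    tol: float = 1e-10, max_iter: int = 100) -> Tuple[Vec, int]:
    """Staggered fixed-point iteration d = G(d) with Aitken dynamic relaxation.

    solve_step(d) performs one Gauss-Seidel pass (for example, a fluid solve with
    the interface at d followed by a structure solve under the resulting load)
    and returns the new interface displacement G(d).
    """
    d = list(d0)
    r_prev: Vec = []
    omega = omega0
    for it in range(1, max_iter + 1):
        g = solve_step(d)
        r = [gi - di for gi, di in zip(g, d)]           # interface residual
        if math.sqrt(sum(v * v for v in r)) < tol:
            return d, it
        if r_prev:
            dr = [a - b for a, b in zip(r, r_prev)]
            denom = sum(v * v for v in dr)
            if denom > 0.0:
                # Aitken: omega_k = -omega_{k-1} * (r_{k-1} . dr) / |dr|^2
                omega = -omega * sum(a * b for a, b in zip(r_prev, dr)) / denom
        d = [di + omega * ri for di, ri in zip(d, r)]  # relaxed update
        r_prev = r
    return d, max_iter
```

For a linear scalar map, the second Aitken factor equals the exact secant step, so the test problem lands on d = 0.4 in three passes. On nonlinear FSI, convergence is usually faster than with a fixed relaxation factor but not this abrupt.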

Speed with guarantees

Designers deserve fast answers with explicit escape hatches to truth. Hyper-reduced models—DEIM for nonlinear terms or global nonlinear least-squares (gnNLS) projections—generate live previews that respect conservation and dominant modes at a fraction of cost. When the pointer rests, the solver can opportunistically climb back to full order, reconciling any drift. Online-trained surrogates and physics-informed neural networks (PINNs) can join the party, but only if they are gated by **residual and energy checks**; when they fail, the system must auto-fallback to ground-truth numerics without drama. Real-time adjoints supply sensitivities that power gradient overlays, slider what-ifs, and design-space heatmaps—features that turn color maps into decisions. Guarantees emerge from contracts: each mode declares QoI tolerances (e.g., pressure drop within 5%, max von Mises within 8%) and shows a pass/fail badge next to the field; promotion to stricter modes is one click. Iterative refinement in mixed precision locks in correctness while the GPU enjoys lower-bit throughput. The result is momentum without myths: speed when you explore, rigor when you commit, and a continuous path between them.

- Hyper-reduced previews (DEIM/gnNLS) that elevate to full-order solves on idle.

- Surrogates/PINNs admitted only under residual/energy gates with automatic fallbacks.

- Real-time adjoints for sensitivities, fueling gradient overlays and interactive “what-ifs.”
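The gating rule for surrogates fits in a few lines. Evaluate the candidate with the full-order operator and accept it only if the relative residual passes a threshold; otherwise, rerun with the full solver. This sketch covers only the residual gate; an energy check would be a second test of the same shape. The `surrogate` and `full_solver` callables, the function names, and the default threshold are placeholders.

```python
import math
from typing import Callable, List, Tuple

Vec = List[float]


def rel_residual(apply_a: Callable[[Vec], Vec], x: Vec, b: Vec) -> float:
    """||b - A x|| / ||b||, computed with the full-order operator."""
    r = [bi - yi for bi, yi in zip(b, apply_a(x))]
    b_norm = math.sqrt(sum(v * v for v in b))
    return math.sqrt(sum(v * v for v in r)) / (b_norm if b_norm > 0.0 else 1.0)


def gated_solve(apply_a: Callable[[Vec], Vec], b: Vec,
                surrogate: Callable[[Vec], Vec], full_solver: Callable[[Vec], Vec],
                gate: float = 1e-3) -> Tuple[Vec, str, float]:
    """Admit a surrogate answer only if it passes the residual gate.

    Returns (solution, source, relative_residual), where source is
    "surrogate" or "full". A failed gate falls back to the full solver.
    """
    x = surrogate(b)
    rel = rel_residual(apply_a, x, b)
    if rel <= gate:
        return x, "surrogate", rel
    x = full_solver(b)
    return x, "full", rel_residual(apply_a, x, b)
```

The check costs one operator application, far less than a solve. A small residual bounds the error only up to the conditioning of the operator, so gate thresholds would need to be chosen per physics.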

Viewport and workflow design

Interactivity is ultimately judged in the viewport where hands, eyes, and equations meet. Accuracy dials—draft, preview, decision—must advertise explicit QoI tolerances and current residuals, not just vague labels. Overlays should layer streamlines, isotherms, and stress/strain fields with **uncertainty halos**, while probe widgets report values plus confidence intervals. Constraint warnings (factor of safety dips, ΔT spikes, pressure margin breaches) appear as live callouts that can auto-pause solvers on instability, preserving the last trustworthy state. Designers should be able to pin comparisons—before/after edits, variant A/B—while the system reuses states to make toggling instantaneous. Workflow affordances complete the loop: parametric sliders bind to adjoint-backed gradients, sketch dimensions update QoIs in-line, and a small HUD shows the latency tier currently in play. V&V is built in: a benchmark gallery can run in-session to validate kernels post-update; regression snapshots capture geometry, meshes, solver settings, and hashes into PLM-stamped bundles. None of this is spectacle—it is scaffolding that lets teams make decisions they can defend. When **UX communicates accuracy, not just color**, multiphysics becomes a daily tool, not a special occasion.

- Mode dials with explicit QoI tolerances and residuals; visible uncertainty cues on overlays.

- Live constraint warnings with auto-pause to protect trust boundaries.

- Built-in V&V: on-demand benchmarks, regression bundles, and PLM provenance baked into saves.
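Mode dials and auto-pause reduce to two small pieces of logic. The first compares estimated QoI errors against the active mode's tolerances. The second watches the residual history for sustained growth. In the sketch below, the `decision` tolerances reuse the example contract from the previous section (pressure drop within 5%, max von Mises within 8%). The other modes' values, the three-step growth window, and all names are hypothetical.

```python
from typing import Dict, List, Optional

# Hypothetical per-mode QoI tolerances (relative error). The "decision" row
# reuses the example contract from the text; the other rows are made up.
MODE_TOLERANCES: Dict[str, Dict[str, float]] = {
    "draft":    {"pressure_drop": 0.25, "max_von_mises": 0.30},
    "preview":  {"pressure_drop": 0.10, "max_von_mises": 0.15},
    "decision": {"pressure_drop": 0.05, "max_von_mises": 0.08},
}


def badges(mode: str, rel_error_estimates: Dict[str, float]) -> Dict[str, str]:
    """Pass/fail badge per QoI: pass when the estimated error is within tolerance."""
    return {q: "pass" if rel_error_estimates[q] <= tol else "fail"
            for q, tol in MODE_TOLERANCES[mode].items()}


class InstabilityWatchdog:
    """Auto-pause after `window` consecutive residual increases.

    Keeps the state with the lowest residual seen so far as the last
    trustworthy state to restore once paused.
    """

    def __init__(self, window: int = 3) -> None:
        self.window = window
        self.history: List[float] = []
        self.last_good_state: Optional[object] = None
        self.paused = False

    def observe(self, residual: float, state: object) -> bool:
        """Record one solver step; return True once the solver should pause."""
        if self.paused:
            return True
        if not self.history or residual <= min(self.history):
            self.last_good_state = state
        self.history.append(residual)
        recent = self.history[-(self.window + 1):]
        if len(recent) == self.window + 1 and all(b > a for a, b in zip(recent, recent[1:])):
            self.paused = True
        return self.paused
```

The same error estimates can pass in `preview` and fail in `decision`. That is the point of showing the badge next to the field instead of a single "converged" label.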

Conclusion

From idea to validated decision in one sitting

When GPU multiphysics runs inside CAD, the loop from notion to decision compresses to the space of a conversation. Edits stop being I/O events and become hypotheses; fields stop being reports and become feedback. The winning stack fuses direct geometry-to-analysis mappings that respect feature semantics, **matrix-free GPU solvers with robust preconditioning**, and incremental state reuse so each interaction builds on the last. UX ceases to be an afterthought and becomes the carrier of guarantees: residuals, tolerances, and uncertainty move from the appendix to the header. This changes organizational tempo. Concept reviews incorporate quantified risk and opportunity in the same session, and iteration budgets shift from days to minutes. Trust grows because provenance is automatic and reproducible, not hand-assembled. The cultural shift is perhaps the most important: simulation graduates from a gating ceremony to a design instrument, an always-on companion that rewards curiosity with clarity. Teams spend their time deciding, not waiting, because the system tells them plainly what is known, how well it is known, and what one more click will buy. In that environment, creativity and safety margins reinforce each other: broader searches find better compromises sooner, and guardrails keep enthusiasm inside verified bounds.

- Design becomes steering: hypotheses tested at interaction speed with field-aware feedback.

- Provenance and V&V stay attached to the model, enabling auditable decisions on the spot.

- Latency tiers, state reuse, and accurate overlays preserve both flow and rigor.

Critical investments and the north star

The road ahead is less about raw FLOPS and more about standardization, openness, and trustworthy acceleration. First, standardized semantic links between CAD features, BCs, and solver inputs must mature—think STEP/AP extensions that carry analysis intent and IGA-ready descriptors so geometries remain faithful across the pipeline. Second, open, device-agnostic solver backends with reproducibility tooling can decouple physics kernels from hardware churn, easing certification and long-term maintenance; deterministic modes and variance-bounded reductions should be first-class features, not hidden flags. Third, interactive adjoints and error-bounded surrogates are the lever arms that scale exploration without sacrificing trust: they turn gradients into guidance and approximations into accountable speedups. Security and data residency remain evergreen: edge/on-prem by default with encrypted state and explicit, user-visible cloud bursts when policy permits. The **north star** is simple to say and demanding to meet: results you can sign off on during the design session—auditable, performant, and secure. When the stack reaches that bar, the distinction between “CAD time” and “simulation time” dissolves. There is only design time, and every second advances both shape and certainty. That is how teams stop negotiating with their tools and start conversing with their products while they are still malleable.

- Standard semantic mappings (STEP/AP, IGA-ready) to keep intent intact from feature to field.

- Open, portable solver backends with reproducibility baked-in for certification-grade runs.

- Interactive adjoints and error-bounded surrogates to amplify exploration responsibly.

- Security-first deployment that preserves **data residency** while enabling elastic throughput.
