Design Software History: From Repeatable Drawings to Executable Specifications: A History of CAD, MBD, and PMI

March 03, 2026 11 min read

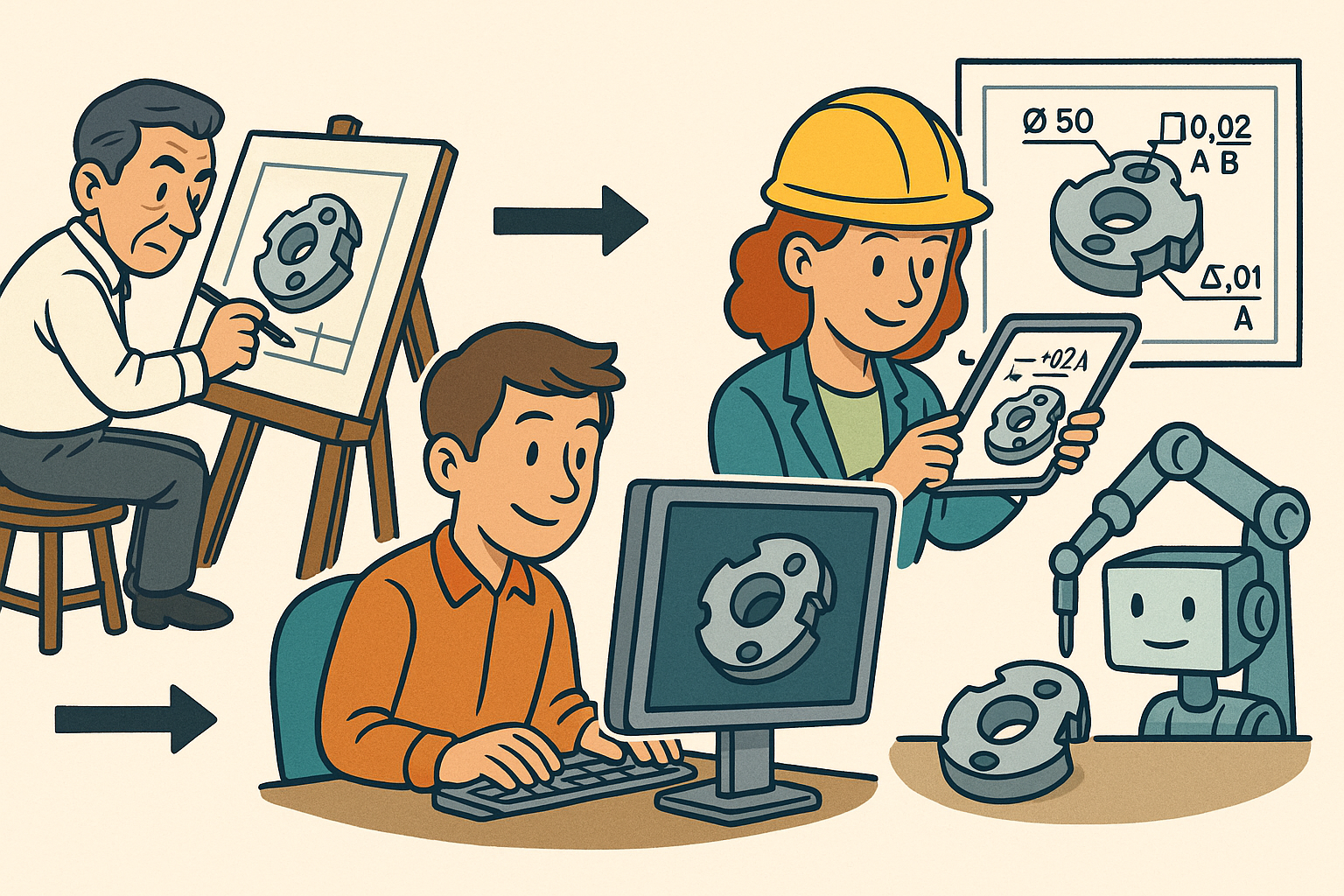

Origins and early automation of notes and dimensions (manual to 2D CAD)

Drafting culture and standards established the baseline

Before computers touched the drawing board, industrial documentation was governed by a disciplined culture of lettering, layout, and conventions that ensured legibility across factories and continents. Title blocks were not decorative; they encoded the provenance of a design, its change history, materials, and inspection authorities. Shop-floor clarity depended on consistent notation of datums, finishes, and tolerances guided by company drafting manuals that transposed national and international norms into house style. Above all, ASME Y14.5 codified geometric dimensioning and tolerancing so that intent survived reproduction, scaling, and interpretation by different stakeholders. Veteran checkers policed that culture, wielding red pencils to enforce symmetry between what a designer meant and what a vendor would machine. In this world, “intelligence” lived in human practice and printed pages; any automation had to begin by mirroring those disciplined habits.

When early 2D CAD arrived, it walked into a universe already organized by standards and expectations. The draftsman’s kit—T-squares, triangles, French curves—translated into digital line, arc, and spline commands. Yet the central currency stayed the same: notes, leaders, and dimensions communicated intent. The earliest CAD installations were judged by whether plotted drawings matched the house style and whether checkers could still navigate a print as confidently as vellum. That is why the first wave of digitization focused less on “intelligence” and more on reproducibility: if the title block, general notes, and tolerances looked right every time, trust in the screen would follow. The baseline was therefore cultural and standard-driven long before it was algorithmic—an essential precondition for every automation that followed.

From digitizers to drafting terminals: 2D CAD captures notation, not intent

By the early 1980s, software like Autodesk’s AutoCAD (1982), Lockheed-born CADAM (later IBM/Dassault), Computervision CADDS, and Intergraph systems brought drawings to graphics displays and pen plotters. They digitized drafting but left cognition to the operator. Dimensions were geometry paired with text; leaders were polylines; and notes were dumb strings. Associativity, if it existed at all, was fragile, often limited to dimension objects remembering which endpoints they spanned. When a hole moved, the user was expected to re-stretch leaders, update numerical values, and reflow text. The machine simply preserved pixels and vectors; the humans preserved meaning.

Still, powerful conventions took shape that seeded automation. The “block” metaphor let teams encapsulate symbols and title blocks into reusable cells. Layers substituted for pencil grades and overlay sheets, separating geometry, annotations, centerlines, and construction aids. Plotting pipelines matured as pen-plotter setup files mapped colors to pen widths and drafters standardized text styles to match blueprint legibility. Even in this non-associative world, teams engineered predictability. If a shop trusted that the 0.35 mm pen corresponded to layer COLOR 2 for visible edges and that every title block attribute aligned with a property list, they were already halfway to programmable documentation—even if the documentation lived in a DWG, IGDS, or CADDS file rather than a binder.

Repeatability breakthroughs: blocks, attributes, and plotting discipline

Repeatability is the quiet power that turned 2D CAD into a documentation machine. Block libraries operationalized standards: the same weld symbol, surface finish triangle, or revision triangle appeared everywhere with identical proportions. Critically, attribute tags embedded in blocks made fields like part number, revision, drawn-by, and material machine-addressable. AutoCAD’s ATTDEF/ATTEDIT paired with INSERT meant a title block could be both a graphic and a structured container. Intergraph and CADDS users saw similar advantages in cell libraries and parameterized symbols. As printers replaced pen plotters, plot style tables (color-dependent CTB and named STB) preserved the mapping of lineweights and colors; device profiles and paper sizes standardized output across offices.

Organizations exploited these affordances to reduce variance and speed release:

- Template drawings with preloaded layers/linetypes and viewports ensured consistent scales, view arrangements, and text heights.

- Standardized text styles (fonts, heights, widths) aligned with readability and ASME legibility guidance, minimizing checker objections.

- Plot configurations and pen mappings locked down output so a drawing looked the same from Boston to Bangalore.

- Title-block attributes primed future PDM hooks, enabling scripts to synchronize metadata with nascent databases.

These were not yet “smart drawings,” but they were repeatable drawings. Once the contents of a title block could be updated en masse and a notes block could be swapped across hundreds of sheets without manual redrafting, the door to real automation opened. The shop still read a plotted sheet, but the office began to read and write the sheet through scripts.

Scripting and early PDM: from macros to metadata pipelines

The first wave of intelligence arrived as scripting and macros. AutoCAD’s AutoLISP evolved from a convenience into an automation framework; VBA and later ObjectARX/ActiveX exposed entities and attributes programmatically. Unigraphics introduced GRIP and UG/Open so power users could batch-create notes, propagate dimension styles, and assemble parts lists from geometry and text. CATIA V4 offered macro recording and CAA customization that let OEMs pack company-specific symbols and callouts behind menu items. Common shop-floor chores—hole callouts keyed to drill charts, material notes keyed to stock codes, and revision stamps that pulled user/time data—graduated from repetitive handwork to one-click routines.

At the same time, proto-PDM hooks emerged. CAD managers wired title-block attributes to external databases, first through CSV exports and later through ODBC/SQL links. In AutoCAD, attribute extraction and data links meant fields like part number, ECO, and material could be round-tripped between drawings and bill-of-materials systems. Intergraph and Computervision sites used bespoke scripts to tag drawings with project codes, effectivity, and release status. The result was a foreshadowing of model-driven notes: while 2D entities remained dumb, the metadata tied to them began to live in databases. That metadata would soon find a richer host—the 3D model itself—where associativity could be enforced by geometry rather than by policy.
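The round trip described above can be illustrated with a minimal Python sketch. It is not AutoCAD's attribute-extraction format or API: the tag names (`PART_NO`, `REV`, `MATERIAL`), the drawing names, and both helper functions are hypothetical, and the drawings are modeled as plain dictionaries rather than real DWG files.

```python
import csv
import io

# Hypothetical title-block records: one dict of attribute tag -> value per drawing.
drawings = {
    "DWG-001": {"PART_NO": "P-100", "REV": "A", "MATERIAL": "AL 6061"},
    "DWG-002": {"PART_NO": "P-200", "REV": "B", "MATERIAL": "STEEL 1018"},
}

FIELDS = ["DRAWING", "PART_NO", "REV", "MATERIAL"]


def export_attributes(drawings):
    """Write every drawing's title-block attributes to CSV text."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    for name, attrs in sorted(drawings.items()):
        writer.writerow({"DRAWING": name, **attrs})
    return buffer.getvalue()


def import_attributes(drawings, csv_text):
    """Apply edited CSV rows back onto the drawings; return the changes made."""
    changes = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        attrs = drawings[row["DRAWING"]]
        for tag in FIELDS[1:]:
            if attrs[tag] != row[tag]:
                changes.append((row["DRAWING"], tag, attrs[tag], row[tag]))
                attrs[tag] = row[tag]
    return changes


exported = export_attributes(drawings)
# Simulate an external BOM system bumping the revision of P-200.
edited = exported.replace("P-200,B", "P-200,C")
changes = import_attributes(drawings, edited)
print(changes)  # [('DWG-002', 'REV', 'B', 'C')]
```

Returning the list of changes, rather than silently overwriting values, mirrors the audit trail a CAD manager would want before letting a database write into released sheets.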

Parametrics, associativity, and drawing generation from 3D models

Pro/ENGINEER makes geometry and annotations co-evolve

In 1988, Samuel Geisberg and the team at PTC launched Pro/ENGINEER, fusing feature-based, parametric solid modeling with a drafting system that treated drawings as dependent views of a master model. This was the watershed for annotations: dimensions on the drawing could be driven or driving and, crucially, they were associative to the model’s features. A change in the model’s sketch dimension propagated to the drawing, and edits to a shown dimension (within rules) drove the model. Automatic hidden-line removal and standards-compliant projection views materialized without redrafting. Balloons and BOMs read assembly structure directly; renumbering parts updated balloons and tables consistently. For the first time, “intelligence” migrated from text objects to the parametric skeleton of a product.
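A toy Python sketch can make the driven/driving distinction concrete. It does not reflect Pro/ENGINEER's internals; the `Model` and `DrawingDimension` classes and the parameter names are invented for illustration. The point it shows is that a drawing dimension holds a reference to a model parameter rather than a copy of its value.

```python
from dataclasses import dataclass


@dataclass
class Model:
    """Toy parametric model: named parameters stand in for feature dimensions."""
    params: dict


@dataclass
class DrawingDimension:
    """A drawing dimension that references a model parameter instead of storing text."""
    model: Model
    param: str
    driving: bool = False  # only driving dimensions may push edits back to the model

    @property
    def value(self):
        # Always read through to the model, so the drawing cannot go stale.
        return self.model.params[self.param]

    def edit(self, new_value):
        if not self.driving:
            raise PermissionError(f"{self.param} is a driven (read-only) dimension")
        self.model.params[self.param] = new_value


bracket = Model(params={"hole_dia": 6.0, "plate_len": 80.0})
shown_dia = DrawingDimension(bracket, "hole_dia", driving=True)
shown_len = DrawingDimension(bracket, "plate_len")

bracket.params["plate_len"] = 95.0  # a model change appears on the drawing
shown_dia.edit(8.0)                 # a drawing edit drives the model
print(shown_len.value, bracket.params["hole_dia"])  # 95.0 8.0
```

Contrast this with the 2D-era dimension, which would have stored `"80.0"` as text and required a human to notice that it was out of date.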

Pro/ENGINEER also professionalized template-driven documentation. Model items—dimensions, GD&T symbols tied to features, and notes—could be “shown” in drawings rather than redrawn, preserving a single source of definition. Families of parts using family tables shared drawing formats and views, turning the drafting room into a publishing pipeline. The division of labor shifted: designers encoded intent in sketches, features, and relations; drawing creators curated views, added presentation-only notes, and verified that design intent communicated clearly. The implication was radical but simple: the 3D model became the reference; the drawing was a graphically faithful derivative.

CATIA V5, Unigraphics/NX, and SolidWorks mainstream the single source

As the 1990s closed, Dassault Systèmes with CATIA V5 (shepherded strategically by Francis Bernard and later Bernard Charlès), UGS with Unigraphics/NX (under leaders such as Tony Affuso and Chuck Grindstaff), and SolidWorks (founded by Jon Hirschtick) carried associativity into the mainstream. The pattern repeated: drawings were generated from models whose features embodied intent. Automated drafting matured through:

- View-creation rules that auto-placed standard orthographic, section, and detail views with correct scales.

- Reuse of model items so tolerances, patterns, and dimensions authored once traveled to drawings without duplication.

- Feature-driven callouts: hole and thread notes pulled from Hole Wizard (SolidWorks) or feature parameters (CATIA/NX/Creo) for consistent wording.

- Template-based notes and design tables (Excel-driven in SolidWorks, expressions in NX, relations/family tables in Pro/E) that standardized language across configurations and variants.

Managers seized on the productivity and quality gains. Checkers could focus on content rather than arithmetic correctness; if a dimension value derived from the model, transcription errors vanished. BOMs became live views of assemblies, deeply tied to part properties. Even multi-sheet drawings benefited, as title-block fields updated from referenced model properties. With the single source of truth now the solid model, drawings served their audience (suppliers, inspectors, technicians) without becoming a semantic fork of the design.

Early GD&T helpers and PLM-populated metadata reduce clerical work

As geometric modeling matured, vendors layered GD&T support into drafting. Symbol palettes ensured standard-compliant frames; partial semantic checks flagged impossible or incomplete tolerances. Third-party analysis, especially Sigmetrix CETOL 6σ, pressured MCAD vendors to keep symbols and datum strategies computable. PTC, Siemens, and Dassault integrated advisors—later formalized as GD&T Advisor by Sigmetrix—to guide creation of syntactically sound callouts. While still 2D-centric, the drafting environment became a front end to tolerance analysis and stack-up simulation, closing a loop that once required rekeying tolerances into analysis tools.

At the same time, PDM evolved into enterprise PLM. PTC Windchill, Siemens Teamcenter, and Dassault ENOVIA (including SmarTeam) programmatically populated title blocks, revision tables, and release stamps. Metadata governance moved from macros to workflows: upon change approval, systems wrote effectivity, approvers, and ECO identifiers into drawing fields. The mechanics varied—embedded parameters in Creo, attributes in NX, text fields in CATIA—but the philosophy unified: humans authored design intent; systems propagated context and control. This alignment eliminated error-prone clerical tasks and made documentation reflect the authoritative state of the product record. It also prepared organizations to leap from 2D presentation to 3D semantic annotation, where the annotations themselves would live natively with the geometry rather than on derived sheets.

Model-Based Definition and PMI: standardization, tooling, and extraction

Standards make annotations machine-readable across ecosystems

Model-Based Definition (MBD) reframes documentation: the 3D model, enriched with Product and Manufacturing Information (PMI), becomes the authority while 2D drawings, if produced, are visual derivatives. This leap required standards that carried annotations as data, not just pictures. ASME Y14.41 (2003 and updates) and ISO 16792 defined digital product definition practices—how to structure 3D views, annotation planes, and display states so humans and software could agree on what was being specified. In parallel, ASME Y14.5 evolved (1994/2009/2018) to tighten semantics for digital consumption, clarifying datum feature references, pattern-of-features, and profile controls so a tolerance frame could be parsed and reasoned about by software.

For interoperability, STEP AP242 emerged as the flagship: it consolidated AP203/214 lineages and added semantic PMI with feature and presentation associations. Siemens’ JT format advanced lightweight viewing with PMI, and the QIF standard from DMSC provided a schema for product definition, measurement plans, results, and statistics—crucial for closing the quality loop. For accessibility, 3D PDF packages using PRC/U3D broadened the reach of MBD to suppliers without native CAD. The center of gravity shifted from layers and text styles to ontologies and schemas; the critical question became not “Can I see the callout?” but “Can my downstream system understand the callout without retyping?” These standards answered in the affirmative and made the digital thread realistic rather than rhetorical.

Native PMI authoring and validation tools professionalize MBD

Major MCAD platforms infused their cores with PMI authoring. CATIA FTA (Functional Tolerancing & Annotation), Siemens NX PMI, PTC Creo MBD, SolidWorks MBD, and Autodesk’s Inventor/Fusion MBD offerings let users attach semantic tolerances, surface finish, weld symbols, notes, and view states to faces, edges, and features. The key is that PMI is not painted on; it is associated to geometric items such that changes to features update annotations. Users curate “combination states” to present intent clearly: an assembly datum scheme in one view, machining tolerances in another, inspection-centric views for CMM programming.

A parallel ecosystem of validation and translation vendors emerged to guarantee integrity across system boundaries. Elysium (ASFALIS), ITI CADIQ, Capvidia, PROSTEP, and Anark built checkers that verify semantic equivalence between native and neutral representations: are GD&T frames maintained? Are associations preserved? Are presentation states correct? These tools also produce robust 3D PDFs and viewer packages with traceable validation reports. The effect is industrial discipline: a release package is no longer just visuals; it is a data contract whose conformance can be tested automatically. That assurance has been essential to win over suppliers, auditors, and quality engineers who must trust that what they see matches what the model encodes.
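The questions such checkers ask can be sketched in a few lines of Python. This is not how any of the named products work internally; the dictionary layout, the PMI identifiers (`FCF1`, `FCF2`), and the `compare_pmi` function are assumptions chosen to show the idea of comparing semantic content rather than pixels.

```python
def compare_pmi(native, neutral):
    """Report PMI items that are missing, altered, or extra after translation."""
    issues = []
    for pmi_id, item in native.items():
        if pmi_id not in neutral:
            issues.append((pmi_id, "missing in neutral file"))
            continue
        other = neutral[pmi_id]
        # Compare every field present on either side, including face associations.
        changed = sorted(k for k in item.keys() | other.keys()
                         if item.get(k) != other.get(k))
        if changed:
            issues.append((pmi_id, "changed: " + ", ".join(changed)))
    for pmi_id in sorted(neutral.keys() - native.keys()):
        issues.append((pmi_id, "not present in native model"))
    return issues


# Hypothetical PMI extracted from a native model and from its neutral export.
native = {
    "FCF1": {"type": "position", "tol": 0.1, "datums": ("A", "B", "C"), "faces": ("F12",)},
    "FCF2": {"type": "flatness", "tol": 0.05, "datums": (), "faces": ("F3",)},
}
neutral = {
    "FCF1": {"type": "position", "tol": 0.1, "datums": ("A", "B"), "faces": ("F12",)},
}

issues = compare_pmi(native, neutral)
print(issues)  # [('FCF1', 'changed: datums'), ('FCF2', 'missing in neutral file')]
```

A dropped tertiary datum like the one flagged here would look identical in a screenshot if the graphics were preserved, which is why a semantic comparison is needed in addition to a visual one.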

From PMI to automation: CAM, CMM, and closed-loop quality

Once PMI is semantic, downstream tools become consumers rather than typists. CAM systems can read tolerances, datums, and feature definitions to propose machining strategies and select operations with guardrails. Inspection planning benefits even more dramatically. Using STEP AP242 or QIF-encoded models, tools such as Hexagon PC-DMIS and Zeiss CALYPSO can auto-suggest probing paths, select alignment strategies consistent with the datum scheme, and generate measurement characteristics aligned to GD&T callouts.

Rule-based engines map feature/control frames to inspection actions:

- Position tolerances on hole patterns yield multi-point probing with statistical sampling per pattern rules.

- Profile controls on sculpted surfaces trigger dense scanning or structured-light capture with defined point densities.

- Flatness/parallelism on prismatic faces map to three-point or grid sampling depending on feature size and risk level.
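The three rules listed above can be written as a small rule table in Python. This is a sketch only: the `Characteristic` fields, the action strings, and the 100 mm size threshold are placeholders invented for illustration, not values from any standard or from PC-DMIS or CALYPSO.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Characteristic:
    """Simplified stand-in for one semantic PMI callout."""
    control: str       # e.g. "position", "profile", "flatness"
    feature_type: str  # e.g. "hole_pattern", "freeform_surface", "planar_face"
    size_mm: float     # characteristic feature size (assumed field)


def plan_inspection(c: Characteristic) -> str:
    """Return an inspection action for a characteristic (illustrative rules only)."""
    if c.control == "position" and c.feature_type == "hole_pattern":
        return "multi-point probing per hole"
    if c.control == "profile" and c.feature_type == "freeform_surface":
        return "dense scan"
    if c.control in ("flatness", "parallelism") and c.feature_type == "planar_face":
        # The 100 mm threshold is an arbitrary placeholder.
        return "grid sampling" if c.size_mm > 100.0 else "three-point sampling"
    return "manual review"  # anything the rules do not cover goes to a person


callouts = [
    Characteristic("position", "hole_pattern", 12.0),
    Characteristic("profile", "freeform_surface", 250.0),
    Characteristic("flatness", "planar_face", 40.0),
    Characteristic("runout", "shaft", 20.0),
]
plan = [plan_inspection(c) for c in callouts]
for c, action in zip(callouts, plan):
    print(f"{c.control:10s} -> {action}")
```

The explicit fallback to manual review matters: a rule engine that guesses for unrecognized callouts would undermine the trust that semantic PMI is meant to build.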

The payoff compounds when results flow back through QIF Results to SPC systems like q-DAS or InfinityQS. Nonconformances inform process adjustments; risk-weighted tolerances can shift sampling strategies midstream. Instead of a drawing being a dead-end deliverable, the model becomes executable: it instructs machines how to cut and measure, and it informs systems how to learn. None of this is possible without unambiguous, structured PMI—exactly what the standards and MBD authoring tools were built to provide.

Industrialization and mandates meet real-world constraints

Aerospace and defense led the charge. Boeing and Airbus drove supply chains toward MBE (Model-Based Enterprise), aligning with guidance such as MIL-STD-31000 where the model with semantic PMI is the authority and drawings become optional, presentation-focused derivatives. Mandates accelerated investment: Tier-1 and Tier-2 suppliers adopted viewers, translators, and validation to ensure fidelity. Procurement, quality, and manufacturing systems were wired to expect data, not retyped notes. The upside was enormous: fewer interpretation errors, faster first-article inspections, and a clearer audit trail from requirement to measurement.

Yet challenges remain that practitioners confront daily:

- Graphical vs. semantic PMI parity: visual richness in native CAD can outpace what neutral formats encode; teams must police gaps.

- Cross-vendor fidelity: subtle differences in datum target semantics or pattern definitions can creep in during translation.

- Organizational change: checkers, machinists, and inspectors need role-specific training to read 3D intent effectively.

- Supplier readiness: not every shop has CMMs or viewers tuned for semantic PMI; phased adoption and dual deliverables may be necessary.

- Human readability in 3D: clarity demands disciplined view states, filters, and simplified representations to prevent annotation soup.

Industrialization has thus been a balancing act: push the model to be the single source while supporting a pragmatic ramp that respects real constraints. The destination is clear and increasingly standardized; the route, however, is as much about governance and training as it is about file formats and features.

Conclusion

From repeatable drawings to executable specifications

The trajectory of engineering documentation runs in a straight, if hard-won, line. Manual drafting culture and ASME Y14.5 gave us a shared language; early 2D CAD replicated that language with better repeatability through blocks, layers, text styles, and plotting discipline. Parametrics, led by Pro/ENGINEER and generalized by CATIA V5, Unigraphics/NX, and SolidWorks, moved intent into the model and made drawings associative derivatives. The present era pushes further: Model-Based Definition with semantic PMI turns documentation into computable data that travels across PLM, CAM, CMM, and SPC without retyping. Standards such as ASME Y14.41, ISO 16792, STEP AP242, JT, and QIF—combined with platform capabilities from PTC, Siemens, Dassault, Autodesk, and their validation partners—made this evolution durable.

The hinge is extraction: once annotations are reliable and semantic, downstream tools stop being stenographers and start being collaborators. CAM derives strategies that honor tolerances; CMM planning reflects datum logic; quality analytics closes the loop with measured evidence. The result is an end-to-end digital thread where documentation is not a terminal artifact but an active participant in design, manufacture, and verification. We have moved from neat drawings to executable specifications—and that changes who does what work, where errors can hide, and how fast organizations can improve.

What’s next: conformance, AI assistance, and pervasive consumption

Three frontiers will define the next decade. First, tighter conformance testing will shrink gaps between native and neutral semantics: certification suites for PMI akin to geometric kernel benchmarks will let vendors prove fidelity and let enterprises trust translations by default. Second, AI-assisted PMI authoring and checks will act like copilots: suggesting optimal datum schemes, flagging ambiguous callouts, aligning tolerances to manufacturing capability, and auto-generating inspection characteristics with risk-aware sampling. This is not about replacing expertise; it is about amplifying it and catching inconsistencies before release. Third, live PLM governance will turn documentation rules into executable policies: change objects that fail annotation-coverage checks, leave surfaces untoleranced, or carry nonconforming weld symbols will be blocked at workflow gates, just as missing part properties are today.
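What an executable policy at a workflow gate might look like can be sketched in Python. No PLM system's API is being shown here: the shape of the `change` dictionary, the policy functions, and the required property names are all hypothetical.

```python
def uncovered_faces(change):
    """Policy: every face must be referenced by at least one annotation."""
    covered = {face for ann in change["annotations"] for face in ann["faces"]}
    missing = sorted(set(change["faces"]) - covered)
    return [f"face {f} has no tolerance" for f in missing]


def missing_properties(change):
    """Policy: required part properties must be filled in."""
    return [f"property {p} is empty" for p in ("part_no", "material")
            if not change["properties"].get(p)]


POLICIES = [uncovered_faces, missing_properties]


def gate(change):
    """Run all policies; the change passes only if none reports a violation."""
    violations = [msg for policy in POLICIES for msg in policy(change)]
    return (len(violations) == 0, violations)


# Hypothetical change object submitted for release.
change = {
    "faces": ["F1", "F2", "F3"],
    "annotations": [
        {"kind": "flatness", "faces": ["F1"]},
        {"kind": "profile", "faces": ["F2"]},
    ],
    "properties": {"part_no": "P-100", "material": ""},
}

approved, violations = gate(change)
print(approved, violations)
# False ['face F3 has no tolerance', 'property material is empty']
```

Keeping each rule as a separate function means a new documentation rule becomes one more entry in the policy list, reviewed and versioned like any other code.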

On the consumption side, expect ubiquity. Lightweight viewers with verified STEP AP242, JT, and QIF support will be as common on the shop floor as PDFs once were, and suppliers will treat semantic PMI as table stakes. Integration patterns will harden so procurement, costing, and even automated quoting engines can read tolerances and finishes to propose price and lead-time with less human mediation. When this matures, documentation’s role shifts once more: from a deliverable that describes what to make, to a machine-readable contract that coordinates how it is made, measured, and improved. That destination fulfills the promise glimpsed in the first block attributes and macros decades ago: encode intent once, reuse it everywhere, and let the system carry the clerical burden so experts can focus on engineering.
