Design Software History: From PRONTO to ANVIL: Hanratty, Portability, and the Commercial Architecture of CAD/CAM

April 14, 2026 13 min read

Why this story matters

Re-centering the narrative on manufacturing, NC, and portability

Strip away the dazzling raster demos and workstation showroom theatrics, and the heart of computer-aided design is deeply machinist: numerical control (NC), process planning, and the unglamorous discipline of getting toolpaths and drawings to talk to shop-floor machines without drama. The most formative decades of CAD/CAM were animated by this reality. Graphics were an enabler, but the gravitational pull came from cutters, feed rates, fixture offsets, and the downstream contracts that demanded repeatable parts. Early pioneers figured out that the true bottleneck was less the act of drawing a spline on a screen than expressing manufacturing intent in software that could survive the hostile diversity of machine tools, controllers, and minicomputers. Portability—once a dull-sounding property—was a strategic weapon. Code that compiled on DEC, Prime, Data General, HP, and IBM iron could move with customers as their procurement cycles, vendors, and budgets shifted. That same portability had a second-order effect: it encouraged componentization and licensing, because code untethered from any single machine was, by nature, more tradeable and integrable.

When we re-center the origin story here, we see how early NC languages such as APT and PRONTO didn’t just automate machining; they normalized the idea that geometry and manufacturing were two sides of the same coin. In that light, the field’s decisive moves were architectural: portable languages (notably Fortran), stable data abstractions, and interfaces that respected the messy realities of production. The icons of interactive graphics mattered, but the quiet triumphs were often in compilers, I/O, and neutral formats that made shop communication robust.

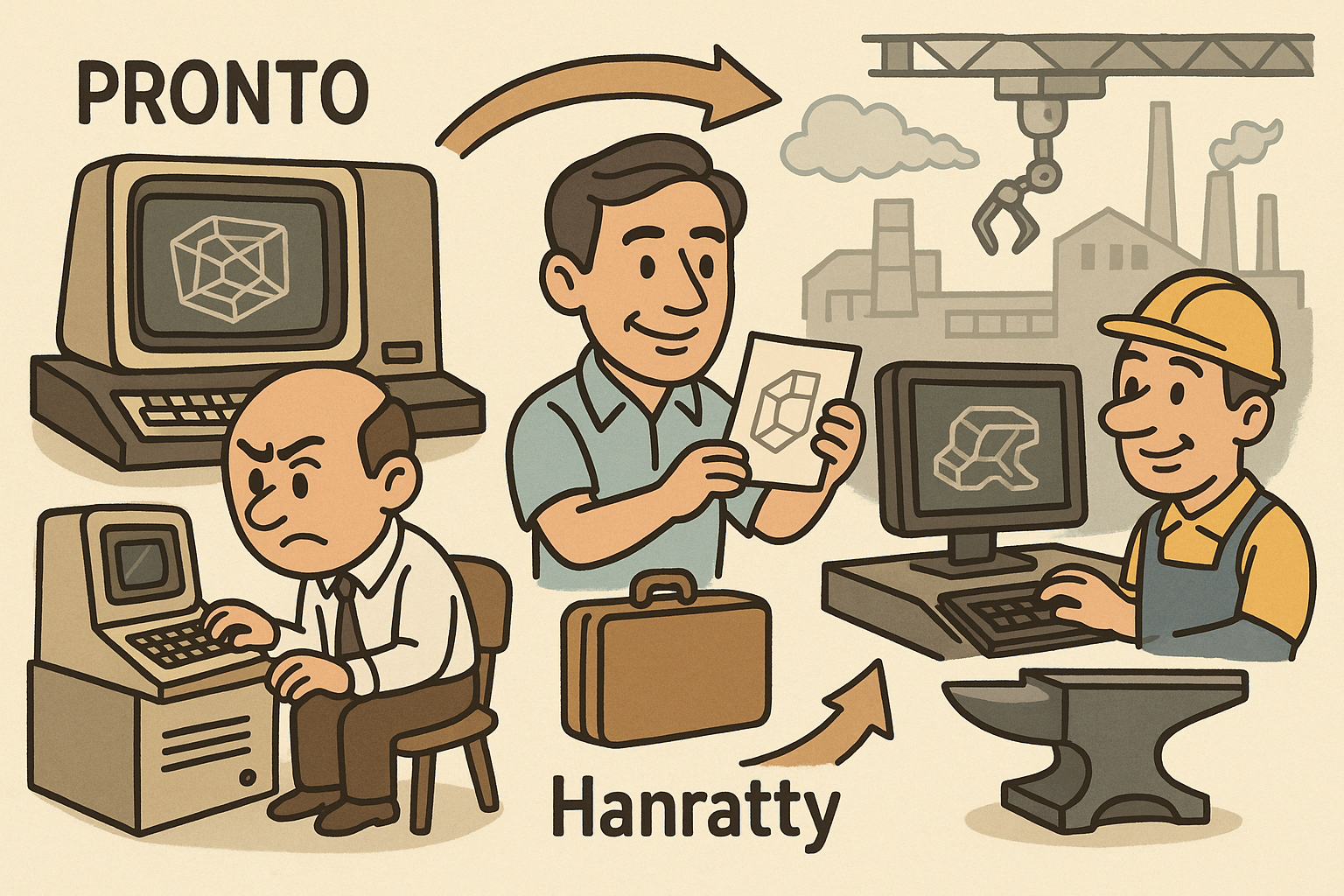

Hanratty as the connective thread linking NC, minicomputers, and vendors

Patrick J. Hanratty sits at the junction of these currents. Credited with writing PRONTO at General Electric in 1957—often cited as the first commercial NC programming language—he absorbed early that toolpath logic and geometric description could be expressed together. His work ran in parallel with the USAF/MIT APT project led by Douglas T. Ross, and the cross-current between PRONTO’s pragmatism and APT’s formalism seeded a career-long obsession: CAD had to be CAM-aware from the start. Decades later, when minicomputers democratized compute, Hanratty’s insight turned operational. He founded MCS (Manufacturing and Consulting Services) in 1971 with a deliberate break from earlier mistakes: code first, hardware-agnostic, engineering-minded, and licensable to anyone serious about building a system. This was not the swagger of a monolithic package; it was the patience of an ecosystem builder who understood that vendors rise and fall, but a portable substrate can outlive them. In effect, he created a clearinghouse where geometry, drafting, and NC were not siloed modules but a common codebase that licensees could tailor to their markets. That posture linked aerospace needs, job-shop pragmatics, and the ambitions of emerging CAD houses, making Hanratty less a lone inventor and more an industry catalyst whose code quietly ran behind other firms’ names.

By the time interactive graphics matured on workstations, much of the heavy lifting—coordinate systems, cutter compensation, curve libraries, drafting conventions—was already available as licensable building blocks. This made it possible for multiple vendors to accelerate simultaneously, propelling a multi-vendor marketplace rather than a single conqueror.

An ecosystem thesis: portable code, licensable components, cross-pollinating teams

History tends to crown singular breakthroughs, but CAD/CAM flourished because the pieces could travel: code, people, and ideas hopped between companies and machines with relatively little friction. The most enduring advances—the kind that won procurement cycles and survived recessions—were portable, vendor-neutral, and built for licensing. MCS’s ADAM codebase functioned as a substrate that fed multiple commercial lines; Shape Data’s boundary-representation research became portable kernels (ROMULUS, later Parasolid); APT made manufacturing intent expressible; and graphics firms like Evans & Sutherland lifted the floor for interactive expectations across the board. Just as important were the “little-knowns”—researchers who formalized solids (Herbert Voelcker and Aristides Requicha), architectural computing visionaries (Charles Eastman), and shop-floor pragmatists (Joseph Gerber) who made output devices reliable. Together, they made workflows trustworthy end to end.

Put bluntly, CAD’s overlooked lesson is that commercialization architecture is itself an invention. The decision to code in Fortran for minicomputer portability, to modularize geometry into licensable kernels, to adopt neutral exchange (from IGES to later STEP), and to align UI metaphors with drafting conventions all multiplied the impact of any given algorithm. The result was a fertile vendor ecosystem where improvement in one layer—curves, data exchange, kernels, framebuffers—could propagate rapidly. That is why the story still matters today: it explains why some tools endure across decades and why standards and kernels quietly shape product pipelines long after original brands have changed hands.

Patrick J. Hanratty’s through-line: from PRONTO to ADAM (and ANVIL)

Early NC foundations: PRONTO beside APT

In 1957, working at General Electric, Patrick J. Hanratty authored PRONTO, widely credited as the first commercial NC language dedicated to automating machine tool instructions. PRONTO’s character was pragmatic: instead of theory-first abstractions, it captured the shop’s operational grammar—feeds, speeds, coordinates—while keeping the syntax graspable to skilled machinists transitioning from punched cards and manual programming. Running in parallel, the USAF-sponsored APT (Automatically Programmed Tools) project at MIT, under Douglas T. Ross and collaborators, aimed to define a high-level language that expressed manufacturing intent through geometric constructs and cutter motions. APT introduced powerful semantics (like DRIVE, CHECK, PART surfaces) that formalized how code could represent not just points and vectors but production-ready decisions.

The two streams, PRONTO’s commercialization and APT’s standardization, had a profound combined effect. First, they validated that programs could safely pilot expensive machinery without a human reinterpreting the drawing for the controller. Second, they quietly made geometry the lingua franca of the factory. Even when displays were primitive, the notion that a “model” existed in software had already taken hold, because the NC tape originated from a mathematical description of part and process. This is the root of the CAD–CAM hyphen: a recognition that design data gains its full value only when it flows to manufacturing without reinvention downstream. Hanratty’s later insistence that drafting, geometry, and toolpathing belong in one coherent codebase grows directly out of this early NC worldview.

Lessons from a false start and the portability pivot

Before MCS, Hanratty experienced a painful but pivotal lesson. An earlier venture—often referenced as Integrated Computer Systems (ICS)—tied its software too tightly to a proprietary platform and operating system. When the hardware vendor’s star dimmed, the software’s market narrowed with it. The episode was instructive in a way that textbooks rarely are: it revealed that in a fragmented minicomputer landscape, portability is survival. In 1971 he formed MCS with a Fortran-first, machine-independent philosophy. Fortran was not glamorous, but it compiled on the workhorses of the day (DEC, HP, Data General, IBM) and let code move where customers needed to run it. That portability mandate shaped everything from data structures to user I/O. Rather than hardwiring device specifics, MCS brokered clean interfaces, allowing licensees to bolt on their preferred graphics terminals, digitizers, and plotters.

This pivot had immediate commercial implications. Portable code could be licensed broadly, amortizing R&D costs and attracting feedback from diverse industries—automotive tooling, aerospace, general machinery—each bringing corner cases that hardened the software. It also enabled a virtuous talent cycle: engineers and mathematicians circulated between licensees and MCS, cross-pollinating implementation practices and turning the “Hanratty style” of simple, debuggable, and consistent geometry routines into a de facto craft standard. When later vendors bet on workstation GUIs and higher-order surfaces, they often did so atop the comfort that an existing portable core of drafting and NC logic was available and proven.

ADAM: the licensable CAD/CAM substrate

MCS’s ADAM (Automated Drafting And Machining) embodied the portability credo. Rather than split drafting from CAM, ADAM interwove them in one codebase: linework and dimensioning routines lived alongside curve libraries, offsetting algorithms, and toolpath generators. The effect was to keep geometrical and manufacturing semantics in sync—no lossy handoffs between packages, fewer reinventions of coordinate frames, and a consistent treatment of tolerances. ADAM targeted minicomputers and was engineered to compile across manufacturers, turning portability into a commercial differentiator. That stance invited OEM agreements and licenses: emerging CAD/CAM vendors and even some minicomputer makers took ADAM as a starting point, adding device drivers, UI layers, and domain-specific modules.

Among the better-known outcomes was its influence on the early trajectory of United Computing, whose UNIAPT bridged APT-style programming with emerging interactive graphics and whose Unigraphics lineage would become a durable industrial platform. Many industry veterans have noted how ADAM-derived code and concepts seeded multiple commercial systems during the 1970s and early 1980s, creating an underlayer of common practice in drafting, geometry, and NC. The details varied—some licensees took substantial portions; others learned from interfaces and algorithms and rewrote—but the architectural pattern repeated: a shared substrate enabling faster productization. This also encouraged the notion of licensable components more broadly. If drafting/NC could be a productized foundation, then kernels, translators, and visualization engines could, too. In that sense, ADAM foreshadowed the later market for geometry kernels (ROMULUS, Parasolid, ACIS) and neutral file translators that defined much of the 3D CAD economy.

Productizing the stack: ANVIL’s path to end-user adoption

MCS evolved ADAM into ANVIL, including ANVIL-4000 and the later ANVIL-5000, as a complete end-user offering spanning drafting, NC programming, and, over time, richer surface and solid options. The name signaled intent: this was a tool for making, not just modeling. ANVIL gained a reputation for practical completeness: dimensioning that respected standards, toolpaths that matched shop-floor needs, and geometry libraries that absorbed then-modern curve and surface representations. As workstation graphics improved, ANVIL incorporated more interactive capabilities without losing sight of the postprocessors, machine definitions, and process planning needed to turn screen geometry into chips and parts. Across shops, the appeal was that a single system could sketch a part, detail it, plan its milling and turning, and drive the controllers without brittle exchanges. That durability is why many dubbed Hanratty the “father of CAD/CAM”: not merely for early invention but for enabling an ecosystem, a layer others could build on.

ANVIL’s influence also traveled through people. Engineers trained on ANVIL-era workflows carried expectations—portable file exchange, robust dimensioning semantics, and integrated NC—into the companies they later joined. In an industry where talent networks are as important as code, those expectations nudged other vendors to prioritize end-to-end consistency. The broader lesson was unmistakable: productizing the stack to include drafting, geometry, and manufacturing was not bloat; it was acknowledging that the value of CAD is realized only when it cleanly lands on the shop floor. Features that seem “supporting”—postprocessors, machine libraries, tolerance schemas—are, in manufacturing terms, the main event.

The little-known pioneers (and how their work intertwined)

APT, PRONTO, and the birth of intent-driven geometry

Douglas T. Ross’s work at MIT on APT, under USAF sponsorship, reframed programming for manufacturing. APT introduced a vocabulary where geometry, tool motion, and part semantics were first-class concepts. Its power lay in declaring surfaces and cutter behavior relative to those surfaces, paving the way for geometry to encode intent rather than mere lines. In parallel, Hanratty’s PRONTO offered commercial immediacy, tightening the feedback loop between ideas and the chips produced at the mill. The productive tension between the two streams established a template still with us: a high-level, intent-rich description compiled to device-specific instructions via a maturing toolchain of postprocessors and controllers.

This is more than a footnote; it is the pattern for CAD/CAM integration ever since. Whether one examines a modern feature tree in a solid modeler or an associative toolpath update after a design change, the operating principle echoes APT and PRONTO: describe relationships in software, then rely on stable transformations and verified postprocessing to execute. Early on, this forced attention on data structures that could survive translation—from interactive drafting terminals to NC tapes and back again for revisions. It also birthed the culture of extensive verification: backplotting, simulation, and, later, full-on material removal simulation all descend from the realization that code now owns the process narrative. The pioneers in this era did not only script motions; they legitimized software as the place where manufacturing knowledge lives and evolves.
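The pattern described above, one neutral description of tool motion translated into controller-specific output, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a reconstruction of APT, PRONTO, or any historical postprocessor: the `Move` record, the `MetricMillPost` and `InchMillPost` classes, and the two "controllers" they target are all hypothetical. The only real-world vocabulary used is the common G-code words `G0`/`G1` (rapid and linear feed), `G20`/`G21` (inch and millimetre units), `G90` (absolute positioning), and `M30` (program end).

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Move:
    """Hypothetical controller-neutral tool move, in millimetres."""
    x: float
    y: float
    z: float
    rapid: bool = False
    feed: float = 0.0  # mm/min; ignored for rapid moves


class MetricMillPost:
    """Hypothetical postprocessor for a controller that expects millimetres."""
    scale = 1.0        # neutral units are already mm
    units_word = "G21"
    coord_fmt = "{:.3f}"
    feed_fmt = "{:.1f}"

    def format_move(self, move: Move) -> str:
        # Convert the neutral coordinates into this controller's units.
        xyz = " ".join(
            axis + self.coord_fmt.format(value * self.scale)
            for axis, value in (("X", move.x), ("Y", move.y), ("Z", move.z))
        )
        if move.rapid:
            return "G0 " + xyz
        return "G1 " + xyz + " F" + self.feed_fmt.format(move.feed * self.scale)

    def emit(self, moves: Iterable[Move]) -> List[str]:
        # Header sets units and absolute mode; footer ends the program.
        lines = [self.units_word, "G90"]
        lines.extend(self.format_move(m) for m in moves)
        lines.append("M30")
        return lines


class InchMillPost(MetricMillPost):
    """Hypothetical postprocessor for a controller that expects inches."""
    scale = 1.0 / 25.4
    units_word = "G20"
    coord_fmt = "{:.4f}"
    feed_fmt = "{:.2f}"


# One neutral toolpath, two device-specific programs.
toolpath = [
    Move(0.0, 0.0, 5.0, rapid=True),
    Move(25.4, 0.0, -2.54, feed=254.0),
]
metric_program = MetricMillPost().emit(toolpath)
inch_program = InchMillPost().emit(toolpath)
```

The design point is that `toolpath` never changes when the target machine does; only the thin translation layer is swapped, which is the property that made a shared geometry and NC core worth licensing.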

Bridging the drafting room and the shop: Joseph Gerber and the PC democratizers

Joseph Gerber, through Gerber Scientific, built a commercial bridge from manual drafting to computer-controlled output with photoplotters, automated drafting machines, and the now-ubiquitous Gerber format for PCB manufacturing. In an age before standard raster devices and uniform graphics APIs, Gerber’s equipment and formats taught the industry that the fidelity of output mattered as much as on-screen fidelity. Reliability, calibration, and repeatability were not optional—they were the success criteria. That pragmatism shaped expectations for CAD output: hardcopy needed to be right, every time; numerical files needed to be readable by real machines without hand-editing. It is hard to overstate how much this hardware-driven reliability culture influenced software design choices about precision, tolerances, and display-to-device consistency.

Years later, Mike Riddle and John Walker carried a related torch into the PC era. Riddle’s Interact became the seed for AutoCAD at Autodesk, where Walker and co-founders built a pragmatic, scriptable 2D system that ran on commodity DOS hardware. The achievement was not a leap in theoretical geometry but in portability across mass-market PCs, a text-first command interface friendly to automation, and data formats like DXF that allowed a de facto neutral exchange. That choice catalyzed broad adoption in architecture, engineering, and fabrication shops that could not afford minicomputers or proprietary terminals. Scriptability and openness made it easier for resellers and customers to build micro-ecosystems of add-ons, echoes of the earlier licensing culture but now distributed across thousands of small firms. Democratization was not simply price; it was the decision to make automation and exchange accessible to anyone with an off-the-shelf PC.

Geometry kernels and solid modeling: Braid’s Shape Data meets Voelcker and Requicha

While ADAM and its descendants blended drafting with NC, another foundational layer was taking form in the UK at Cambridge: Ian Braid, with colleagues including Alan Grayer and Charles Lang at Shape Data, pushed boundary-representation (B-rep) modeling from academic prototypes (notably BUILD) into commercial kernels. ROMULUS, among the first commercial B-rep modelers, and later the Parasolid kernel, codified how edges, faces, and topological consistency should behave. Crucially, this was “kernelized” geometry: a licensable engine abstracted from any one CAD application. The echo with Hanratty’s approach is unmistakable. By making the geometry layer portable and licensable, Shape Data fostered a marketplace where many vendors could ship robust solids without each re-deriving topology management, Boolean robustness, and geometric evaluation. Parasolid’s later life, shaped through ownership changes including Evans & Sutherland, then McDonnell Douglas’s Unigraphics group, and eventually Siemens, illustrates how a stable, vendor-neutral kernel can outlast branding shifts and even architectural transitions in host systems.

In the United States, Herbert Voelcker and Aristides Requicha, working at the University of Rochester before later moves to Cornell and the University of Southern California respectively, provided the formalism that held these kernels to account. Through PADL research, they investigated regularized set operations, validity conditions, and semantics for constructive solid geometry (CSG). Their work provided the proofs and constraints that told implementers when a Boolean should be legal, where singularities must be managed, and how to avoid “dangling” topology. The combination of Braid’s commercialization of B-rep kernels with Voelcker and Requicha’s mathematical guardrails made modern solid modeling credible. It meant that toolmakers could promise not just expressive power but correctness: parts would be watertight, Booleans would be predictable, and downstream processes like meshing, machining, and analysis would receive models that behaved like physical objects.

Visualization, surfacing, and platform durability: E&S, Bézier and de Casteljau, and United/Unigraphics

Evans & Sutherland (E&S), co-founded by David Evans and Ivan Sutherland after Sutherland’s epochal Sketchpad work, raised interactive graphics performance to a level that let CAD users expect smooth pans, zooms, and shaded previews. Their framebuffers, graphics libraries, and visualization pipelines seeded both hardware products and mental models: users began to assume that geometry could be explored in real time. That expectation looped back into CAD/CAM software design, where rendering and selection precision had to respect engineering tolerances, not just visual plausibility. The growth of workstation vendors and E&S-influenced pipelines also encouraged standardized graphics abstractions, making it more feasible for portable CAD code to target multiple displays and input devices without rewriting core geometry.

On the geometry side, engineers at Renault and Citroën had already been refining the mathematics of curves and surfaces. At Renault, Pierre Bézier systematized the parametric polynomial curves and surfaces that eventually bore his name, while at Citroën, Paul de Casteljau developed the subdivision algorithm that underlies their evaluation. Although de Casteljau’s work remained internal for years, the combined effect matured industrial surfacing: smooth, fair, and manufacturable bodies that could be evaluated, offset, and sectioned. Hanratty-era systems absorbed these representations as surface libraries expanded, linking visual aesthetics to feasible tooling paths and panel development.

Meanwhile, United Computing’s trajectory (UNIAPT to Unigraphics, acquisition by McDonnell Douglas, later life under EDS and then Siemens) illustrates platform durability born of integration. United fused CAM roots with increasingly interactive CAD on minicomputers and then workstations, reportedly leveraging Hanratty’s code in its early days and later investing heavily in geometry and process integration. Anchored by strong postprocessing and, in later decades, robust kernel and data management choices, Unigraphics/UG/NX became a byword for large-scale, production-centric CAD/CAM. The through-line binding these strands is ecosystems thinking: graphics that honor precision, surfacing that respects manufacturability, and platforms that carry process knowledge forward across hardware generations.
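To make the curve mathematics mentioned above concrete, here is a minimal sketch of de Casteljau’s algorithm as it is usually presented today: a point on a Bézier curve is found by repeatedly interpolating between neighbouring control points until one point remains, and the intermediate points also give the control polygons of the two sub-curves. The function names (`lerp`, `de_casteljau`, `split_curve`) and the sample control points are illustrative assumptions, not drawn from any historical system.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def lerp(p: Point, q: Point, t: float) -> Point:
    """Linear interpolation between two points of the same dimension."""
    return tuple((1.0 - t) * a + t * b for a, b in zip(p, q))


def de_casteljau(control_points: Sequence[Point], t: float) -> Point:
    """Evaluate a Bezier curve at parameter t by repeated interpolation."""
    if not control_points:
        raise ValueError("at least one control point is required")
    points: List[Point] = [tuple(float(c) for c in p) for p in control_points]
    # Each pass replaces n points with n - 1 interpolated points.
    while len(points) > 1:
        points = [lerp(points[i], points[i + 1], t) for i in range(len(points) - 1)]
    return points[0]


def split_curve(
    control_points: Sequence[Point], t: float
) -> Tuple[List[Point], List[Point]]:
    """Split a Bezier curve at t into two curves of the same degree."""
    if not control_points:
        raise ValueError("at least one control point is required")
    points: List[Point] = [tuple(float(c) for c in p) for p in control_points]
    left: List[Point] = []
    right: List[Point] = []
    # The first and last point of every pass are control points of the halves.
    while points:
        left.append(points[0])
        right.append(points[-1])
        points = [lerp(points[i], points[i + 1], t) for i in range(len(points) - 1)]
    right.reverse()
    return left, right


# Illustrative quadratic curve in the plane.
quadratic = [(0.0, 0.0), (1.0, 2.0), (2.0, 0.0)]
midpoint = de_casteljau(quadratic, 0.5)
left_half, right_half = split_curve(quadratic, 0.5)
```

Because the same interpolation passes both evaluate and subdivide the curve, operations such as sectioning and adaptive tessellation for toolpaths can be built on one numerically stable routine.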

Conclusion

Commercialization architecture as the overlooked invention

It is tempting to tell CAD history as a march of on-screen marvels. But the overlooked lesson is architectural and commercial: the winning bets were portability, licensability, and integration with manufacturing. PRONTO and APT legitimized software as the repository of manufacturing intent. Hanratty’s ADAM showed how one portable codebase could fuse drafting, geometry, and NC and then be licensed widely to accelerate multiple vendors at once. Shape Data’s ROMULUS and Parasolid reinforced the kernelization model, letting many firms share a proven geometric core. Visualization pipelines from Evans & Sutherland, coupled with the curve/surface mathematics of Bézier and de Casteljau, raised expectations for interactivity and finish quality while staying compatible with tooling realities. Researchers like Voelcker and Requicha supplied the formal integrity that kept models trustworthy under Boolean and topological operations. And democratizers like Mike Riddle and John Walker proved that portability across commodity PCs, coupled with scriptability and open exchange, could transform markets as surely as any new algorithm.

This commercialization architecture was not incidental: it disciplined the industry to value reusable components, neutral exchange, and downstream robustness. Even standards like IGES and, later, STEP can be understood as expressions of the same ethos: data should survive vendor changes, hardware refreshes, and long product lifecycles. In a field where success is measured by parts delivered and buildings erected, the most consequential design decisions are often those that make tools predictable to integrate, easy to license, and reliable under change. That is why the industry’s center of gravity remains with those who build for the whole pipeline, not only the screen.

Ecosystems win: a durable takeaway for the next generation

Hanratty’s arc—from PRONTO to ADAM to ANVIL—is a case study in the power of designing for reuse: a single, portable foundation can seed an industry. The “little-knowns” supplied the math, semantics, and hardware pathways that let this foundation become a marketplace. And the vendors who flourished most did so by absorbing, licensing, and contributing to that shared substrate. As new waves arrive—computational design, cloud collaboration, real-time simulation, additive manufacturing—the same lesson applies. The tools that will endure are those that honor interfaces, keep kernels neutral, and maintain fidelity from intent to production. In practice, that means:

- Choosing data models and kernels that travel across platforms and vendors without semantic loss.

- Treating NC, simulation, and visualization as peers in the stack, not afterthoughts.

- Licensing and contributing to components where shared investment yields robustness—geometry kernels, translators, postprocessors.

- Designing UIs and automation hooks that let organizations encode process knowledge directly into models.

These are not romantic ideas, but they are the ones that last. The names on boxes will continue to change; the hardware will keep shifting under our feet. Yet the value endures wherever engineers and toolmakers commit to portability, licensability, and integrated manufacturing workflows. That is the through-line from the 1950s to now—and it is the surest compass for what comes next.
