Design Software History: From PDM Vaults to Enterprise PLM: Integration, Standards, and the Digital Thread

March 13, 2026 15 min read

From PDM Vaults to Enterprise PLM: Why Integration Became Inevitable

1980s–1990s File-Centric Foundations

Before enterprises talked about platforms and digital threads, engineering teams lived inside file vaults. The late 1980s and 1990s saw the rise of Product Data Management (PDM) as a pragmatic response to the chaos of proliferating CAD files, incomplete check-ins, and lost revisions. EDS/UGS brought iMAN to large OEMs who needed controlled check-in/out and repeatable release processes as CAD moved from drafting rooms to global programs. SDRC’s Metaphase carved a niche as a configurable vault and change system that could span complex programs. PTC, expanding from Pro/ENGINEER’s parametric dominance, introduced Pro/PDM and later Pro/INTRALINK to put discipline around versioning, dependencies, and part-family reuse. In the broader market, SmarTeam (an Israeli startup later acquired by Dassault Systèmes) and MatrixOne (used widely in high-tech) demonstrated that flexible metadata schemas and browser-based clients could democratize vaulting beyond a single CAD ecosystem. These systems formalized the core grammar of engineering release practice: workspace vs. vault, baseline vs. iteration, late-binding assemblies, and state-controlled access. Yet they were explicitly file-centric: geometry and drawings reigned supreme, item masters were vestigial, and cross-discipline traceability, if it existed at all, was bolted on. In short, PDM solved the immediate pain of controlling CAD artifacts, but it left lifecycle questions (configuration options, supplier splits, and service impacts) largely unanswered, setting the stage for a shift from vaults to PLM.

- Core PDM verbs: check-in/out, revise, baseline, release, and re-use via approved libraries.

- Notable products: iMAN (EDS/UGS), Metaphase (SDRC), Pro/PDM and Pro/INTRALINK (PTC), SmarTeam, MatrixOne.

- Primary value: reduce file chaos, ensure drawing/part consistency, and enforce gated approvals.

Enterprise Pressures Beyond PDM

As the 1990s rolled into the 2000s, the realities of globalized supply chains overwhelmed file-first thinking. Tiered development across North America, Europe, and Asia introduced effectivity windows, late supplier substitutions, and country-specific compliance requirements that could not be tacked onto a vault without buckling it. Variant-rich product lines demanded configuration rules that related options to serial ranges and plant routes, driving the notion of a 150% BOM: a superset structure from which specific variants are derived by applying options and effectivity. Meanwhile, engineering stopped being purely mechanical. Electrical design flowed in with OrCAD, Mentor Graphics, and Cadence; embedded software and calibration data started dictating schedules. That put pressure on PDM to talk to ECAD libraries, link firmware baselines, and participate in quality workflows spanning FMEAs, CAPAs, and PPAP submissions. Regulatory regimes (REACH and RoHS for substances, ITAR/EAR for export control, ISO/TS 16949 for automotive quality) ratcheted up auditability and retention mandates. PDM’s local schema customizations could not keep pace with the choreography demanded by ECO/ECN processes that now traversed design centers, contract manufacturers, and service organizations. The answer was to elevate data above files: durable item masters, formalized change objects, governed processes, and cross-domain linkages that reinterpreted “product” as a living, evolving system. That conceptual leap is what transformed PDM into Product Lifecycle Management (PLM).

- Globalization pressure points: supplier offloading, late localization, and serialization traceability.

- Cross-discipline complexity: MCAD–ECAD–software co-design and synchronized release cycles.

- Compliance escalations: substances, export control, and audit trails spanning years or decades.

Vendor Pivots and Alliances

Vendors read the tea leaves and pivoted hard to lifecycle scope. PTC catalyzed its transition by acquiring Windchill Technology in 1998; with Jim Heppelmann later steering strategy, Windchill reframed data as items, processes, and configurations rather than just CAD files. UGS, under Tony Affuso and later Chuck Grindstaff, unified iMAN and SDRC’s Metaphase into Teamcenter, positioning it as an enterprise backbone that could manage multi-CAD, requirements, and manufacturing data while keeping the JT visualization format open. Dassault Systèmes, led by Bernard Charlès, extended CATIA with ENOVIA and deepened its enterprise footprint by acquiring MatrixOne in 2006, ultimately setting the stage for the 3DEXPERIENCE platform’s shared ontology across CATIA, Simulia, and Delmia. Autodesk pursued a cloud-first entry with PLM 360 (later Fusion 360 Manage), while Aras, founded by Peter Schroer, championed in Aras Innovator an upgradeable, model-based core that encouraged federation over rip-and-replace. The long-standing IBM–Dassault alliance epitomized the shift from selling CAD seats to selling end-to-end enterprise process platforms: pre-integrated stacks, industry templates, and global delivery muscle that spoke CIO language. In all cases, the narrative coalesced around moving beyond vaults toward governed relationships (linking requirements to configurations, configurations to processes, processes to suppliers, and suppliers to service feedback), forming the early contours of what would later be branded as the digital thread.

- PTC Windchill: process-centric PLM with robust change and configuration.

- UGS Teamcenter: unification strategy and multi-CAD scale, later evolving to Teamcenter X in SaaS form.

- Dassault ENOVIA + MatrixOne: enterprise scope and a shared platform model under 3DEXPERIENCE.

- Autodesk Fusion 360 Manage and Aras Innovator: cloud-first and open, upgradeable alternatives.

How Integration Works: Architectures, Data Models, and Standards

In-CAD PLM Adapters

Integration starts at the authoring front line. In-CAD PLM adapters embed clients into CATIA, Pro/ENGINEER/Creo, NX, SolidWorks, and others to collapse distance between modeling activity and enterprise governance. The adapter’s job is to map CAD-native constructs—parts, assemblies, drawings, attributes, family tables—into PLM item masters, relationships, and lifecycle states without forcing designers out of flow. Designers can initiate change tasks, link to problem reports, and push maturity states (e.g., In Work to Released) directly from their modeling session, while the adapter ensures dependency awareness and check-in atomicity to prevent orphaned links. Multi-CAD adds another layer: neutral identifiers insulate enterprise structures from CAD-specific naming habits; cross-tool dependency tracking keeps an NX assembly synchronized with supplier SolidWorks components; and part-family logic manages both parametric variability and catalog parts. Critically, these adapters are not just UI veneers. They enforce referential integrity, apply attribute validation, and capture who-touched-what for audit trails—key in regulated environments. Today’s adapters increasingly use REST APIs and event callbacks, replacing older COM/CORBA bridges, enabling asynchronous updates, and improving resilience for globally distributed teams that cannot rely on LAN-like latencies. The result is an authoring experience that feels native to the CAD tool yet is inseparably bound to enterprise lifecycle control.

- Core functions: part/assembly check-in, attribute mapping, lifecycle state transitions, and change participation.

- Multi-CAD patterns: neutral IDs, family table harmonization, and cross-tool dependency awareness.

- Reliability: transactional check-ins and validation ensure no broken references reach PLM.
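
The check-in atomicity and lifecycle transitions described above can be sketched in a few lines. This is a minimal in-memory illustration, not any vendor's adapter API: the `Vault` class, the `Item` fields, and the state names in `ALLOWED` are all hypothetical, and a real adapter would wrap the same checks in a server-side transaction.

```python
from dataclasses import dataclass, field

# Hypothetical lifecycle map: which target states each state may move to.
ALLOWED = {
    "In Work": {"In Review"},
    "In Review": {"In Work", "Released"},
    "Released": set(),  # released items change only through a new revision
}


@dataclass
class Item:
    item_id: str                      # neutral ID, independent of CAD file names
    state: str = "In Work"
    children: list[str] = field(default_factory=list)  # referenced item IDs


class Vault:
    """Toy stand-in for the PLM side of an in-CAD adapter (assumption)."""

    def __init__(self) -> None:
        self.items: dict[str, Item] = {}

    def check_in(self, batch: list[Item]) -> None:
        """Validate every reference first, then commit the whole batch or nothing."""
        known = set(self.items) | {item.item_id for item in batch}
        for item in batch:
            missing = [c for c in item.children if c not in known]
            if missing:
                raise ValueError(f"{item.item_id} references unknown items: {missing}")
        for item in batch:  # reached only if the entire batch is valid
            self.items[item.item_id] = item

    def promote(self, item_id: str, new_state: str) -> None:
        """Apply a lifecycle transition only if the state map permits it."""
        item = self.items[item_id]
        if new_state not in ALLOWED[item.state]:
            raise ValueError(f"{item.state} -> {new_state} is not allowed")
        item.state = new_state
```

Checking in an assembly whose child part is absent from both the vault and the batch raises before anything is stored, which is the "no broken references reach PLM" guarantee in miniature.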

Product Structure and BOM Coherence

The beating heart of PLM is the product structure, and keeping it coherent across engineering and manufacturing is a perennial challenge. CAD assemblies reflect design intent—sub-assemblies that ease modeling, skeletons for top-down control, and reference-only items for positioning—while manufacturing BOMs reflect how things are built: make/buy splits, phantom assemblies, and plant-specific routings. PLM mediates with a super-set called the 150% BOM, an inclusive structure that encodes all potential options and regional variations. Options and effectivity rules (serial number, date, plant) carve this into exact 100% views for orders. Engineering changes (ECO/ECN) propagate across these views using change objects with rationale, impact analysis, and cut-in logic; the system preserves as-released and as-maintained snapshots for traceability. Crucially, PLM must reconcile where CAD structure diverges from mBOM: combining design-only sub-assemblies, expanding fastener kits into purchase items, or translating CAD placeholders into off-the-shelf parts. Doing this at scale requires configuration engines, structure comparison tools, and role-aware views for engineering, procurement, and manufacturing. When it works, PLM becomes the arbiter of truth: CAD stays expressive, mBOM stays executable, and both remain synchronized through changes, variants, and lifecycle states.

- Reconciliation goals: preserve design intent while ensuring manufacturability and procurement clarity.

- Change control: ECO/ECN with controlled cut-in, effectivity, and closed-loop actions into ERP/MES.

- Traceability: as-designed, as-built, and as-maintained snapshots underpin service and compliance.
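
To make the 150% to 100% derivation concrete, here is a small sketch of filtering a superset BOM by selected options, plant, and date effectivity. The `BomLine` fields, part numbers, and the `resolve` function are illustrative assumptions; production configuration engines also handle serial-number effectivity, nested structures, and rule conflicts, none of which appear here.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class BomLine:
    part: str
    qty: int
    options: frozenset = frozenset()  # line applies only if all of these are selected
    plant: Optional[str] = None       # None means the line applies at every plant
    valid_from: date = date.min       # date effectivity window (inclusive)
    valid_to: date = date.max


def resolve(bom150, selected, plant, on):
    """Carve a 100% view out of the 150% structure."""
    return [
        line for line in bom150
        if line.options <= selected               # option rule satisfied
        and line.plant in (None, plant)           # plant effectivity
        and line.valid_from <= on <= line.valid_to  # date effectivity
    ]


bom150 = [
    BomLine("FRAME-100", 1),
    BomLine("MOTOR-EU", 1, options=frozenset({"230V"})),
    BomLine("MOTOR-US", 1, options=frozenset({"120V"})),
    BomLine("BRACKET-A", 2, valid_to=date(2025, 6, 30)),    # superseded by an ECO
    BomLine("BRACKET-B", 2, valid_from=date(2025, 7, 1)),   # cut-in date
    BomLine("LABEL-DE", 1, plant="MUNICH"),
]

variant = resolve(bom150, frozenset({"230V"}), "MUNICH", date(2025, 9, 1))
print([line.part for line in variant])
# ['FRAME-100', 'MOTOR-EU', 'BRACKET-B', 'LABEL-DE']
```

The two bracket lines show how a change cut-in looks in data: both revisions stay in the superset, and the effectivity dates decide which one a given order sees.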

Geometry and Visualization Pipelines

Enterprises need both precision and scalability. Authoring geometry is preserved as the master—precise B-rep, parametric features, sketches, and constraints—while downstream consumers need lightweight visualization for Digital Mock-Up (DMU), clash detection, and shop-floor guidance. The common pattern is master/derived separation: authoring data stays in native CAD and STEP repositories; visualization flows are generated as light formats like JT (ISO 14306), 3DXML, and DWF, enabling massive assemblies to be loaded quickly without full CAD licenses. Long-term archiving and interoperability rest on STEP, with AP203 and AP214 converging into AP242, which formalizes PMI for Model-Based Definition (MBD), including semantic tolerances rather than just dumb annotations. LOTAR initiatives define how to preserve these artifacts for decades, addressing geometry, PMI, and evolution of standards. In document-centric streams, 3D PDF (PRC/U3D) supports downstream consumption where IT barriers preclude thick clients. Metrology integration is cemented via QIF from the DMSC, creating a path from MBD to inspection planning and results capture so that measurement data closes the loop into change analysis and quality dashboards. The pipeline must be version-aware and secure, ensuring that visualization derivatives are consistent with master revisions and respecting export-control rules even at the tessellation level.

- Master vs. derived: keep B-rep authoritative; generate light DMU for scale and ubiquity.

- Standards: STEP AP242 for MBD/PMI, JT for visualization, LOTAR for retention, QIF for metrology.

- Governance: derivative regeneration, watermarking, and access policies aligned with lifecycle states.
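
The version-awareness requirement can be illustrated with a simple consistency check between masters and their lightweight derivatives. The record shapes and the `plan_regeneration` function below are hypothetical; they only show the idea that a derivative remembers which master revision it came from and inherits the master's export-control tag.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Master:
    item_id: str
    revision: str
    export_controlled: bool


@dataclass(frozen=True)
class Derivative:
    item_id: str
    source_revision: str      # master revision this file was generated from
    fmt: str                  # e.g. "JT"
    export_controlled: bool   # must mirror the master's tag


def plan_regeneration(masters, derivatives, fmt="JT"):
    """Return item IDs whose derivative is missing, stale, or mis-tagged."""
    by_item = {d.item_id: d for d in derivatives if d.fmt == fmt}
    todo = []
    for m in masters:
        d = by_item.get(m.item_id)
        if (
            d is None
            or d.source_revision != m.revision
            or d.export_controlled != m.export_controlled
        ):
            todo.append(m.item_id)
    return todo


masters = [
    Master("PRT-1", "B", False),
    Master("PRT-2", "A", True),
    Master("PRT-3", "A", False),
]
derivatives = [
    Derivative("PRT-1", "A", "JT", False),  # stale: master moved to revision B
    Derivative("PRT-2", "A", "JT", True),   # current and correctly tagged
]
print(plan_regeneration(masters, derivatives))
# ['PRT-1', 'PRT-3']
```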

Cross-Domain Integration

No product is purely mechanical any longer. MCAD–ECAD collaboration matured through EDMD/IDX, allowing enclosure and board teams to iterate board outlines, component keep-outs, and connector placements without throwing spreadsheets over the wall. On the software side, requirements and ALM linkages became indispensable: IBM DOORS and Jama define intent; Jira or Azure DevOps track implementation work; PLM links configurations so that a specific firmware baseline is tied to a hardware configuration and released feature set. The OSLC family of specs underpins these cross-tool relationships with typed links and traceability graphs that survive system upgrades. ERP/MES integration remains the nerve center for execution: SAP and Oracle must receive item masters, change cut-ins, and routing updates in time to avoid building yesterday’s configuration. Governance defines source of truth for each artifact—PLM for items and structures, ERP for costing and execution, MES for as-built records—while synchronization patterns (publish/subscribe, handshakes, and reconciliation reports) ensure consistency. Across all of this, partner and supplier collaboration is mediated by secure portals, supplier-unique namespaces, and data minimization so contract manufacturers see what they must—but not intellectual property they do not need.

- MCAD–ECAD: IDX rounds, component clearance checks, and lifecycle synchronization for libraries.

- ALM links: requirements-to-test coverage and change impact from code back to hardware variants.

- ERP/MES sync: item/routing effectivity, demand-driven updates, and reconciliation dashboards.
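
Typed links between requirements, firmware baselines, and hardware configurations amount to a directed graph, and change impact is a walk over it. The sketch below is an assumption-laden illustration: the `TraceGraph` class, the link type names, and the artifact IDs are invented for the example and are not taken from the OSLC vocabularies.

```python
from collections import defaultdict, deque


class TraceGraph:
    """Minimal directed graph of typed links between lifecycle artifacts."""

    def __init__(self):
        self._out = defaultdict(list)  # source -> [(link_type, target), ...]

    def link(self, source, link_type, target):
        self._out[source].append((link_type, target))

    def impacted_by(self, start):
        """Breadth-first walk: every artifact reachable downstream of `start`."""
        seen = {start}
        queue = deque([start])
        order = []
        while queue:
            node = queue.popleft()
            for _link_type, target in self._out.get(node, []):
                if target not in seen:
                    seen.add(target)
                    order.append(target)
                    queue.append(target)
        return order


g = TraceGraph()
g.link("REQ-12", "implementedBy", "FW-2.3.1")
g.link("REQ-12", "validatedBy", "TEST-88")
g.link("FW-2.3.1", "runsOn", "PCB-REV-C")
g.link("PCB-REV-C", "usedIn", "VARIANT-EU-230V")

print(g.impacted_by("REQ-12"))
# ['FW-2.3.1', 'TEST-88', 'PCB-REV-C', 'VARIANT-EU-230V']
```

Keeping the link type on each edge costs little here and lets a fuller implementation filter the walk, for example following only release-relevant links when assessing a firmware change.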

Platform and Security Plumbing

Behind the scenes, PLM grew up with the enterprise middleware it lives on. Early adapters toggled between COM and CORBA; the 2000s were SOAP-heavy with WSDL-governed contracts; and the past decade shifted to REST and now GraphQL for cleaner payloads and incremental queries. Event-driven architectures are replacing nightly batch jobs: message buses, ESB patterns, and lightweight webhooks help PLM broadcast configuration changes to ALM, ERP, and analytics systems in near real time. Identity and access are non-negotiable. SSO via SAML or OAuth/OIDC allows least-privilege enforcement and auditability across supplier ecosystems. Export control adds geo-fencing and attribute tagging so that metadata and derivatives inherit restrictions; at scale, this means policy evaluation at every API call, not just UI sessions. Microservices carve out BOM engines, visualization services, or change workflows as independently scalable components, while container orchestration handles high-variance loads like visualization generation. The API story matters long after go-live: backward compatibility minimizes the cost of upgrades and feeds into the platform’s promise of lower lifecycle TCO. The north star is simple but hard: make integration explicit, observable, and secure so that the enterprise can trust the digital thread it is building, not just the geometry it is modeling.

- API evolution: COM/CORBA → SOAP → REST/GraphQL with versioned, documented contracts.

- Events: ESB to microservices plus webhooks for near-real-time change propagation.

- Security: SSO, fine-grained authorization, export-control enforcement, and end-to-end audit trails.
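
One way to picture "policy evaluation at every API call" combined with event-driven propagation is a publisher that checks each subscriber's clearance per delivery and records the outcome. Everything here is a hypothetical, in-process sketch: `ChangeBus`, `Subscriber`, and the single boolean clearance flag stand in for a real message broker and a real authorization service.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ChangeEvent:
    item_id: str
    change_id: str
    export_controlled: bool   # tag inherited from the item


@dataclass(frozen=True)
class Subscriber:
    name: str
    cleared_for_export_controlled: bool
    handler: Callable[[ChangeEvent], None]


class ChangeBus:
    """Synchronous publish/subscribe with a policy check on every delivery."""

    def __init__(self):
        self._subscribers = []
        self.audit = []  # (subscriber name, change id, "delivered" or "denied")

    def subscribe(self, subscriber):
        self._subscribers.append(subscriber)

    def publish(self, event):
        for sub in self._subscribers:
            allowed = sub.cleared_for_export_controlled or not event.export_controlled
            self.audit.append(
                (sub.name, event.change_id, "delivered" if allowed else "denied")
            )
            if allowed:
                sub.handler(event)


erp_inbox, supplier_inbox = [], []
bus = ChangeBus()
bus.subscribe(Subscriber("erp", True, erp_inbox.append))
bus.subscribe(Subscriber("supplier-portal", False, supplier_inbox.append))

bus.publish(ChangeEvent("PRT-7", "ECO-1001", export_controlled=True))
bus.publish(ChangeEvent("PRT-9", "ECO-1002", export_controlled=False))

print(len(erp_inbox), len(supplier_inbox))
# 2 1
```

Denied deliveries still land in the audit list, so the trail shows what was withheld as well as what was sent.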

Vendor Strategies and the March to Cloud, MBSE, and the Digital Thread

Dassault Systèmes: ENOVIA, MatrixOne, and 3DEXPERIENCE

Under Bernard Charlès, Dassault Systèmes evolved from a CATIA powerhouse into a platform company advocating a unified experience layer. ENOVIA began as CATIA’s data backbone, but the 2006 acquisition of MatrixOne expanded its enterprise workflows, security models, and flexible schemas favored by high-tech and life sciences. The culmination is the 3DEXPERIENCE platform: a shared data model that orchestrates CATIA for design, Simulia for simulation, and Delmia for manufacturing, wrapped with analytics and social collaboration surfaces. Dassault’s RFLP approach (Requirements, Functional, Logical, Physical) lays a foundation for MBSE, aligning systems engineering with downstream implementations. Industry templates matter: aerospace programs inherit governance models aligned with DO-178C, DO-254, and ITAR; process industries leverage recipe-centric data models and compliance workflows for GxP. On the standards front, Dassault has walked a pragmatic line, supporting STEP AP242 while pushing 3DXML and strong PMI for MBD. With cloud footprints expanding, the company blends private cloud deployments for regulated customers with growing SaaS offerings, aiming to provide a consistent data model and role-based apps across all tiers. The strategy is unmistakable: connect disciplines around a common ontology so configuration-aware simulation and manufacturing can ride the same digital thread that began in design.

- Key levers: RFLP for MBSE, industry templates, and unified simulation/manufacturing context.

- Data strategy: shared ontology across design, simulation, and production planning.

- Cloud stance: hybrid with increasing SaaS, preserving enterprise-grade governance.

Siemens (UGS Lineage): Teamcenter, JT Openness, and Teamcenter X

Siemens inherited UGS’s dual lineage of iMAN and Metaphase, consolidated as Teamcenter, and doubled down on openness around visualization with JT as a widely adopted, ISO-standardized lightweight format. With Chuck Grindstaff and later Tony Hemmelgarn steering strategy, Siemens emphasized scale, multi-CAD, and an execution-proximate footprint that speaks natively to plant and automation stacks. The acquisition of Mentor Graphics in 2017 put ECAD and embedded systems squarely inside the same narrative as NX and Simcenter; cross-domain flows now pivot on ECAD–MCAD co-design with robust library governance and mechatronic BOMs. Teamcenter X extends this to SaaS, reducing infrastructure overhead while retaining the configurability enterprises expect. Siemens positions simulation as integral to configuration: Simcenter can consume configuration rules from PLM and publish verification artifacts back, pushing toward configuration-aware simulation and validation loops. Standards support is practical and strong: AP242 for STEP, QIF for metrology integration, and deep IDX pipelines. The broader Siemens portfolio of automation, MES (Opcenter), and industrial IoT (MindSphere) gives the company vertical leverage to close the loop from requirements to line performance.

- Openness: JT for visualization, strong multi-CAD integration patterns.

- Mechatronics: ECAD/MCAD synergy accelerated by Mentor Graphics.

- Cloud: Teamcenter X for SaaS without losing enterprise configurability.

PTC: Windchill, Integrity/Codebeamer, ThingWorx, Onshape, and Arena

PTC’s PLM journey began with the Windchill acquisition and matured under Jim Heppelmann into a connected strategy spanning design, software, and IoT. Windchill serves as the backbone for robust change control and configuration management across Creo and multi-CAD; integrations with Integrity (now Windchill RV&S) and Codebeamer bridge into ALM and requirements. With ThingWorx, PTC linked PLM to operational telemetry, turning the digital thread into a feedback loop that informs service and design updates. The acquisition of Onshape (cloud-native CAD) in 2019 and Arena Solutions (cloud PLM) in 2020 set a clear SaaS trajectory: unify CAD–PLM on cloud infrastructure, leverage real-time collaboration, and reduce upgrade friction. PTC emphasizes MBD and MBSE compatibility, positioning Creo for semantic PMI and Windchill for downstream consumption of those annotations, including QIF pathways to metrology. The company’s narrative is increasingly about speed to value—preconfigured apps, role-based tasks, and out-of-the-box integrations—with an eye toward AI-assisted impact analysis across change proposals. The connective tissue—APIs, event buses, and secure federation with ERP—remains central, as PTC aims to let customers evolve from vaulting to closed-loop lifecycle management without losing their existing investments.

- Backbone: Windchill for configuration and change at enterprise scale.

- Cloud-native: Onshape + Arena toward unified SaaS CAD–PLM.

- Feedback loops: ThingWorx bridges product usage data to design decisions.

Autodesk: Fusion 360 Manage and the Convergence Vision

Autodesk entered PLM with a contrarian bet: deliver it from the cloud first. PLM 360 launched as a configurable, browser-based alternative that minimized infrastructure barriers for companies already entrenched in the Autodesk ecosystem. It has matured into Fusion 360 Manage, part of a convergence story where Fusion 360 spans CAD/CAM/CAE, and where APIs support configurators, CPQ partners, and supplier collaboration. Autodesk’s strength lies in democratization—bringing sophisticated lifecycle control to a broader tier of manufacturers and innovators who cannot carry the cost of legacy stack complexity. Visualization via DWF and broad 3D viewer support, combined with integrations into ERP and MES partners, aim to keep the tool approachable while progressively exposing advanced constructs like options/effectivity and ECO workflows. The cloud stance is unequivocal: embrace SaaS updates, prioritize ease of deployment, and build extensibility via REST APIs and low-code connectors. As MBD adoption grows, Autodesk’s challenge—and opportunity—is to deepen semantic PMI flows and strengthen long-term archival strategies, ensuring that design to production to service remains navigable without heavyweight governance standing in the way of agility.

- Cloud-first: low barrier to entry and rapid rollout across teams and suppliers.

- Convergence: CAD/CAM/CAE + PLM alignment inside Fusion 360’s ecosystem.

- Extensibility: REST APIs, app partners, and connectors for configurators and ERP.

Aras Innovator: Model-Based, Open, and Upgradeable

Aras, the company founded by Peter Schroer, staked the identity of Aras Innovator on three pillars that resonated with enterprises frustrated by brittle customizations: a model-based data and process layer, openness, and a commitment to upgrades even for heavily tailored deployments. Instead of forcing customers into a fixed schema, Aras treats data definitions and workflows as editable models that survive version-to-version transitions. That architecture encourages federation, tying together legacy PDM/PLM silos, specialized engineering databases, and even homegrown change systems into a workable, governed whole. The platform’s REST APIs and event services support incremental rollout patterns, where BOM and change might go first, followed by quality and supplier management. Aras’s community and subscription model cultivated a partner ecosystem that prioritizes re-use over one-off custom code, bringing both speed and sustainability to deployments. As MBSE takes root, Aras emphasizes cross-domain traceability (requirements, risks, verifications) while leaving CAD neutrality intact. The company’s pitch is blunt: reduce lifecycle cost by taming customization debt, keep the door open for new tools via standards (AP242, QIF, OSLC), and make upgrades predictable so the platform evolves alongside the business rather than calcifying.

- Core value: upgradeable customizations via model-based definitions and services.

- Federation: integrate rather than replace, respecting existing investments.

- Traceability: requirements-to-verification chains across mechanics, electronics, and software.

Wider Enterprise Context: ERP Gravity, Standards Consortia, and Industry Motifs

Even the strongest PLM vendors orbit around ERP gravity wells. SAP, under the visionary influence of Hasso Plattner, and Oracle, fortified by the Agile PLM acquisition, define how item masters, costing, and execution data must flow. That reality drives PLM to synchronize effectivity precisely and to respect ERP as the execution system of record. Standards consortia—ProSTEP iViP, AFNeT, ISO committees—carry the industry forward on AP242, JT openness, and LOTAR practices, while OSLC advances cross-tool traceability. These are not academic exercises: without them, multi-vendor toolchains and long-term retention collapse under their own brittleness. Meanwhile, industry motifs are converging. The digital thread links requirements, configurations, simulations, and as-built telemetry, while digital twin ambitions pull operational data into design decisions. Configuration-aware simulation aims to verify not just a design in the abstract, but the precise variant that will ship to a given region on a given date. MBSE promises that requirements flow naturally to verification and validation, reducing late surprises. Taken together, this wider context raises the bar: PLM can no longer be a better vault; it must be the enterprise nervous system that co-evolves with ERP, ALM, and MES without locking customers into brittle, one-way integrations.

- ERP–PLM gravity: execution vs. design authority lines drawn and respected.

- Standards: AP242, JT, LOTAR, and OSLC as preconditions for sustainable ecosystems.

- Industry motifs: digital thread/twin, configuration-aware simulation, and MBSE maturity.

Conclusion

From Geometry to the Enterprise Nervous System

The quiet revolution of PLM integration transformed CAD from a sophisticated drafting board into an enterprise nervous system. What began as vaulting geometry graduated into governing relationships among requirements, configurations, assemblies, routings, and service histories. The result is visibility: engineering can assess change impact beyond the part, manufacturing can plan variant-specific builds with confidence, quality can close the loop with CAPAs that reach back to design intent, and service can align as-maintained records with the as-designed baseline. This shift only works if data models treat the product as a living system, not a bundle of files, and if change objects carry context—who requested it, why it matters, what it affects, and when it takes effect. Integration is the mechanism, but the outcome is behavioral: decisions are made with traceability, governance is enforced without paralyzing speed, and the organization stops arguing about whose spreadsheet is correct. In this light, PLM is not a destination but an operating model: a continuous coordination engine that keeps requirements, design, manufacturing, quality, and service synchronized as reality changes under their feet.

- Elevation: from file control to lifecycle governance and cross-domain coherence.

- Outcome: shared context for faster, safer decisions across functions.

- Discipline: change processes that bind intent to effectivity and execution.

What Proved Decisive

Certain enablers separated successful PLM programs from perpetual pilot mode. Robust in-CAD adapters embedded lifecycle into daily work, eliminating shadow structures and broken dependencies. Scalable product structures—150% BOMs with clear options/effectivity semantics—preserved engineering intent while making manufacturing executable, with change objects driving controlled propagation. Visualization pipelines paired lightweight DMU with authoritative masters, giving stakeholders speed without sacrificing precision. Open standards—AP242 for geometry and PMI, JT for visualization, QIF for metrology—made multi-vendor flows and long-term retention tractable rather than wishful. On the platform side, secure, upgradeable APIs and event-driven patterns balanced customization against lifecycle cost, allowing enterprises to evolve rather than ossify. Finally, identity and policy enforcement (SSO, export control, audit trails) scaled collaboration without compromising IP. These are not optional extras; they are the backbone of any sustainable digital thread and the reason modern PLM can be both integrated and adaptable in the face of relentless product complexity and regulatory scrutiny.

- Adapters: lifecycle embedded where design actually happens.

- Structures: configuration engines that reconcile eBOM and mBOM at scale.

- Standards: interoperability and archiving that survive tool churn.

- Platforms: secure, observable, and upgradeable integration contracts.

Where It’s Heading

The next arc is already visible. Cloud-native CAD–PLM stacks will mainstream, shrinking deployment frictions and unlocking real-time collaboration that blurs the boundary between authoring and governance. Event-driven digital threads will amplify impact analysis, with graph-based configuration models mapping relationships that span mechanics, electronics, software, and service so change effects can be simulated before they are enacted. Richer semantic MBD—beyond graphic PMI—will link tolerances to inspection plans via QIF and flow into adaptive manufacturing instructions and automated conformance checks. Federation across ALM/PLM/ERP will tighten, tempered by policy-based access and data minimization so that suppliers collaborate at scale without IP leakage. AI will assist—not replace—engineering judgment, accelerating variant comparisons, suggesting change packages, and flagging downstream risks. The limiting factors will be governance, security, and standardization cadence; the accelerants will be open APIs, composable apps, and communities that turn best practices into packaged patterns. If the last era was about escaping file chaos, the next will be about making lifecycle intelligence ambient—available at every decision point, from requirements definition to service decommissioning.

- Cloud: elastic scale and continuous delivery for CAD–PLM.

- Graphs: configuration models that power impact analysis and variant reasoning.

- Semantics: MBD to metrology to manufacturing with executable intent.

- AI: assistive planning and traceability, bounded by rigorous governance.

Also in Design News

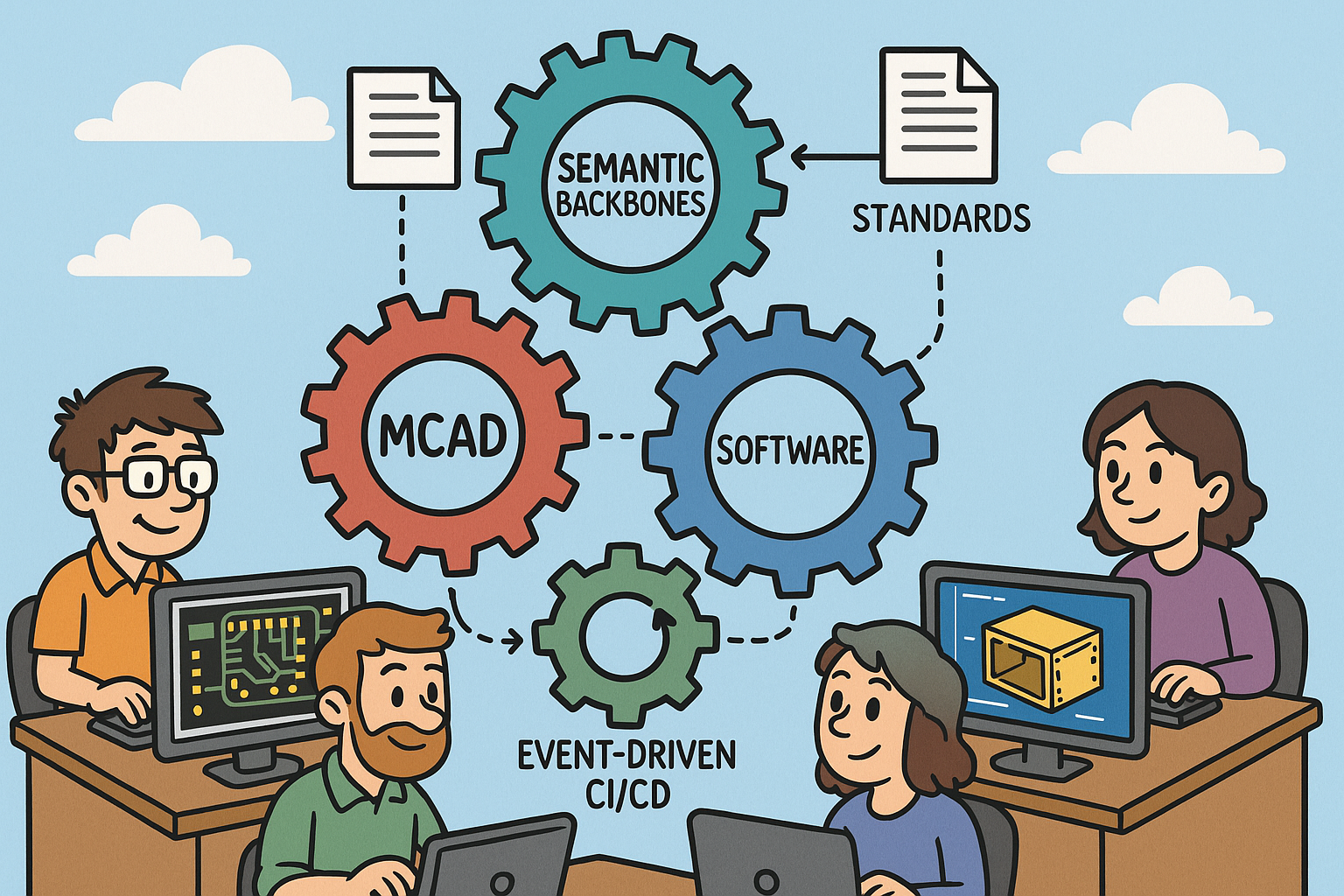

Integrated ECAD, MCAD, and Software: Semantic Backbones, Standards, and Event-Driven CI/CD

March 13, 2026 12 min read

Read More

Cinema 4D Tip: Voronoi Fracture Workflow for Art-Directable Breaks in Cinema 4D

March 13, 2026 2 min read

Read MoreSubscribe

Sign up to get the latest on sales, new releases and more …