Design Software History: From GD&T to Executable PMI: The Evolution of Tolerances in CAD, CAM, and Metrology

March 29, 2026 13 min read

A very short introduction

Purpose and scope

The story of tolerances in design software tracks the broader arc of digital engineering: from inked symbols on vellum to semantic PMI that drives machining, inspection, and release. Over roughly nine decades, geometric dimensioning and tolerancing (GD&T) has morphed from a drafting dialect into a computable language that platforms from Dassault Systèmes, Siemens, and PTC use to make, measure, and certify physical parts. This article follows that transformation with a focus on the enabling standards, the companies and people who industrialized them, and the algorithms that made it all practical in kernels and toolchains. It emphasizes three pivotal inflections: the formalization of positional tolerancing in mid‑century production; the migration from drawings to Model‑Based Definition (MBD); and the emergence of variation analysis and closed‑loop precision across CAD, CAM, and QA. Along the way, we will connect ASME Y14.5 and ISO GPS to STEP AP242, QIF, and the PMI‑aware CAM and metrology ecosystems that now operationalize this data. The goal is not nostalgia; it is to surface why tolerance semantics became the connective tissue of the modern digital thread, what still blocks interoperability and robustness, and where new research—probabilistic CAD and real‑time adaptive control—will push the next wave.

- Key premise: tolerances evolved from annotations to executable design intent.

- Key mechanism: standards and kernels converged on semantic, associative PMI.

- Key outcome: feedback‑rich loops now steer from CAD to certificate of conformance.

From paper tolerances to digital intent: origins and industry drivers

Pre‑history and GD&T foundations

Modern GD&T’s DNA traces to the 1930s–1950s, when British engineer Stanley Parker, working at the Royal Torpedo Factory in Alexandria, Scotland, articulated the “true position” concept, which expresses positional variation as a zone rather than as coordinate limits. That shift, from limits on linear coordinates to a geometric zone, answered the manufacturing reality of hole patterns assembled with clearance and fasteners. Parker’s ideas, diffused post‑war through Royal Navy and later automotive and aerospace practices, seeded what would become the geometric language for interchangeability. In the United States, the Department of Defense and primes such as General Motors, Ford, Boeing, and North American Aviation needed systematic control of variation across sprawling supplier bases. Committees that later consolidated into ASME Y14.5 codified datums, features of size, and modifiers like MMC/LMC to reflect assembly function. Practitioners and educators such as James D. Meadows, Don Day, and Alex Krulikowski popularized the rules through training and texts, accelerating industry uptake. From the 1960s through the 1990s, ASME Y14.5 (e.g., the 1982 and 1994 editions) and ISO 1101 (first published in 1983, with major 2004 and 2017 revisions) matured the language, aligning the symbology with a functional tolerancing philosophy. The two systems differ in their defaults: ISO 8015 makes independence the default principle, whereas ASME Y14.5’s Rule #1 applies the envelope principle to features of size. These standards reframed drawings from collections of dimensions to carriers of functionally defined geometry, setting the stage for computation.

- Foundational shift: from coordinate limits to tolerance zones and datums.

- Industrial driver: aerospace/automotive demand for interchangeability at scale.

- Pedagogy: widespread training by Meadows, Day, Krulikowski catalyzed consistency.
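
The practical gain from a zone over coordinate limits can be checked with a little arithmetic. The sketch below is illustrative only: the ±0.05 limit and the function names are assumptions, not values from any standard. It compares a square coordinate zone with the circular zone that passes through the square's corners.

```python
import math

def square_zone_ok(dx, dy, half_width):
    """Coordinate tolerancing: each axis deviation must stay within +/- half_width."""
    return abs(dx) <= half_width and abs(dy) <= half_width

def position_zone_ok(dx, dy, zone_diameter):
    """Positional tolerancing: the axis must lie inside a circular zone."""
    return 2.0 * math.hypot(dx, dy) <= zone_diameter

half_width = 0.05  # hypothetical +/- coordinate limit
zone_diameter = 2.0 * math.sqrt(2.0) * half_width  # circle through the square's corners

# Ratio of circular zone area to square zone area, minus one
area_gain = (math.pi * (zone_diameter / 2) ** 2) / ((2 * half_width) ** 2) - 1.0
print(f"zone diameter = {zone_diameter:.4f}, extra area = {area_gain:.0%}")

# A hole displaced 0.06 along x fails the coordinate limits but fits the circular zone
print(square_zone_ok(0.06, 0.0, half_width), position_zone_ok(0.06, 0.0, zone_diameter))
```

The circular zone accepts every part the square zone accepts, plus parts whose radial error is no worse than the square's corner case, which is the functional argument usually made for true position.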

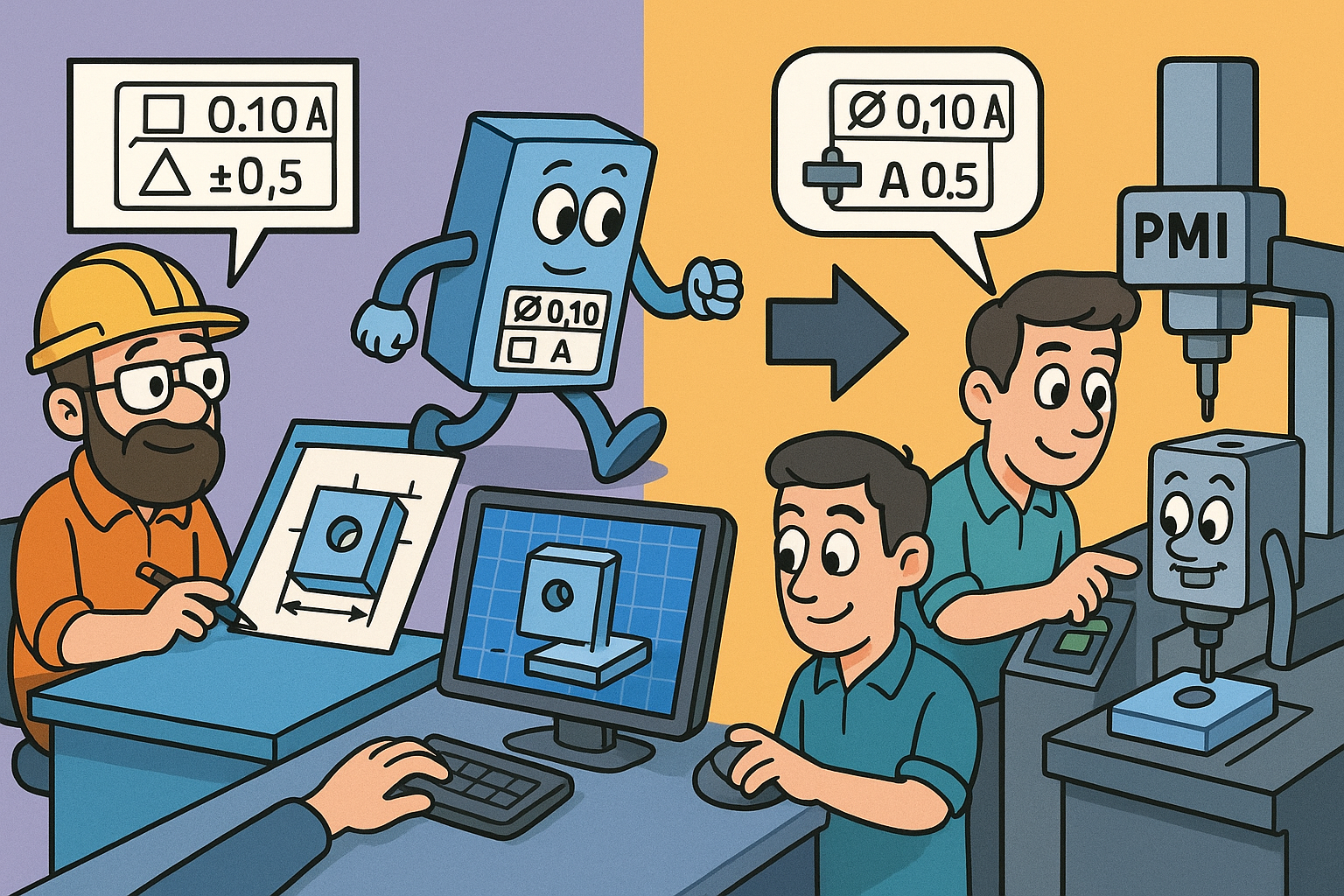

CAD arrives; tolerances leave the title block

As 3D CAD matured in the 1980s–1990s, with PTC Pro/ENGINEER (1988), Unigraphics (later Siemens NX), Dassault Systèmes CATIA, and IBM CADAM, the locus of design intent moved from 2D orthographic drafting to solid and surface models. Early workflows kept tolerances on the 2D drawing derived from the model: symbols were updated manually or through brittle links to model geometry. This was sufficient for print packages but constrained downstream automation: CAM programmers, CMM planners, and analysts often re‑interpreted tolerances, and that manual transcription introduced errors. In the late 1990s–2000s, two policy moves legitimized drawing‑less release. Boeing’s D6‑51991 specification (“Quality Assurance Standard for Digital Product Definition at Boeing Suppliers”) established requirements for model‑based product definition, including digital authority and supplier validation. The U.S. DoD’s MIL‑STD‑31000 (and later MIL‑STD‑31000A/B) defined Technical Data Packages with options for pure MBD, allowing the 3D model and its Product and Manufacturing Information (PMI) to serve as the contractually authoritative definition. These policies, paired with ASME Y14.41 and ISO 16792 on digital product definition practices, began moving tolerances off the title block and into the 3D model itself as associative PMI.

- Platforms: Pro/ENGINEER/Creo, Unigraphics/NX, CATIA V5/V6, CADAM set 3D norms.

- Policy: Boeing D6‑51991 and MIL‑STD‑31000B normalize drawingless release.

- Practice: ASME Y14.41 and ISO 16792 formalize digital authority and visualization rules.

Variation analysis becomes a software category

Once tolerances lived on models, engineers sought simulation tools that could propagate them through assemblies. This birthed a software niche in the 1990s: Variation Systems Analysis (VSA), later acquired into Siemens; CETOL 6σ by Sigmetrix (with early roots around Pro/ENGINEER ecosystems); and 3DCS by Dimensional Control Systems (DCS). These tools automate stack‑ups, datum scheme analysis, and assembly variation studies, weaving GD&T into kinematic joints and process sequences. They implement worst‑case, RSS, and Monte Carlo engines and provide graphical sensitivity to show which contributors dominate a key characteristic. Automotive OEMs like GM and Ford standardized on such platforms to close gaps between BIW (body‑in‑white) tolerances and assembly build variation; aerospace primes adopted them to ensure join‑up of large composite and metallic structures. Integration tightened: VSA and 3DCS embedded into CATIA and NX sessions; CETOL coupled closely with Creo. The category reframed tolerances from documentation to design variables with measurable risk, enabling allocations that balanced capability (Cp/Cpk), cost, and function. By turning PMI into parameters for simulation, variation analysis made the first credible business case for semantic tolerances: fewer surprises at build, faster root cause analysis, and traceable decisions linking drawings, models, and measurement.

- Core players: Siemens VSA, Sigmetrix CETOL 6σ, Dimensional Control Systems 3DCS.

- Method portfolio: worst‑case, RSS, Monte Carlo, kinematic constraints, datum mobility.

- Outcome: tolerances treated as tunable variables with quantified quality risk.

Encoding, transporting, and analyzing tolerances in CAD

Semantic PMI in major platforms

Semantic PMI means GD&T is not just text and leader lines; it is machine‑readable, associatively bound to faces, edges, and features with structure that downstream applications can query. Dassault Systèmes’ CATIA V5/V6 and the 3DEXPERIENCE platform, Siemens NX, PTC Creo, SolidWorks, and Autodesk Inventor all support associative PMI whose objects carry references to geometric topology and—in more advanced implementations—feature semantics (e.g., pattern instance, hole feature, slot). ASME Y14.41 (Digital Product Definition Data Practices) and ISO 16792 codify how PMI is displayed and governed in 3D, while ASME Y14.5.1 (Mathematical Definition of Dimensioning and Tolerancing Principles) and ISO GPS backbone standards (ISO 8015, 1101, 5459, 14405, 2692) provide the math and interpretation rules. Vendors exposed PMI APIs: NXOpen for NX PMI, 3DEXPERIENCE CAA APIs for FTA (Functional Tolerancing & Annotation), Creo’s J‑Link and Toolkit for MBD, and SOLIDWORKS MBD APIs. Robust PMI authoring also depends on persistent topology strategies so that face identifiers survive operations like blends or Booleans; otherwise, associativity collapses when models update. Leaders in this area implement name‑based or geometry‑based persistence layers with exact predicates to minimize churn in small features. Over the past decade, platform GUIs evolved from 2D note editors to tolerancing workbenches that enforce standard‑compliant syntax, datum scheme validation, and feature control frame composition, shifting authoring from art to governed practice and creating data that CAM, CMM, and simulation can consume without re‑typing.

- Standards scaffolding: ASME Y14.41, ISO 16792 for DPD; ASME Y14.5.1 and ISO GPS for math/interpretation.

- Platform reach: CATIA/3DEXPERIENCE FTA, Siemens NX PMI, PTC Creo MBD, SOLIDWORKS MBD, Autodesk Inventor.

- Technical hinge: persistent topology and feature semantics maintain associativity under change.
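
To make "machine‑readable and associatively bound" concrete, here is a minimal data‑structure sketch. Every class, field, and face ID in it is hypothetical; it does not mirror NXOpen, CAA, J‑Link, or any other vendor API. It only illustrates the idea of a feature control frame that references persistent face identifiers and can be queried or flagged as orphaned.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class FeatureControlFrame:
    characteristic: str   # e.g. "position", "flatness", "profile_surface"
    tolerance: float      # zone size in model units
    datums: tuple = ()    # ordered datum references, e.g. ("A", "B", "C")
    modifier: str = ""    # e.g. "MMC", "LMC", or "" for none
    face_ids: tuple = ()  # persistent IDs of the toleranced faces (hypothetical)

@dataclass
class PmiModel:
    frames: list = field(default_factory=list)

    def frames_on_face(self, face_id):
        """All tolerances attached to a given face."""
        return [f for f in self.frames if face_id in f.face_ids]

    def orphaned(self, live_face_ids):
        """Frames that reference at least one face missing after a model update."""
        live = set(live_face_ids)
        return [f for f in self.frames if not set(f.face_ids) <= live]

model = PmiModel([
    FeatureControlFrame("position", 0.1, ("A", "B", "C"), "MMC", ("face_17",)),
    FeatureControlFrame("flatness", 0.02, face_ids=("face_3",)),
])
print([f.characteristic for f in model.frames_on_face("face_17")])
# Suppose a remodel removed face_17: its position tolerance is now orphaned
print([f.characteristic for f in model.orphaned({"face_3"})])
```

The `orphaned` query is the toy version of the associativity problem described above: if face identifiers do not persist across edits, the annotation survives but no longer points at geometry.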

Interoperability and standards

Semantic PMI is only as useful as its portability. STEP AP242 (“Managed Model‑Based 3D Engineering”) added rich semantic PMI to the venerable STEP ecosystem, enabling exchange of tessellated or B‑rep geometry with linked tolerances, datum features, and presentation. JT (ISO 14306) also supports PMI for visualization‑centric interoperability within Siemens‑heavy enterprises. 3D PDF, typically via PRC or U3D, delivers human‑readable MBD to suppliers who lack native CAD, preserving visualization of PMI and view states. On the metrology side, the Digital Metrology Standards Consortium (DMSC) developed QIF (Quality Information Framework), an XML‑based standard that describes product definitions, measurement plans, results, and statistics—closing the loop from CAD to inspection and SPC. Vendors specialized in reliable transformations emerged: Capvidia invested deeply in AP242 and QIF pipelines, offering MBDVidia and CompareVidia for validation and semantic fidelity checks; ITI (International TechneGroup) expanded CADfix and Proficiency to handle PMI‑rich conversions; T‑Systems Enterprise Services provided enterprise‑scale JT/STEP solutions. NIST, through MBE PMI Validation and related test suites, pushed conformance by publishing canonical models with expected PMI semantics; this disciplined vendors to improve mapping coverage and error reporting. The result is a practical, if imperfect, supply chain grammar: AP242 carries authoritative product definition; JT or 3D PDF handles lightweight consumption; QIF orchestrates measurement and feedback. The remaining friction points center on nuances—pattern semantics, datum targets, GD&T modifiers—that still vary by vendor dialect, underscoring why formal conformance testing and transformation validation are critical investments.

- Authoritative exchange: STEP AP242 for semantic PMI; Edition 2 broadened coverage and configuration management.

- Lightweight visualization: JT with PMI and 3D PDF for broad consumption.

- Metrology thread: QIF (DMSC) for plans, results, and SPC interoperability.

- Validation: Capvidia, ITI/CADfix, T‑Systems, plus NIST test suites to raise semantic fidelity.

Core algorithms and methods

Under the hood, tolerance computation is geometry and probability. Feature/datum graph construction encodes functional relationships: nodes as features (holes, bosses, slots), edges as constraints (datums, joints), with weights representing tolerance zones and process capabilities. Tolerance zones are realized via offsets and Minkowski sums: e.g., a cylindrical positional zone is a radius‑offset of the true axis; flatness becomes an offset pair of parallel planes. Stack‑up engines offer worst‑case (algebraic sum of maxima), root sum square (RSS under independence), and Monte Carlo simulation (sampling from measured or assumed distributions). For assemblies, linearized sensitivity matrices approximate how small deviations propagate through kinematics; these Jacobians enable fast trade studies before committing to computationally expensive Monte Carlo across hundreds of contributors. Robustness tactics matter: interval arithmetic bounds uncertainty rigorously when distributions are unknown; exact predicates (à la Shewchuk) avoid topological flakiness when near‑coincident entities are compared; persistent topology schemes keep PMI attached across topological editing. In software like VSA, CETOL 6σ, and 3DCS, these methods surface as design‑for‑variation dashboards: red bars for dominant contributors, slider‑based sensitivity to datum re‑assignment, and side‑by‑side comparisons of MMC‑enabled tolerance relaxations versus process capability investments. Beyond engines, kernels must manage unit consistency, datum reference frame mobility (primary‑secondary‑tertiary), and ambiguous modifier combinations. Increasingly, platforms augment deterministic stacks with production data: importing Q‑DAS or QIF results to replace nominal process spreads with observed distributions, turning simulation into model‑calibrated prediction rather than assumption‑driven extrapolation.

- Geometric core: offsets and Minkowski sums to construct tolerance zones.

- Propagation: worst‑case, RSS, Monte Carlo, and linearized sensitivity matrices.

- Numerics: interval arithmetic, exact predicates, and persistent topology for robustness.
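
The three stack‑up methods named above can be compared on a one‑dimensional chain. This is a minimal sketch under stated assumptions: the tolerance values are invented, every contributor has unit sensitivity, and the Monte Carlo step assumes centered normal distributions with the tolerance equal to three standard deviations. Commercial tools handle 3D kinematics and measured distributions, which this does not attempt.

```python
import math
import random

def worst_case(tolerances):
    """Arithmetic sum of the absolute limits."""
    return sum(abs(t) for t in tolerances)

def rss(tolerances):
    """Root sum square, valid when contributors are independent."""
    return math.sqrt(sum(t * t for t in tolerances))

def monte_carlo(tolerances, limit, trials=100_000, seed=0):
    """Fraction of simulated stacks whose total deviation stays within +/- limit.
    Assumes each contributor is normal, centered, with tolerance = 3 sigma."""
    rng = random.Random(seed)
    inside = 0
    for _ in range(trials):
        total = sum(rng.gauss(0.0, t / 3.0) for t in tolerances)
        if abs(total) <= limit:
            inside += 1
    return inside / trials

tols = [0.10, 0.05, 0.05, 0.02]  # hypothetical +/- tolerances
wc, rs = worst_case(tols), rss(tols)
print(f"worst case +/-{wc:.3f}, RSS +/-{rs:.3f}")
print(f"simulated yield at the RSS limit: {monte_carlo(tols, rs):.4f}")
```

Under these assumptions the RSS limit corresponds to three standard deviations of the total, so the simulated yield should land close to 99.7 percent, while the worst‑case limit is noticeably wider. That gap is the allocation headroom variation analysis tools try to quantify.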

Tolerance‑aware CAM and the rise of closed‑loop precision

From PMI to machining decisions

For decades, CAM programmers manually interpreted drawings to pick strategies, tools, stepover, and “stock to leave.” With semantic PMI, that interpretation can be automated. Siemens NX Tolerance‑Based Machining (TBM) and Dassault DELMIA’s Tolerance‑Based Machining read GD&T to map faces into machining features and assign strategies: tighter profile tolerances drive smaller stepovers, different scallop heights, and extra rest‑finishing passes; location tolerances influence datum setups and probing cycles; surface finish callouts bias tool selection and cutting parameters. Feature‑based CAM systems—Mastercam, OPEN MIND hyperMILL, Autodesk Fusion 360, and Hexagon ESPRIT—are increasingly PMI‑aware, using hole callouts and geometric controls to select drills versus thread mills, enable helical entries, or reserve material for post‑machining datum alignment. The payoff is twofold: first, reduction in programming variability and rework; second, creation of a digital provenance from PMI to NC code that can be audited. In practice, TBM frameworks build rulesets: “If profile ≤ X and material = Y, then stepover = f(Ra target); if position ≤ Z at MMC, then include on‑machine probing and compensation.” OEMs encode playbooks once and propagate across part families, finally letting the tolerance budget steer cutter path economics in a repeatable, reviewable way.

- Associative mapping: PMI‑to‑feature recognition drives strategy, tool, and stepover.

- Process rules: tolerance thresholds trigger probing, extra passes, or alternate tools.

- Governance: digital trace from PMI to NC for audit and reuse.
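
A rule of the kind quoted above can be sketched with the standard scallop‑height relation for a ball‑end mill on a flat surface, h = R − sqrt(R² − (s/2)²), solved for the stepover s. The rule itself (spending a fixed share of the profile tolerance on scallop height, and probing below a threshold) is a hypothetical illustration, not the logic of any particular CAM product; `scallop_share` and `probe_below` are made‑up parameters.

```python
import math

def stepover_for_scallop(tool_radius, scallop_height):
    """Stepover on a flat surface for a ball-end mill: s = 2*sqrt(h*(2R - h))."""
    if not 0 < scallop_height < tool_radius:
        raise ValueError("scallop height must be between 0 and the tool radius")
    return 2.0 * math.sqrt(scallop_height * (2.0 * tool_radius - scallop_height))

def plan_finish_pass(profile_tol, tool_radius, scallop_share=0.1, probe_below=0.05):
    """Hypothetical rule: spend a share of the profile tolerance on scallop height
    and request on-machine probing when the tolerance is tight."""
    scallop = scallop_share * profile_tol
    return {
        "stepover": stepover_for_scallop(tool_radius, scallop),
        "probe": profile_tol <= probe_below,
    }

for tol in (0.2, 0.05):
    plan = plan_finish_pass(profile_tol=tol, tool_radius=3.0)
    print(tol, round(plan["stepover"], 3), plan["probe"])
```

Tightening the profile tolerance shrinks the stepover roughly with the square root of the allowed scallop, which is why tight profile callouts are expensive in cycle time.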

Machine, tool, and process compensation

Even perfect toolpaths meet imperfect machines, tools, and environments. Controller ecosystems—FANUC, Siemens SINUMERIK, and Heidenhain—provide a suite of compensations that make tolerance‑aware CAM actionable on the shop floor. Classical cutter compensation (G41/G42) is table stakes; more advanced features include Tool Center Point (TCP) control for 5‑axis accuracy, kinematic calibration of rotary axes, and volumetric error maps that compensate for geometric link errors across the workspace. Renishaw’s calibration stack (e.g., QC20‑W ballbar for circularity checks; XL‑80 laser for linear positioning errors; XM‑60 multi‑axis system) and Blum‑Novotest solutions quantify machine behavior; controllers then ingest these measurements to update parameters or tables. Thermal models and tool wear tracking (via sister tooling or in‑process probing) feed offsets so that drifting processes stay inside tolerance zones without scrapping parts. CAM rules can emit NC code that toggles high‑precision modes only when PMI requires it, balancing cycle time and quality. The emergence of “digital twins of the machine tool,” e.g., Siemens’ SINUMERIK Integrate or Heidenhain’s Digital Shop Floor, helps planners validate that programmed compensations, kinematics, and probing cycles achieve the targeted geometric controls before chips fly, tightening the closed loop from PMI to in‑cut behavior.

- Controller features: G41/G42, TCP, RTCP, kinematic calibration, volumetric error compensation.

- Calibration: Renishaw QC20‑W, XL‑80/XM‑60; Blum‑Novotest laser and probe systems.

- Process models: thermal drift and tool wear offsets invoked by tolerance thresholds.
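
As a rough illustration of a tolerance threshold invoking a process model, the sketch below uses the linear expansion relation dL = α·L·dT. The expansion coefficient is an assumed typical value for steel, and the rule that compensation is triggered when predicted growth exceeds a share of the tolerance is hypothetical; real controllers use calibrated, multi‑sensor thermal models.

```python
def thermal_growth(length_mm, delta_t_k, alpha_per_k=11.5e-6):
    """Linear expansion dL = alpha * L * dT (alpha is an assumed value for steel)."""
    return alpha_per_k * length_mm * delta_t_k

def needs_compensation(length_mm, delta_t_k, tolerance_mm, budget_share=0.1):
    """Hypothetical rule: compensate when growth exceeds a share of the tolerance."""
    return abs(thermal_growth(length_mm, delta_t_k)) > budget_share * tolerance_mm

growth = thermal_growth(length_mm=500.0, delta_t_k=3.0)
print(f"growth = {growth * 1000:.2f} um")
print(needs_compensation(500.0, 3.0, tolerance_mm=0.05))  # tight tolerance
print(needs_compensation(500.0, 3.0, tolerance_mm=0.5))   # loose tolerance
```

The same 3 K drift matters for the tight feature and is negligible for the loose one, which is the case for switching compensation on only where the PMI calls for it.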

In‑process measurement and feedback

On‑machine probing transformed inspection from a post‑hoc gate to an in‑cycle control. Renishaw OMP and RMP families (optical and radio transmission) enable automatic workpiece location, datum setting, and feature measurement; results are compared against PMI‑derived tolerances, and macro logic in the posted NC program branches to re‑machine, adjust wear offsets, or flag operator intervention. Inspection software, including Hexagon PC‑DMIS, InnovMetric PolyWorks|Inspector, Metrologic Group’s Metrolog X4, and Zeiss Calypso, consumes PMI (native or AP242) to generate measurement programs, reducing programming effort and ensuring that the measured features and evaluators match design intent. Increasingly, QIF‑based workflows capture the plan (QIF Plans), execution (QIF Results), and statistics (QIF Statistics), closing the information loop and enabling traceable nonconformance reports. SPC systems like Q‑DAS (qs‑STAT) and Mitutoyo MeasurLink aggregate capability data (Cp, Cpk), which PLM backbones such as Siemens Teamcenter and Dassault 3DEXPERIENCE can feed upstream to design and variation analysis. In this feedback loop, tolerances become measurable commitments, not theoretical bounds: when Cpk erodes, engineers adjust either process parameters (feeds/speeds, compensation) or the tolerance allocation itself, captured in change management with digital signatures. The effect is a living tolerance model governed by data, not an archival note gathering dust.

- Probing loop: measure against semantic PMI, then auto‑correct or re‑cut.

- Inspection automation: PC‑DMIS, PolyWorks|Inspector, Metrolog X4, Calypso generate programs from PMI.

- SPC and thread: QIF + Q‑DAS unify plans, results, and capability back into PLM.
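
The capability indices mentioned above follow the usual definitions, Cp = (USL − LSL) / 6σ and Cpk = min(USL − μ, μ − LSL) / 3σ. The sketch below computes both from a handful of invented measurements; the sample values and specification limits are hypothetical, and a real SPC system would also check normality and subgroup structure.

```python
import statistics

def capability(samples, lsl, usl):
    """Return (Cp, Cpk) using the sample mean and sample standard deviation."""
    mean = statistics.fmean(samples)
    sigma = statistics.stdev(samples)
    cp = (usl - lsl) / (6.0 * sigma)
    cpk = min(usl - mean, mean - lsl) / (3.0 * sigma)
    return cp, cpk

# Hypothetical diameters for a 10.00 +/- 0.05 feature
measured = [10.01, 10.03, 9.99, 10.02, 10.04, 10.00, 10.02, 10.03]
cp, cpk = capability(measured, lsl=9.95, usl=10.05)
print(f"Cp = {cp:.2f}, Cpk = {cpk:.2f}")
```

Cpk falls below Cp whenever the process mean is off center, so a gap between the two points to an offset correction, while a low Cp points to spread that only a process change or a tolerance reallocation can address.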

Additive and hybrid realities

Additive manufacturing (AM) stresses conventional tolerance models. Thermal gradients, scan strategies, and support topologies introduce build distortions and shrinkage that must be predicted and compensated. Materialise Magics, Autodesk Netfabb, and EOS build preparation toolchains implement distortion compensation, pre‑deforming input geometry so that the printed part relaxes into tolerance; scan‑to‑compare workflows evaluate in‑process or post‑build 3D scans against PMI zones to steer adaptive contouring or targeted machining. Lattices and internal channels complicate verification: surface‑based GD&T struggles when functional geometry is volumetric or inaccessible. Here, emerging approaches couple PMI with process‑based quality signatures (melt pool monitoring, acoustic emission) to infer conformity. Hybrid machines that combine directed energy deposition (DED) or powder bed fusion with milling, such as the DMG MORI LASERTEC 65 3D hybrid, require bidirectional tolerance handoffs: additive stages build near‑net with allowances encoded from PMI; subtractive stages reference datums and finish to profile and location. CAM must juggle work coordinate systems, update stock models from in‑process scans, and select finishing cutters aligned with the tolerance budget. STEP AP242 and QIF are expanding to represent AM‑specific intent (build orientation, support regions) and inspection, but semantic completeness is still evolving. Nonetheless, the same pattern holds: tolerances are becoming executable constraints across hybrids of additive and conventional manufacturing (AM/CM), not static annotations.

- Compensation: pre‑deformation and scan‑to‑compare feedback align AM parts to tolerance.

- Verification: internal lattices push beyond surface‑only GD&T; monitoring augments inspection.

- Hybrid flow: additive allowances and subtractive finishing guided by one tolerance model.
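
The pre‑deformation idea can be sketched as a first‑order correction: shift each nominal point opposite to the deviation observed on a scanned build. This is a simplified illustration, not how Magics, Netfabb, or EOS tools work internally. It assumes the scan is already aligned and in point‑to‑point correspondence with the nominal geometry, and that distortion repeats from build to build; the `gain` parameter is hypothetical.

```python
def compensate(nominal, scanned, gain=1.0):
    """Move each nominal point opposite to its observed deviation.
    nominal, scanned: lists of (x, y, z) tuples in point-to-point correspondence."""
    if len(nominal) != len(scanned):
        raise ValueError("point sets must correspond one-to-one")
    return [
        tuple(n - gain * (s - n) for n, s in zip(p_nom, p_scan))
        for p_nom, p_scan in zip(nominal, scanned)
    ]

nominal = [(0.0, 0.0, 10.0), (5.0, 0.0, 10.0)]
scanned = [(0.0, 0.0, 9.9), (5.1, 0.0, 9.8)]  # hypothetical scan of a first build
print(compensate(nominal, scanned))
```

In practice the correction is usually iterated or driven by simulation, because changing the input geometry also changes the thermal history that caused the distortion.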

Conclusion

What changed

The most important shift is conceptual: tolerances left the realm of documentation and entered computation. A drawing symbol once told a machinist “be careful here.” Today, that same requirement exists as semantic PMI bound to faces, transported via STEP AP242, consumed by CAM to pick strategies, invoked by controllers to enable compensation, and tested by metrology software that generates programs directly from the design. SPC systems then numerically score process capability and push that signal back into design and variation analysis. The chain is not merely digital; it is feedback‑rich. This re‑casts tolerances as economic levers: tighten here and pay with cycle time and tool wear; relax there and reclaim yield. Toolmakers, software firms, and standards bodies collectively engineered this evolution: Dassault, Siemens, PTC, Autodesk, Hexagon, Zeiss, Renishaw, Capvidia, ITI, DMSC, and NIST each supplied building blocks. What was once a title‑block afterthought is now a first‑class driver of decisions from concept to certificate of conformance, measurable at every hop. The organization that internalizes this fact treats tolerances as code: authored with syntax, checked with tests, versioned under change control, and executed on machines.

- From note to executable constraint spanning CAD, CAM, QA.

- From one‑way release to closed‑loop governance with SPC and variation analysis.

- From tacit knowledge to codified playbooks embedded in software and controllers.

What’s hard

Several stubborn problems remain. First, semantic completeness across vendors is not solved: PMI dialects diverge on edge cases (pattern semantics, associativity under feature suppression, datum target behaviors, and modifier nuances), leading to silent downgrades during translation. Even inside a single platform, persistent topology under heavy remodeling can break links, orphaning PMI and undermining trust. Second, variation models are still too deterministic. Worst‑case and RSS are useful, Monte Carlo is better, but few kernels natively represent process variation probabilistically in geometry operations; linearizations fail when assemblies explore large motions or contact transitions. Third, scalable adoption for SMEs is difficult: building rulesets for tolerance‑based machining, configuring QIF workflows, and investing in calibration metrology exceed the budgets and skills of many small shops. Fourth, validation is under‑recognized work: without routine AP242/QIF conformance checks (Capvidia‑style comparisons and NIST test suites), organizations accumulate technical debt in silent data errors. Finally, hybrid AM/CM complicates everything: measurement access, internal features, and evolving AM semantics stretch the classical GD&T toolbox; standards bodies and vendors are racing to keep pace without fracturing the language.

- Interoperability gaps: edge‑case PMI semantics and associativity under change.

- Modeling limits: weak probabilistic support in geometry kernels and simulation.

- Adoption friction: ruleset authoring, calibration culture, and validation discipline.

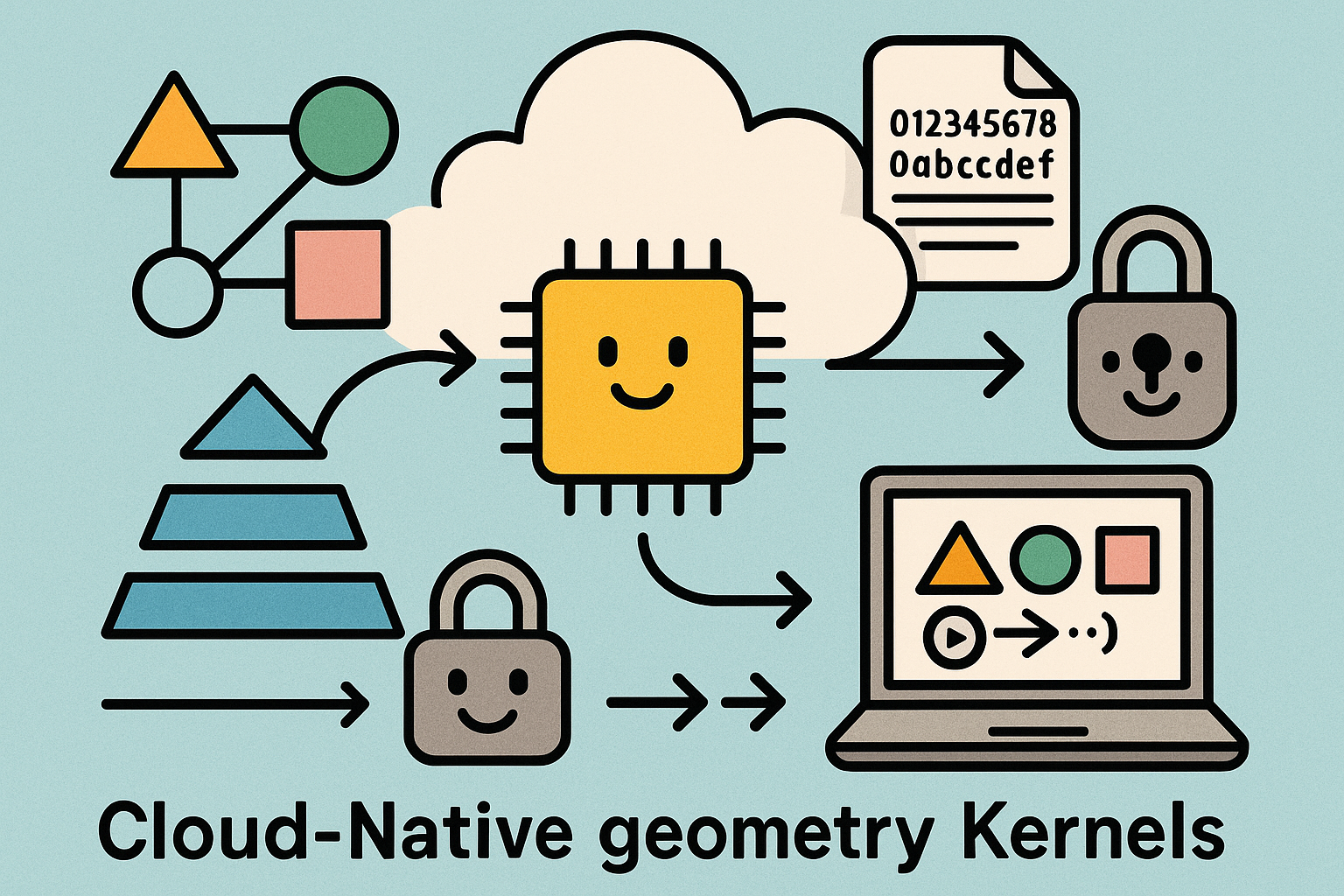

What’s next

The near horizon is pragmatic: tighter AP242 Edition 2+ and QIF conformance across major CAD, CAM, and metrology platforms, aided by richer NIST test suites and automated validation in PLM pipelines. Expect PMI quality gates to become as routine as CAD check‑in. In execution, real‑time ML‑driven adaptive control will migrate from demos to production: learning controllers will fuse probe data, spindle load, thermal models, and vibration signatures to nudge feeds, speeds, and tool paths to protect tolerance while minimizing cycle time. On the design side, research in probabilistic CAD will take shape: designers will specify not just nominal geometry and tolerances but desired confidence in function; kernels will propagate distributions, not just intervals, through operations and assemblies. For manufacturing mixes, unified tolerance strategies will span hybrid AM/CM, encoding allowances, inspection access, and post‑processing as first‑class intent. And for SMEs, lighter footprints—cloud‑hosted variation analysis, template PMI checkers, and wizard‑driven TBM—will lower barriers. The destination is a fully closed‑loop, tolerance‑aware digital thread where every agent—designer, programmer, operator, inspector, auditor—sees the same computable intent, and every decision leaves a measurable trace back to the original requirement.

- Standardization: broadened AP242/QIF fidelity and automated conformance gates.

- Execution: ML‑assisted adaptive machining driven by in‑cycle sensing.

- Design: probabilistic CAD for uncertainty‑aware decisions.

- Manufacturing: unified tolerance playbooks for AM/CM hybrids and SMEs.
