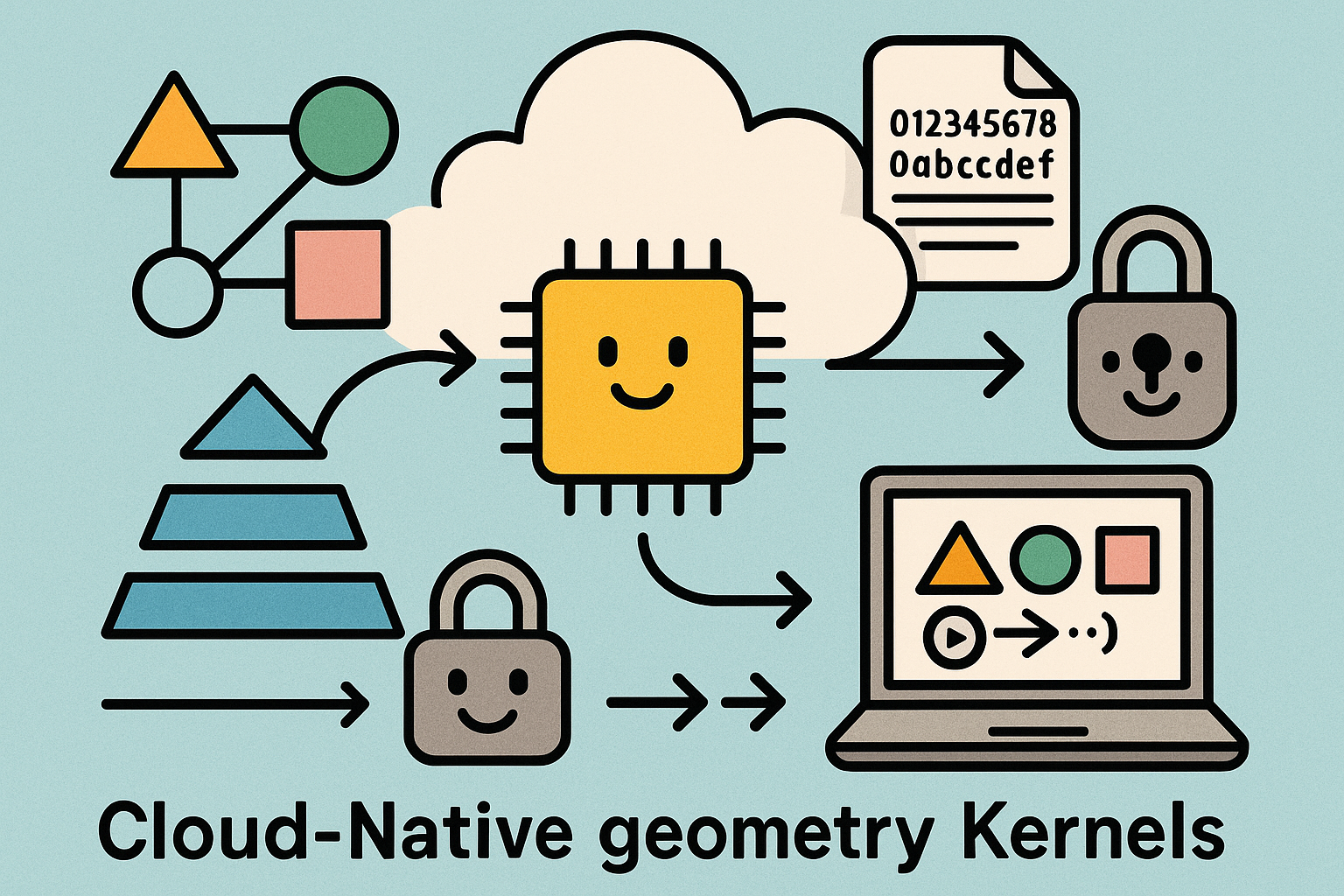

Cloud‑Native Geometry Kernels: Content‑Addressable Shape Graphs, Determinism, and Progressive Streaming APIs

March 29, 2026 12 min read

Opening context for cloud‑native geometry kernels

Why this moment matters

Cloud platforms have matured to the point where geometry computation can finally behave like any other large‑scale data service: elastic, observable, and reproducible. Yet geometric kernels bring unique constraints—exactness at decision boundaries, topology invariants, and multi‑representation interoperability—that do not neatly map to generic microservice patterns. The opportunity is to treat **cloud‑native geometry kernels** as streamed computation graphs, not monoliths hidden behind a procedural API. This shift moves value from single‑workstation determinism to fleet‑level determinism, where compute, storage, and observability are designed as one system. The practical payoff is significant: scalable Booleans on city‑scale models, progressive product visualization, and reproducible engineering computation that can cross devices and teams without corrupting tolerances or intent. The following sections outline a blueprint: how to architect services that preserve referential integrity, how to scale algorithms across heterogeneous compute, and how to keep numerical robustness uncompromised while still delivering throughput. The end goal is modest but powerful—encode tolerances and exactness boundaries in the API surface so downstream applications can reason about results, and make determinism and streaming first‑class features rather than documentation footnotes.

What “minimum introduction” implies for practitioners

Practitioners do not need abstract promises; they need building blocks that cleanly separate evaluation, topology, meshing, interrogation, and healing, without losing the semantic links that make models meaningful over time. The architecture should allow one team to ship a new filleting operator without destabilizing meshing semantics, and should allow visualization to start before topology is finalized. If there is a single mental model to carry forward, it is this: encapsulate geometry as a **content‑addressable shape graph** with immutable history, then let specialized services transform that graph under strict contracts for tolerances, determinism, and streaming. Everything else—autoscaling, heterogeneous compute, and progressive delivery—flows naturally from that premise.

Architectural foundations for cloud‑native geometry kernels

Service decomposition and persistent shape graphs

A durable architecture begins by decomposing the kernel into narrowly scoped services that each transform or evaluate the shape graph under explicit contracts. At a minimum, separate services handle: curve and surface evaluation; topology operations such as Booleans, offsets, and fillets; meshing and tessellation; interrogation for mass properties and feature queries; and validation and healing. Each service remains stateless with respect to compute and uses a persistent, content‑addressable store to read and write geometry. By encoding geometry as **content‑addressable “shape graphs”**—where nodes are canonical definitions (e.g., trimmed NURBS faces, edges with parameter ranges) and edges record topological relationships—you gain immutable history, deduplication, and referential integrity across microservices. This store functions like a Git for shapes: a node hash becomes a stable, shareable reference for downstream services. Stateless compute instances only hold ephemeral working sets and rely on the store for both inputs and results, which simplifies autoscaling and fault tolerance. To make this practical, attach deterministic metadata to every transformation: input references, applied operator version, tolerances used, and any non‑deterministic fallbacks. Doing so allows replay, caching, and reproducible diffs. The core advantages are clear: small, replaceable services; reproducible transformations; and the freedom to optimize each stage independently without breaking the graph’s integrity.

- Keep compute stateless; keep geometry persistent and content‑addressed.

- Use immutable nodes plus explicit transformation lineage to enable caching and diffs.

- Model microservice boundaries along evaluation, topology, tessellation, interrogation, and healing.
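
As a rough illustration of the "Git for shapes" idea, the sketch below hashes each node from a canonical JSON form and stores it under that hash. The `ShapeStore` class, the `node_hash` helper, and the payload fields are hypothetical names chosen for this example, and an in-memory dict stands in for a persistent store.

```python
from __future__ import annotations

import hashlib
import json


def node_hash(kind: str, payload: dict, children: list[str]) -> str:
    """Hash a node from its canonical JSON form (sorted keys, no whitespace)."""
    canonical = json.dumps(
        {"kind": kind, "payload": payload, "children": children},
        sort_keys=True,
        separators=(",", ":"),
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class ShapeStore:
    """In-memory stand-in for a persistent content-addressable store (assumption)."""

    def __init__(self) -> None:
        self._nodes: dict[str, dict] = {}

    def put(self, kind: str, payload: dict, children: list[str] | None = None) -> str:
        children = list(children or [])
        key = node_hash(kind, payload, children)
        # Identical content maps to the same key, so duplicates are stored once.
        self._nodes.setdefault(
            key, {"kind": kind, "payload": payload, "children": children}
        )
        return key

    def get(self, key: str) -> dict:
        return self._nodes[key]

    def __len__(self) -> int:
        return len(self._nodes)


store = ShapeStore()
edge_a = store.put("edge", {"curve": "line", "t_range": [0.0, 1.0]})
# Same content with a different key order hashes to the same node.
edge_b = store.put("edge", {"t_range": [0.0, 1.0], "curve": "line"})
face = store.put("face", {"surface": "plane"}, children=[edge_a])
# Lineage record: input reference, operator version, and tolerance used.
fillet = store.put(
    "transform",
    {"operator": "fillet", "operator_version": "1.2.0", "tolerance": 1e-6},
    children=[face],
)
```

Because a parent's hash covers its children's hashes, any change to an edge yields a new face key, which is what makes diffs and cache lookups cheap. A production store would also need a canonical float encoding and an ordering rule for children, which this sketch leaves to the caller.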

Portable data representations and first‑class topology

The representation that “travels well” between services and devices is a trimmed NURBS B‑Rep, because it captures industrial CAD intent and allows exact parametric re‑evaluation. Make that the spine of the shape graph. Around it, attach optional layers: implicit fields or signed distance functions for robust CSG and offsetting, and voxel or sparse‑grid representations for large‑scale sampling, rasterization, and physics coupling. Crucially, treat both **manifold and non‑manifold topologies** as first‑class citizens to support sheet metal, weldments, lattice structures, and feature editing workflows. The data contracts should define ownership of parameters (e.g., surface domains, trimming loop parameterizations, knot vectors) and normalize units at ingest. When using hybrids—NURBS plus SDF or voxels—define conversion boundaries explicitly: for example, prefer arrangement‑based Booleans in param space for analytic surfaces, but switch to SDF blending for fillets on freeform intersections where robustness dominates. Finally, design the B‑Rep to carry tolerances and semantic tags (feature IDs, design intent markers, manufacturing notes), because these tags inform downstream canonicalization, meshing resolution, and interrogation strategies. In effect, the representation becomes a layered record where exactness and approximations co‑exist under stated contracts, letting you switch compute modes without losing fidelity or intent.

- Use trimmed NURBS B‑Rep as the backbone; attach implicit and voxel layers as optional views.

- Normalize units, encode tolerances, and preserve feature semantics on ingest.

- Elevate non‑manifold support to a contract to avoid lossy workarounds.
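
One way to picture the ingest contract is a small record that carries units, tolerances, and tags together. The sketch below is an assumption-heavy toy: the canonical unit (metres), the `Tolerances` and `IngestedModel` types, and the `normalize` function are all invented for illustration.

```python
from dataclasses import dataclass

# Scale factors to metres; choosing metres as the canonical unit is an assumption.
TO_METRES = {"m": 1.0, "cm": 1e-2, "mm": 1e-3, "in": 0.0254}


@dataclass(frozen=True)
class Tolerances:
    absolute: float  # a length, so it scales with the unit
    relative: float  # dimensionless, so unit changes leave it untouched


@dataclass(frozen=True)
class IngestedModel:
    unit: str
    points: tuple
    tolerances: Tolerances
    tags: tuple  # semantic tags such as feature IDs travel with the geometry


def normalize(points, unit, tol, tags=()):
    """Convert coordinates and the absolute tolerance to the canonical unit."""
    scale = TO_METRES[unit]
    scaled = tuple(tuple(c * scale for c in p) for p in points)
    return IngestedModel(
        unit="m",
        points=scaled,
        tolerances=Tolerances(tol.absolute * scale, tol.relative),
        tags=tuple(tags),
    )


model = normalize(
    [(1000.0, 0.0, 0.0)], "mm", Tolerances(0.01, 1e-9), tags=["feature:hole-1"]
)
```

The point of the sketch is only that the absolute tolerance is rescaled with the coordinates while the relative tolerance and the tags pass through unchanged.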

APIs that preserve intent, with streaming built in

An API that preserves modeling intent must be idempotent, canonicalize inputs, and version both operators and tolerance semantics. Every operator call should declare its semantic version and emit a deterministic hash of outputs when feasible—salted by explicit tolerances—so caches and downstream services can rely on stable identities. Define **canonicalization rules** for ordering of edges, loop orientations, and parameter ranges to reduce superficial diffs. Make operations idempotent by detecting and returning prior results given the same inputs and contract. For long‑running transforms, return resumable tickets; support gRPC or HTTP chunked responses for progressive tessellation so visualization can start immediately with coarse results. Complement this with push‑based notifications and dependency tracking: when an upstream face is updated, send invalidation or refresh signals to dependent caches (meshes, mass properties), alongside new tickets for re‑computation. This explicit streaming stance converts latency into staged value delivery: quick previews, followed by refined topology and watertight meshes. Importantly, enforce **semantic versioning** not just for API signatures but also for tolerance behavior—changes to snap rounding or trimming thresholds should bump operator versions so reproducibility and audits remain trustworthy across the fleet.

- Idempotent operations with deterministic hashing guard caches from drift.

- Chunked streaming enables progressive tessellation and early visualization.

- Push‑based invalidation keeps dependent caches coherent as geometry evolves.
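
The canonicalization and idempotency rules above can be sketched as a request key that ignores superficial differences but changes whenever the operator version or tolerance changes. The function names, the `trim` operator, and the choice to treat loop order as meaningless are assumptions of this toy model, not a description of any particular kernel.

```python
import hashlib
import json


def canonical_loop(edge_ids):
    """Rotate a closed loop to start at its smallest edge id, keeping direction."""
    edge_ids = list(edge_ids)
    start = edge_ids.index(min(edge_ids))
    return edge_ids[start:] + edge_ids[:start]


def request_key(operator, version, tolerance, loops):
    """Deterministic key over operator, semantic version, tolerance, and inputs."""
    body = {
        "operator": operator,
        "version": version,
        # repr() of a float round-trips exactly, so equal tolerances hash equally.
        "tolerance": repr(float(tolerance)),
        # Assumption: loop order carries no meaning in this toy model.
        "loops": sorted(canonical_loop(loop) for loop in loops),
    }
    blob = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(blob.encode("utf-8")).hexdigest()


_results = {}
calls = {"count": 0}


def run_operator(operator, version, tolerance, loops, compute):
    """Idempotent call: the same contract and inputs return the stored result."""
    key = request_key(operator, version, tolerance, loops)
    if key not in _results:
        calls["count"] += 1
        _results[key] = compute(loops)
    return key, _results[key]


def count_loops(loops):
    return {"loops": len(loops)}


# The second call differs only in where the loop starts, so it hits the cache.
key_a, out_a = run_operator("trim", "2.0.0", 1e-6, [["e3", "e1", "e2"]], count_loops)
key_b, out_b = run_operator("trim", "2.0.0", 1e-6, [["e1", "e2", "e3"]], count_loops)
```

Bumping the version string or the tolerance produces a different key, so a change in tolerance behavior cannot silently reuse results computed under the old contract.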

Performance and scalability patterns

Algorithmic scaling and adaptive pipelines

Performance begins with algorithmic choices that respect geometry’s spatial structure. Index curves, surfaces, and faces with spatial partitioning such as bounding volume hierarchies (for example AABB trees) or k‑d trees, tuned per operator. For Booleans, arrangement‑based methods with conflict‑directed subdivision limit expensive intersection tests to localized regions, improving both robustness and throughput. For surface operations such as trimming, offsets, and projections, use parameter‑space tiling and adaptive refinement based on curvature and local feature scale, which avoids global over‑refinement. In visualization and interrogation pipelines, adopt **adaptive LOD**: generate coarse meshes first, then refine on demand near silhouettes, tight curvature, or user‑selected features. Combine param‑space and object‑space error metrics so tessellation remains watertight across shared parameterizations. Across the stack, aim for a monotonic progression: coarse‑to‑fine outputs that never regress in validity. This allows latency hiding at the product level, where previews are immediately usable and progressively enriched. Finally, couple these strategies with precomputed adjacency (face‑edge‑vertex neighborhoods) and compact structure‑of‑arrays (SoA) data layouts for hot loops; together they reduce pointer chasing and branch mispredictions and improve cache locality during intersection and tessellation, which are often the dominant costs in large assemblies.

- Build per‑operator spatial indices; prefer arrangement‑based Booleans with subdivision.

- Use param‑space tiling for trimming/offsets and adaptive LOD for tessellation.

- Guarantee monotonic refinement: each stage increases fidelity without breaking validity.
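
A minimal sketch of coarse‑to‑fine refinement, using a parametric curve rather than a surface to keep it short. Each level subdivides only the intervals whose midpoint deviates from the chord by more than the current tolerance, and every level keeps all samples from the previous one. The midpoint test is a simple heuristic chosen for this example (it can miss deviation on curves with inflections), and the function names are hypothetical.

```python
import math


def circle(t):
    return (math.cos(t), math.sin(t))


def midpoint_error(curve, t0, t1):
    """Distance between the curve at the mid-parameter and the chord midpoint."""
    (x0, y0), (x1, y1) = curve(t0), curve(t1)
    xm, ym = curve(0.5 * (t0 + t1))
    return math.hypot(xm - 0.5 * (x0 + x1), ym - 0.5 * (y0 + y1))


def refine(curve, params, tol):
    """Split every interval whose midpoint error exceeds tol until none does."""
    out = [params[0]]
    # Reverse so that pop() visits intervals from left to right.
    stack = list(zip(params[:-1], params[1:]))[::-1]
    while stack:
        t0, t1 = stack.pop()
        if midpoint_error(curve, t0, t1) > tol:
            tm = 0.5 * (t0 + t1)
            stack.append((tm, t1))
            stack.append((t0, tm))
        else:
            out.append(t1)
    return out


def progressive_levels(curve, t_start, t_end, tolerances):
    """Yield (tol, params) coarse to fine; each level keeps all earlier samples."""
    params = [t_start, t_end]
    for tol in tolerances:
        params = refine(curve, params, tol)
        yield tol, params


levels = list(progressive_levels(circle, 0.0, math.pi / 2, [1e-1, 1e-2, 1e-3]))
```

Because the generator yields each level as soon as it is ready, a client can draw the coarse polyline immediately and swap in finer ones as they arrive, without any earlier vertex moving.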

Heterogeneous compute and vectorization

Modern kernels should opportunistically target CPU, GPU, and portable SIMD to capture performance without sacrificing coverage. On CPUs, implement AVX‑512, NEON, and SVE vector paths for curve evaluation, interval arithmetic, and ray queries; fall back to scalar with identical rounding semantics. For GPUs, deploy CUDA, HIP, or Vulkan compute kernels for tessellation, ray casting, broad‑phase collision, and voxelization. Design kernels to operate over SoA buffers and compact index streams so they saturate memory bandwidth and minimize divergence. For portability and security, maintain **WASM‑SIMD** fallbacks that run in sandboxed contexts, which suits plugin ecosystems and client‑side previews. Critical to this approach is numerical alignment: pin floating‑point behavior (rounding mode and FTZ/DAZ denormal handling), control FMA contraction, and enforce deterministic reductions so parallel and scalar paths match within stated tolerances. Scheduler‑wise, bind each operator to the backend that suits it best: push coarse, embarrassingly parallel tessellation to the GPU; keep small, exact predicates on the CPU to leverage higher precision and avoid kernel launch overheads. This hybrid stance gives robust latency distributions across shapes of wildly different complexity while preserving correctness. The practical guidance: optimize a small set of kernels deeply (curve–surface intersection, parametric evaluation, signed distance sampling), expose them through multiple backends, and gate selection via capabilities signaled at runtime.

- Exploit CPU SIMD for evaluation and predicates; offload tessellation and rays to the GPU.

- Offer WASM‑SIMD for portable, sandboxed execution without native install friction.

- Unify numerical modes across backends to avoid backend‑dependent geometry changes.
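
Capability‑gated selection can be as simple as an ordered table of backends. The sketch below is illustrative only: the backend names, capability strings, and kernel names are assumptions, and a real scheduler would also weigh problem size and queue depth.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    name: str
    requires: frozenset  # runtime capabilities this backend needs
    kernels: frozenset   # kernels it implements


# Ordered from most to least preferred; all names are illustrative.
BACKENDS = [
    Backend("gpu", frozenset({"gpu_compute"}),
            frozenset({"tessellate", "raycast", "voxelize"})),
    Backend("cpu_simd", frozenset({"simd"}),
            frozenset({"evaluate", "raycast", "interval"})),
    Backend("wasm_simd", frozenset({"wasm"}),
            frozenset({"evaluate", "tessellate"})),
    # The scalar path needs nothing and covers every kernel, including exact predicates.
    Backend("scalar", frozenset(),
            frozenset({"evaluate", "tessellate", "raycast", "interval",
                       "voxelize", "exact_predicate"})),
]


def select_backend(kernel, capabilities):
    """Return the first preferred backend that implements the kernel and can run here."""
    capabilities = frozenset(capabilities)
    for backend in BACKENDS:
        if kernel in backend.kernels and backend.requires <= capabilities:
            return backend.name
    raise LookupError(f"no backend implements {kernel!r}")
```

For example, `select_backend("tessellate", {"gpu_compute", "simd"})` picks the GPU path, while an exact predicate always lands on the scalar CPU path regardless of what hardware is present.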

Memory, I/O, and latency hiding

Geometry workloads are memory‑bound more often than they are compute‑bound. Use zero‑copy binary formats end‑to‑end so shape graphs and operator inputs move without a parse or copy step. FlatBuffers and Cap'n Proto are designed for in‑place reads; Protocol Buffers must be decoded into objects first, so reserve them for small control messages and carry bulk geometry as separate aligned buffers. In either case, avoid deep pointer graphs that defeat DMA and cache prefetching. Structure data as SoA for hot loops and pack small structs (e.g., 16‑byte aligned parametric ranges) to fit vector widths. On large nodes, adopt NUMA‑aware allocators and thread pinning to keep operator working sets local. For massive assemblies, stage immutable geometry in object stores like S3 or GCS with local NVMe scratch for hot subsets, and implement out‑of‑core streaming: load tiles on demand, evict deterministically, and preserve adjacency metadata. To hide latency, deliver **coarse‑to‑fine progressive results**: speculative precomputation for dependent faces, cancellable tasks when users alter parameters, and JIT/PGO to accelerate hotspot operators such as intersection and trimming. Combine this with ticketed long‑running ops so the UX can poll or subscribe to milestones: coarse mesh ready, watertight mesh ready, mass properties validated. The interplay of memory discipline and incremental delivery yields responsive pipelines even when the underlying kernels are doing heavyweight exact computations.

- Prefer zero‑copy binary protocols and SoA layouts to reduce serialization and cache misses.

- Stage in object stores, cache on NVMe, and stream out‑of‑core with deterministic eviction.

- Expose progressive milestones and cancellable tasks to convert compute time into value time.
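
Deterministic eviction for out‑of‑core tiles can be sketched with a least‑recently‑used cache, where the eviction order depends only on the access sequence. LRU is one possible policy, picked here for brevity; the `TileCache` class and the `loader` callback (standing in for an object‑store or NVMe read) are hypothetical.

```python
from collections import OrderedDict


class TileCache:
    """Fixed-capacity tile cache with least-recently-used eviction."""

    def __init__(self, capacity, loader):
        self.capacity = capacity
        self.loader = loader          # stands in for an object-store or NVMe read
        self._tiles = OrderedDict()   # oldest access first, newest last
        self.evicted = []             # eviction log, useful for replay and audits

    def get(self, tile_id):
        if tile_id in self._tiles:
            self._tiles.move_to_end(tile_id)  # mark as most recently used
            return self._tiles[tile_id]
        tile = self.loader(tile_id)
        self._tiles[tile_id] = tile
        if len(self._tiles) > self.capacity:
            old_id, _ = self._tiles.popitem(last=False)  # drop the oldest
            self.evicted.append(old_id)
        return tile


loads = []


def load_tile(tile_id):
    loads.append(tile_id)
    return f"tile-{tile_id}"


cache = TileCache(capacity=2, loader=load_tile)
for tile_id in ["a", "b", "a", "c", "b"]:
    cache.get(tile_id)
```

Replaying the same access sequence always produces the same loads and evictions, which keeps out‑of‑core runs reproducible and makes cache behavior testable.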

Cost controls for multi‑tenant clouds

Performance without cost control is unsustainable. Use autoscaling with bin‑packing schedulers that understand operator footprints (CPU, GPU, memory, bandwidth) to keep nodes well utilized. Enforce SLO‑aware queues per tenant, separating interactive latency‑critical requests from background batch re‑tessellations. Partition GPUs via MPS or MIG and cap per‑tenant GPU hours; for bursty workloads, hedge with spot instances but checkpoint frequently and tolerate preemption through resumable tickets. At the operator level, allow **graceful degradation**: if budgets are exhausted, switch to approximate queries (e.g., coarser voxel SDF for collision, reduced LOD tessellation) while flagging outputs with provenance and quality levels. Instrument each operator for cost per unit geometry (per face, per million triangles) and publish dashboards that correlate tolerance choices with cost—this ties engineering decisions to spend directly. Finally, throttle pathological requests by complexity metrics (e.g., self‑intersections suspected, sliver faces detected) and return advice to the caller: suggested tolerance relaxations, feature cleanups, or topology simplifications. These measures keep shared clusters healthy, predictable, and fair without compromising correctness for users who pay for exactness.

- Bin‑pack by operator footprint; separate interactive and batch queues.

- Use GPU partitioning and spot hedging with resumable, checkpointed tasks.

- Degrade gracefully with provenance when budgets or SLOs require approximations.
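
Graceful degradation with provenance might look like the following sketch, which picks the finest level of detail that fits a remaining budget and records what was requested versus delivered. The cost table, the budget units, and the shape of the returned record are all assumptions made for illustration.

```python
def plan_tessellation(requested_lod, cost_per_lod, remaining_budget):
    """Pick the finest LOD that fits the budget and report what was done.

    cost_per_lod[i] is the estimated cost of LOD i (0 = coarsest); the cost
    units are whatever the tenant budget is expressed in (an assumption here).
    """
    lod = requested_lod
    while lod > 0 and cost_per_lod[lod] > remaining_budget:
        lod -= 1
    if cost_per_lod[lod] > remaining_budget:
        # Even the coarsest level does not fit: reject rather than guess.
        return {"status": "rejected", "lod": None, "degraded": False,
                "provenance": {"requested_lod": requested_lod,
                               "reason": "budget_exhausted"}}
    degraded = lod < requested_lod
    return {"status": "ok", "lod": lod, "degraded": degraded,
            "provenance": {"requested_lod": requested_lod,
                           "delivered_lod": lod,
                           "reason": "budget_limited" if degraded else "as_requested",
                           "estimated_cost": cost_per_lod[lod]}}


costs = [1, 4, 16, 64]
full = plan_tessellation(1, costs, remaining_budget=20)
reduced = plan_tessellation(3, costs, remaining_budget=20)
refused = plan_tessellation(3, costs, remaining_budget=0.5)
```

The caller always learns whether the result is what it asked for, so a downstream step can decide to accept the coarser output or wait for budget to free up.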

Portability and numerical robustness without compromise

Reproducibility through deterministic math and stable orderings

Reproducibility across hardware is non‑negotiable when geometry underpins engineering decisions. Fix floating‑point behavior explicitly: pin the IEEE‑754 rounding mode, decide once whether denormals are flushed (FTZ/DAZ, which departs from strict IEEE‑754 gradual underflow) and apply that choice on every backend, control FMA contraction, and choose compiler flags per target so scalar, SIMD, and GPU paths conform to the same rounding expectations. For reductions (areas, volumes, centroid integrals), use deterministic schemes: pairwise or Kahan‑compensated summations with fixed traversal orders, so parallel runs reproduce bit‑for‑bit on a given backend and agree within stated tolerances across backends. In parallel kernels, adopt stable work partitionings and deterministic tie‑breakers for events like edge‑edge intersections arriving simultaneously. Publish a backend capability matrix and test suite that locks down acceptable numeric differences; when differences exceed a threshold, tag results as non‑deterministic and attach predicate traces. Determinism should extend to hashing: combine structure hashes with operator versions and tolerance scalars to yield portable IDs. This steadfast control over math modes and orderings arrests the drift that otherwise plagues multi‑backend geometry services, turning the fleet into a single, predictable machine rather than a probabilistic ensemble.

- Standardize FP modes and compiler flags per target; document and enforce them in CI.

- Use deterministic, compensated reductions and stable parallel partitionings.

- Version and hash results with operator semantics and tolerance salts for portability.
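
To make "fixed traversal order" concrete, the sketch below combines per‑chunk partial sums in chunk‑id order with a fixed binary tree, so the result does not depend on which worker finishes first. It shows the pairwise variant only (compensated summation is a separate technique), and the chunking scheme and function names are assumptions.

```python
import random


def pairwise_sum(values):
    """Sum with a fixed binary tree, so rounding depends only on element order."""
    n = len(values)
    if n == 0:
        return 0.0
    if n == 1:
        return values[0]
    mid = n // 2
    return pairwise_sum(values[:mid]) + pairwise_sum(values[mid:])


def deterministic_reduce(partials):
    """Combine (chunk_id, value) partials in chunk-id order, not arrival order."""
    ordered = [value for _, value in sorted(partials, key=lambda p: p[0])]
    return pairwise_sum(ordered)


# Simulated per-face signed areas, split into fixed-size chunks.
rng = random.Random(7)
areas = [rng.uniform(-1e6, 1e6) for _ in range(1000)]
partials = [
    (chunk_id, pairwise_sum(areas[start:start + 100]))
    for chunk_id, start in enumerate(range(0, len(areas), 100))
]
reference = deterministic_reduce(partials)

# Workers may finish in any order; the reduced value must not change.
arrival = list(partials)
rng.shuffle(arrival)
shuffled_result = deterministic_reduce(arrival)
```

The chunk boundaries are part of the contract: changing the chunk size changes the summation tree and may change the last bits, so the chunking scheme belongs in the operator version.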

Robust predicates, exactness where it matters, and disciplined tolerances

Robustness hinges on making correct topological decisions even when coordinates flirt with degeneracy. Use filtered predicates—orient, insphere, and interval‑arithmetic guards—to cheaply classify most cases and escalate to exact rationals or algebraic numbers at decision boundaries. Maintain **interval arithmetic guard bands** through pipelines so later stages know where uncertainty lies. Complement predicates with topology‑preserving snap rounding that converts near‑coincident features into consistent embeddings without violating the Euler–Poincaré constraints. Tolerances deserve first‑class status: model absolute and relative tolerances explicitly, normalize units, and make feature‑scale aware choices for offsets and shells so thin features do not evaporate. For NURBS, deploy reparameterization and knot insertion to maintain continuity during edits; detect slivers and saddles proactively and surface remediation strategies (rebalance knots, rebuild spans, or local refits). The aim is selective exactness: spend exact arithmetic only where topology could flip, keep everything else in fast filtered or interval regimes. Encode these decisions in API responses so downstream consumers can reason about risk and provenance instead of treating geometry as equally trustworthy everywhere.

- Filter with intervals, escalate to exact arithmetic at decision boundaries only.

- Declare and propagate model tolerances; adapt operations to feature scale.

- Apply snap rounding that preserves topology and respects manifold contracts.
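
The filter‑then‑escalate pattern can be sketched with the 2D orientation test. The floating‑point determinant is trusted only when it clears a static error bound; otherwise the same expression is evaluated with exact rationals. The error‑bound coefficient follows the one used in Shewchuk's adaptive predicates, the comparison is slightly more conservative than his, and overflow and underflow are ignored, so treat this as an illustration rather than a hardened predicate.

```python
from fractions import Fraction

EPS = 2.0 ** -53  # unit roundoff for IEEE-754 binary64
# Static filter coefficient, following Shewchuk's orient2d error bound.
ERR_BOUND_A = (3.0 + 16.0 * EPS) * EPS


def sign(x):
    return (x > 0) - (x < 0)


def orient2d(a, b, c):
    """Return (sign, path): +1 if a, b, c turn counter-clockwise, -1 if clockwise,
    0 if collinear; path says whether the float filter or exact math decided."""
    detleft = (a[0] - c[0]) * (b[1] - c[1])
    detright = (a[1] - c[1]) * (b[0] - c[0])
    det = detleft - detright
    if abs(det) > ERR_BOUND_A * (abs(detleft) + abs(detright)):
        return sign(det), "filter"
    # Too close to call in floating point: redo the computation exactly.
    # Fraction(float) converts without rounding, so this path is exact.
    ax, ay = Fraction(a[0]), Fraction(a[1])
    bx, by = Fraction(b[0]), Fraction(b[1])
    cx, cy = Fraction(c[0]), Fraction(c[1])
    exact = (ax - cx) * (by - cy) - (ay - cy) * (bx - cx)
    return sign(exact), "exact"


easy = orient2d((0.0, 0.0), (1.0, 0.0), (0.0, 1.0))
collinear = orient2d((0.0, 0.0), (1.0, 1.0), (2.0, 2.0))
# c sits one unit in the last place above the line through a and b.
near = orient2d((0.5, 0.5), (12.0, 12.0), (24.0, 24.0 + 2.0 ** -48))
```

Returning the path alongside the sign is what lets an API report escalation levels, so callers and dashboards can see how often geometry sits near a decision boundary.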

Watertight outputs with consistent trimming and validation

Producing watertight meshes and valid solids is as much about shared parameter space as it is about triangle counts. When tessellating adjacent faces, sample along shared parametric isocurves and enforce identical splitting so boundary vertices coincide exactly. Enforce **consistent trimming**: evaluate trimming loops with the same adaptive rules used for face tessellation to avoid stitch gaps, and align normals across seams with orientation checks. After meshing, run Euler–Poincaré checks, volume/sign tests for solids, and manifold validation reporting non‑manifold edges, inverted normals, or zero‑area facets. For B‑Reps, verify that loop orientations, edge usages, and vertex valences satisfy topological constraints; for hybrid SDF conversions, validate that extracted isosurfaces match original volumes within tolerance. When repairs are needed, apply localized strategies: merge near‑duplicate vertices under tolerance, retriangulate small patches with error‑bounded Delaunay, and rebuild trims against reparameterized surfaces. Report all fixes in a provenance block so downstream steps can decide whether to accept approximations. The output contract is simple but strict: watertightness, correct orientation, and topological consistency, with violations surfaced before the data leaves the service boundary.

- Share param‑space sampling along seams to keep tessellation watertight.

- Validate with Euler–Poincaré, volume/sign tests, and manifold checks.

- Repair locally, record provenance, and keep global topology intact.
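
A minimal sketch of the topological part of these checks for a closed triangle mesh: every edge must be used exactly twice with opposite directions, and V − E + F must equal 2 − 2g for a surface of genus g. It assumes an indexed mesh and does not cover the geometric tests (volume sign, zero‑area facets by coordinates); the function name and message strings are illustrative.

```python
from collections import Counter


def validate_closed_mesh(num_vertices, triangles, genus=0):
    """Return a list of problems; an empty list means the checks passed."""
    problems = []
    directed = Counter()
    for i, j, k in triangles:
        if len({i, j, k}) < 3:
            problems.append(f"degenerate facet {(i, j, k)}")
        for u, v in ((i, j), (j, k), (k, i)):
            directed[(u, v)] += 1

    undirected = Counter()
    for (u, v), count in directed.items():
        undirected[(min(u, v), max(u, v))] += count

    for (u, v), count in sorted(undirected.items()):
        if count != 2:
            problems.append(f"edge {(u, v)} used {count} times (expected 2)")
        elif directed[(u, v)] != 1 or directed[(v, u)] != 1:
            # Both triangles traverse the edge the same way: a flipped facet.
            problems.append(f"edge {(u, v)} has inconsistent orientation")

    euler = num_vertices - len(undirected) + len(triangles)
    if euler != 2 - 2 * genus:
        problems.append(f"Euler characteristic {euler}, expected {2 - 2 * genus}")
    return problems


# A consistently oriented tetrahedron.
tetra = [(0, 2, 1), (0, 1, 3), (1, 2, 3), (0, 3, 2)]
ok = validate_closed_mesh(4, tetra)
flipped = validate_closed_mesh(4, [(0, 2, 1), (1, 0, 3), (1, 2, 3), (0, 3, 2)])
open_mesh = validate_closed_mesh(4, tetra[:3])
```

Returning a list of specific problems, rather than a single boolean, fits the provenance idea above: a repair step can act on exactly the edges that were reported.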

Testing, observability, and security in multi‑tenant environments

At scale, correctness is a property you continuously measure. Use property‑based fuzzing that targets near‑degenerate configurations—coplanar faces, near‑tangent intersections, sliver loops—and maintain a curated corpus of adversarial models. Apply differential testing against multiple kernels and use metamorphic invariants: rigid transforms, parameter re‑ordering, or unit scaling should not change topological outcomes within stated tolerances. For privacy‑preserving repros, emit **predicate traces** and interval bound logs over encrypted geometry identifiers, enabling remote debugging without IP leakage. Observability should expose per‑operator latency histograms, error rates by predicate escalation level, and cache hit ratios keyed by deterministic hashes. Finally, secure the multi‑tenant surface: sandbox plugins or user‑defined ops in WASM with strict memory/CPU quotas, adopt zero‑trust per‑request signing, and produce selective‑disclosure logs that let trusted engineers diagnose failures without exposing models. These measures transform geometry computation from a black box into an instrumented, safe utility where regressions are caught early, reproducibility is enforced, and user data remains protected.

- Fuzz near‑degenerate cases; enforce metamorphic invariants through CI.

- Log predicate and interval decisions for privacy‑preserving repros.

- Sandbox extensions with WASM and zero‑trust request paths; limit resources per tenant.
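
A metamorphic check can be sketched without a reference kernel: apply random rigid transforms and assert that an invariant survives. Here the invariant is the sign and magnitude of a polygon's signed area; in a real suite it would be a topological outcome such as a Boolean's face count. The function names, the tolerance, and the transform ranges are assumptions.

```python
import math
import random


def signed_area(polygon):
    """Shoelace formula; positive for counter-clockwise vertex order."""
    total = 0.0
    n = len(polygon)
    for i in range(n):
        x0, y0 = polygon[i]
        x1, y1 = polygon[(i + 1) % n]
        total += x0 * y1 - x1 * y0
    return 0.5 * total


def rigid(polygon, angle, tx, ty):
    """Rotate by angle about the origin, then translate by (tx, ty)."""
    c, s = math.cos(angle), math.sin(angle)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in polygon]


def check_rigid_invariance(polygon, trials=100, rel_tol=1e-9, seed=0):
    """Return the transforms under which orientation or area changed."""
    rng = random.Random(seed)  # seeded so any failure can be replayed
    base = signed_area(polygon)
    failures = []
    for _ in range(trials):
        angle = rng.uniform(0.0, 2.0 * math.pi)
        tx, ty = rng.uniform(-100.0, 100.0), rng.uniform(-100.0, 100.0)
        moved = signed_area(rigid(polygon, angle, tx, ty))
        same_sign = (moved > 0) == (base > 0)
        if not same_sign or not math.isclose(moved, base, rel_tol=rel_tol):
            failures.append((angle, tx, ty, moved))
    return failures


l_shape = [(0.0, 0.0), (2.0, 0.0), (2.0, 1.0), (1.0, 1.0), (1.0, 2.0), (0.0, 2.0)]
failures = check_rigid_invariance(l_shape)
```

Any entry in `failures` is a self‑contained repro: the seed and the recorded transform are enough to replay the case without shipping the original model.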

Conclusion

Principles to internalize for cloud‑native geometry

Cloud‑native geometry kernels can deliver elasticity and reach, but only when algorithmic and systems choices co‑evolve. Treat the model as a **reproducible, streamed computation graph** anchored by content‑addressable nodes; build services that transform this graph under explicit tolerance and version contracts; and ship progressive results as a feature, not a workaround. Determinism and observability should be product features: stable hashes, operator semantic versions, and predicate traces build trust and unlock caching at scale. Representations will be hybrid by default—trimmed NURBS for intent, SDFs for robust CSG and offsets, voxels for sampling and analysis—with both manifold and non‑manifold topologies elevated to first‑class status. Portability requires disciplined numerical baselines; robustness requires filtered predicates and exactness at boundaries; performance requires heterogeneous compute and adaptive algorithms that respect param‑space structure. When stitched together, these principles convert geometry from a monolithic library call into a fleet‑coordinated service that scales with teams, datasets, and devices without eroding correctness or intent.

- Anchor on shape graphs and immutable history; keep compute stateless and streaming.

- Make determinism and tolerance semantics visible at the API boundary.

- Use hybrid representations to balance exactness, robustness, and throughput.

Pragmatic path forward and near‑term advantages

Start pragmatically. Isolate hot loops—curve–surface intersections, trimming evaluations, distance queries—and add filtered predicates with interval guard bands. Introduce progressive tessellation in visualization clients and wire resumable tickets into long‑running topology ops. Establish tolerance discipline: normalize units, record absolute and relative tolerances at ingest, and salt all hashes with these values. Stand up differential tests against a second kernel and add metamorphic checks to CI so regressions surface as soon as they appear. From a compute perspective, pilot GPU tessellation and CPU‑vectorized evaluation while keeping **WASM‑SIMD** fallbacks for safe plugin ecosystems. For cost and reliability, bin‑pack workloads by operator footprint, hedge with spot instances behind checkpoints, and degrade gracefully with provenance when budgets demand it. The near future favors hybrid representations, heterogeneous compute, and shared robustness benchmarks. Teams that treat geometry as a **reproducible, streamed computation graph**—observable, hash‑addressed, and progressively deliverable—will iterate faster, scale farther, and set the pace for product visualization, additive manufacturing preparation, and engineering computation in the cloud.

- Isolate and optimize hot kernels; add filtered predicates first.

- Ship progressive results early; wire tickets and cache invalidation.

- Harden with differential and metamorphic testing before scaling out.

- Exploit heterogeneous compute while enforcing shared numeric baselines.

Also in Design News

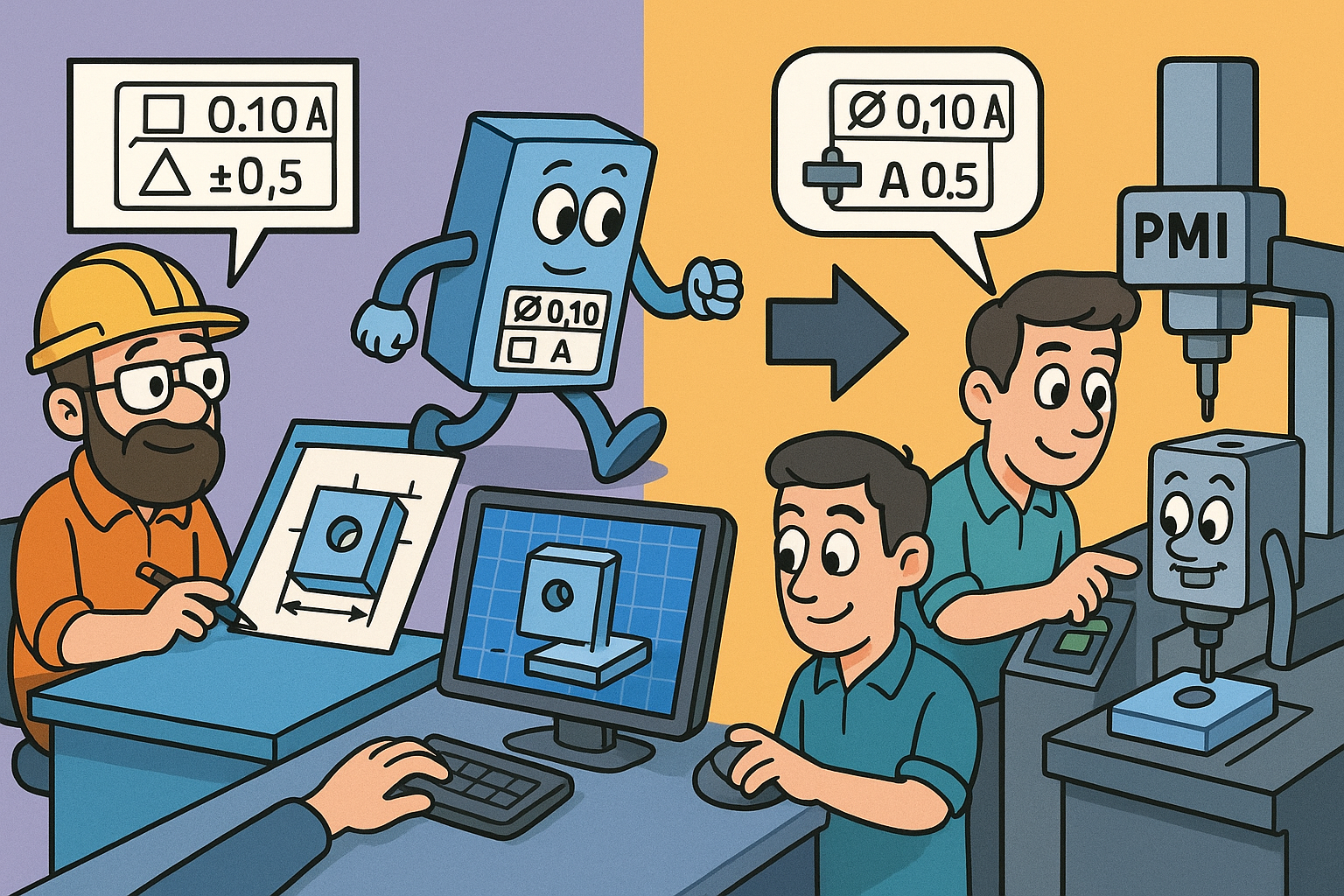

Design Software History: From GD&T to Executable PMI: The Evolution of Tolerances in CAD, CAM, and Metrology

March 29, 2026 13 min read

Read More

Revit Tip: Profile Family Standards for Revit Sweeps and Reveals

March 29, 2026 2 min read

Read MoreSubscribe

Sign up to get the latest on sales, new releases and more …